I raised this point in yesterday’s call, but I’ll repeat it here for posterity. We’re being a bit too loose with our terminology for some of these transform descriptions.

With regard to the path-to-white methods, the group has been using “hue-linear” or “chromaticity preserving” to mean straight lines in chromaticity/ratio space, which is definitely the wrong terminology. I proposed using the terms “dominant wavelength preserving” or “white-light-mixing” instead, since the method more correctly adheres to those behaviors.

The main point of confusion for me was that we’re simultaneously discussing a tonemap/tonescale operator which is truly chromaticity preserving in the correct technical sense (the chromaticity coordinates of each stimulus are left unchanged). So we should clearly distinguish between the two.
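To make the technical sense concrete, here is a minimal sketch of a tonescale applied in a way that leaves chromaticity untouched. The curve `tonescale` is a hypothetical Reinhard-style stand-in (not the operator under discussion), the max-RGB norm is an assumption, and the matrix is the standard linear sRGB/Rec.709 to XYZ (D65) matrix. The only point is that scaling all three channels by one common factor cannot move the xy coordinates.

```python
import numpy as np

# Linear sRGB / Rec.709 -> CIE XYZ (D65)
RGB_TO_XYZ = np.array([
    [0.4124, 0.3576, 0.1805],
    [0.2126, 0.7152, 0.0722],
    [0.0193, 0.1192, 0.9505],
])

def xy(rgb):
    """CIE xy chromaticity of a linear RGB triplet."""
    X, Y, Z = RGB_TO_XYZ @ np.asarray(rgb, dtype=float)
    total = X + Y + Z
    return np.array([X / total, Y / total])

def tonescale(v):
    """Hypothetical compressive curve, used only for illustration."""
    return v / (1.0 + v)

def chromaticity_preserving_tonemap(rgb):
    """Tonescale a norm (assumed: max RGB), then scale all channels equally."""
    rgb = np.asarray(rgb, dtype=float)
    norm = rgb.max()
    if norm <= 0.0:
        return np.zeros(3)
    return rgb * (tonescale(norm) / norm)

scene = np.array([4.0, 1.0, 0.25])
display = chromaticity_preserving_tonemap(scene)
xy_shift = np.abs(xy(display) - xy(scene)).max()  # zero up to rounding
```

Because the RGB ratios are unchanged, `xy_shift` is zero to floating-point precision. A path-to-white method, by contrast, deliberately moves the chromaticity toward the white point.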

@Derek to address your points a bit…

I’d be careful about focusing on “straight lines” by themselves; it should instead be qualified that we’re discussing “straight lines in the chromaticity domain”.

The isolines (invariant lines) of stimuli which produce a constant sensation of hue, but vary in chroma, tend to be curved in the chromaticity domain. Recall that a straight line in the chromaticity domain can be produced by additively mixing any two (non-equal) light sources, e.g. colored and white in Abney’s case. It was his experiments which showed that this situation (a straight line to the adopted white in the chromaticity domain) does not produce a hue isoline.

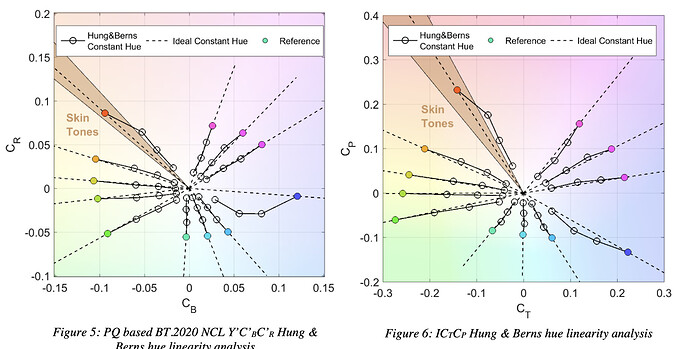
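The straight-line property of additive mixtures can be checked numerically. This is a sketch under assumptions: the two stimuli are illustrative choices (the XYZ of the sRGB blue primary and a D65 white), and the helper names are hypothetical. It shows only that every mixture lands on the line joining the two chromaticities, not that the line is a hue isoline.

```python
import numpy as np

def xy_from_XYZ(XYZ):
    """CIE xy chromaticity from tristimulus values."""
    XYZ = np.asarray(XYZ, dtype=float)
    return XYZ[:2] / XYZ.sum()

# Illustrative stimuli (assumptions, not taken from the discussion)
COLOR_XYZ = np.array([0.1805, 0.0722, 0.9505])  # sRGB blue primary
WHITE_XYZ = np.array([0.9505, 1.0000, 1.0890])  # D65 white

def mix(t):
    """Additive mixture: t = 0 is the colored light, t = 1 is white."""
    return (1.0 - t) * COLOR_XYZ + t * WHITE_XYZ

def collinearity_error(p, a, b):
    """2D cross product of (b - a) and (p - a); zero when p is on line ab."""
    d1, d2 = b - a, p - a
    return d1[0] * d2[1] - d1[1] * d2[0]

a = xy_from_XYZ(COLOR_XYZ)
b = xy_from_XYZ(WHITE_XYZ)
errors = [abs(collinearity_error(xy_from_XYZ(mix(t)), a, b))
          for t in np.linspace(0.0, 1.0, 11)]
max_error = max(errors)  # zero up to rounding
```

Note that the mixtures are not evenly spaced along the line, since each stimulus is weighted by its X + Y + Z sum.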

The term “hue-linear” describes the desire for color models to predict/model these curved hue isolines. The base data is the curved hue isolines from various experiments, and the desire is for a color model (like CIELAB or ICtCp) to transform these curved lines into straight lines in its own domain. So in the chromaticity domain hue isolines will be curved, while in the ideal/perfect color model hue isolines will be straight. That would basically just mean that we’ve isolated the perceptual “hue” attribute of the experimental dataset, and that it is decoupled/orthogonal to the lightness and chroma dimensions.

So in judging the quality/performance of a color model, we discuss the “linearity” of its hues, or its ability to make hue isolines straight, as a sign of its predictive/descriptive capability.
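One simple way to put a number on this, as a sketch only: take the points of a single hue isoline after transforming them into a model’s opponent plane, and measure how much the hue angle varies along it. The function name and the metric are assumptions for illustration (not the measure used in any particular paper), and the two point sets below are synthetic, not experimental data.

```python
import numpy as np

def hue_angle_spread_deg(a, b):
    """Range of hue angle (degrees) along one isoline in an opponent plane.

    a, b: opponent coordinates of the isoline's points (e.g. a*/b* or Ct/Cp).
    A perfectly hue-linear model gives 0 for a true constant-hue dataset.
    """
    angles = np.unwrap(np.arctan2(np.asarray(b, float), np.asarray(a, float)))
    return np.degrees(angles.max() - angles.min())

chroma = np.array([10.0, 20.0, 30.0, 40.0, 50.0])

# Synthetic case 1: isoline maps to a straight radial line at 30 degrees
h_straight = np.radians(30.0)
straight = hue_angle_spread_deg(chroma * np.cos(h_straight),
                                chroma * np.sin(h_straight))

# Synthetic case 2: hue angle drifts by 0.2 degrees per unit of chroma
h_curved = np.radians(30.0 + 0.2 * chroma)
curved = hue_angle_spread_deg(chroma * np.cos(h_curved),
                              chroma * np.sin(h_curved))
```

Here `straight` comes out as zero (to rounding) and `curved` as 8 degrees; a larger spread would indicate that the model is less predictive of that isoline.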

For example, this is a plot from the Dolby ICtCp White Paper…

All that to say, in the chromaticity domain hue isolines are generally curved. In the idealized color model domain hue isolines should be straight; curves are a sign that the model is less predictive of that dataset.