Hello again,

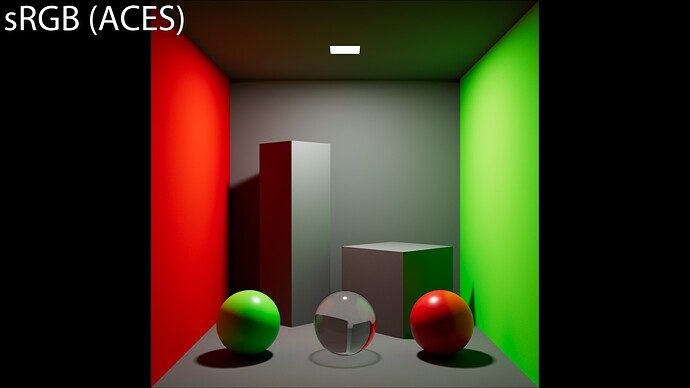

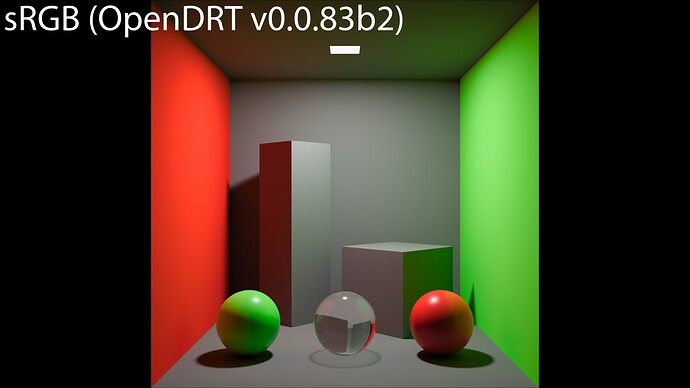

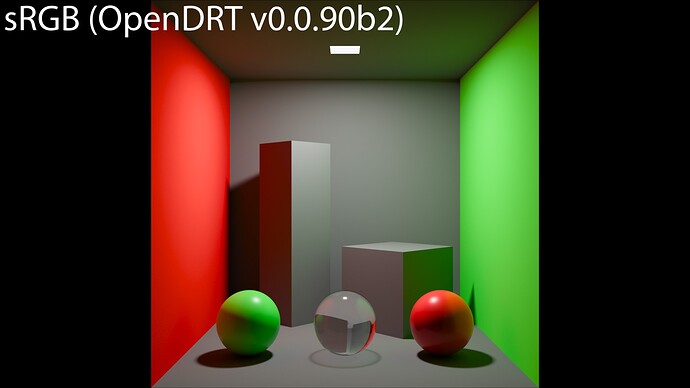

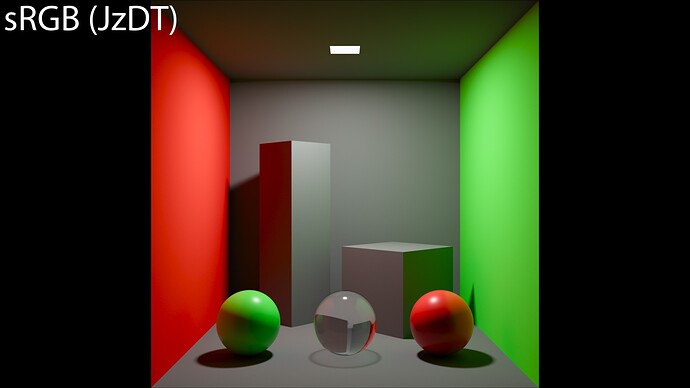

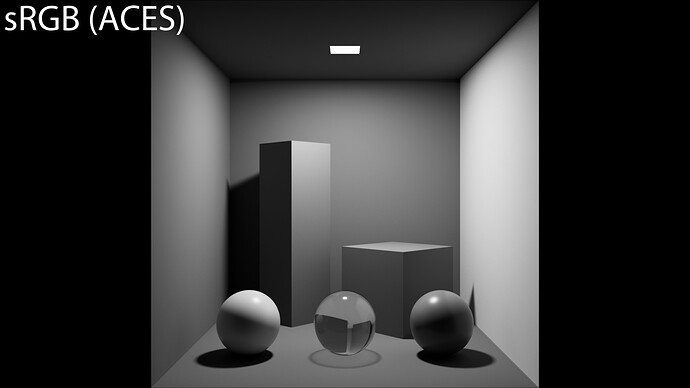

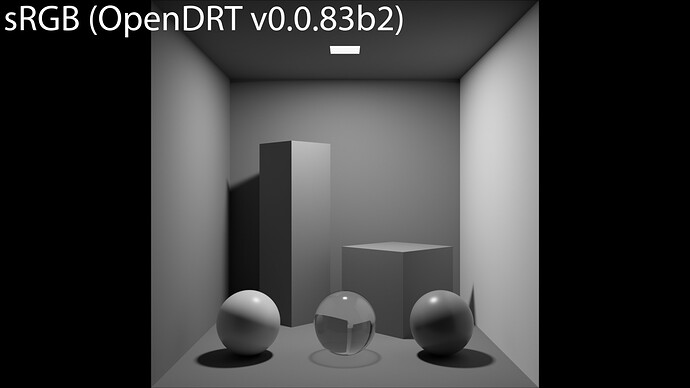

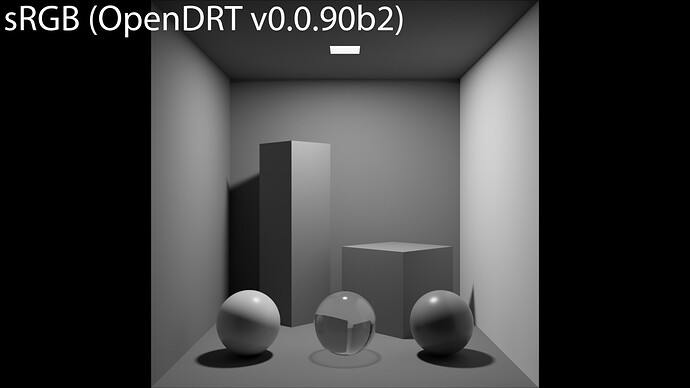

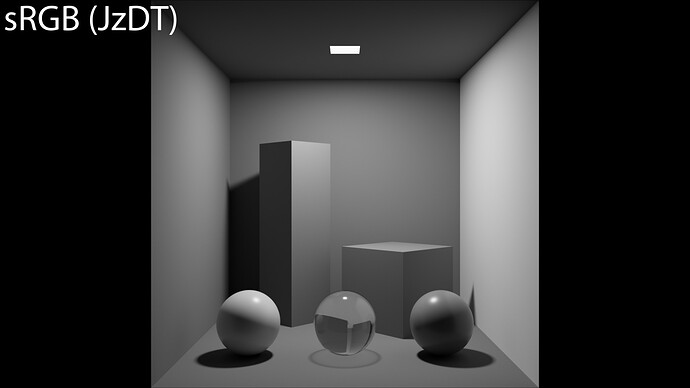

Some more tests on a Cornell Box. Here is the setup: linear-sRGB textures, rendered in ACEScg and displayed in sRGB. Here we go!

ACEScg render displayed in sRGB (ACES)

ACEScg render displayed in sRGB (OpenDRT v0.0.83b2)

ACEScg render displayed in sRGB (OpenDRT v0.0.90b2)

ACEScg render displayed in sRGB (JzDT)

And some luminance tests:

desaturated ACEScg render displayed in sRGB (ACES)

desaturated ACEScg render displayed in sRGB (OpenDRT v0.0.83b2)

desaturated ACEScg render displayed in sRGB (OpenDRT v0.0.90b2)

desaturated ACEScg render displayed in sRGB (JzDT)
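
For anyone who wants to reproduce this kind of luminance check, here is a minimal sketch. It assumes the desaturation is done on scene-linear ACEScg values, before the display transform, by replacing each pixel with its AP1 luminance. That is an assumption, not necessarily how the renders above were made; the function name and sample pixel values are hypothetical. The weights are the Y row of the AP1-to-XYZ matrix from the ACES reference implementation.

```python
# AP1 (ACEScg) luminance weights: the Y row of the AP1 -> CIE XYZ matrix.
AP1_LUMA = (0.2722287168, 0.6740817658, 0.0536895174)


def desaturate_acescg(rgb):
    """Return an achromatic ACEScg triplet with the same AP1 luminance as rgb.

    rgb is a scene-linear ACEScg (R, G, B) tuple. Assumption: desaturation
    happens before the display transform, not on display-referred values.
    """
    y = sum(w * c for w, c in zip(AP1_LUMA, rgb))
    return (y, y, y)


# Hypothetical scene-linear pixel values, for illustration only.
red_wall = (0.45, 0.05, 0.03)
grey_wall = desaturate_acescg(red_wall)
white = desaturate_acescg((1.0, 1.0, 1.0))
```

Because the weights sum to 1, achromatic pixels are left unchanged, so any difference between the desaturated images comes from how each display transform handles luminance.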

I think the same observations can be made as for the previous renders. I still believe there is some ground truth somewhere that we can rely on, rather than subjective or aesthetic judgement. I believe this is what is hinted at here and here.

Hope it helps… As always, nice work, Jed!

Chris