Hello everyone, it is quite intimidating to reply to this thread. I'll probably be writing only obvious stuff. I am a lighting lead at Illumination and a teacher at ENSI (Avignon). We use ACES at both places for full CG renders.

One thing I would like to mention is that we have no color scientist, neither at the studio nor at the school. So we are pretty satisfied with the way ACES works out of the box: it is simple and gives a pleasing look. We do not modify or tweak anything in the ACES OCIO config; our images basically rely on a full ACES workflow, from IDT to ODT.

My top requirement would be to keep it simple, as stated in the Group Proposal. ACES has been great for us because it was pretty much plug and play, and I think many non-technical image makers would say the same.

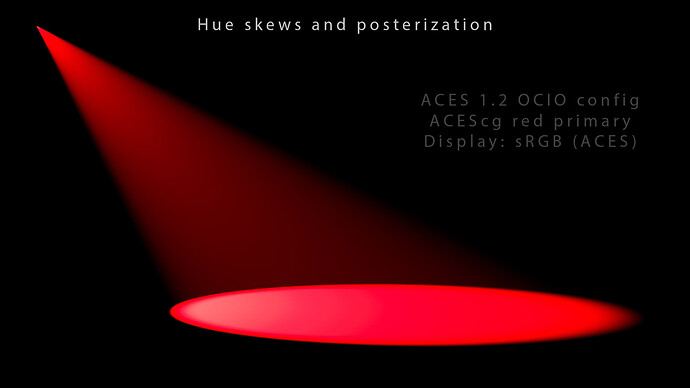

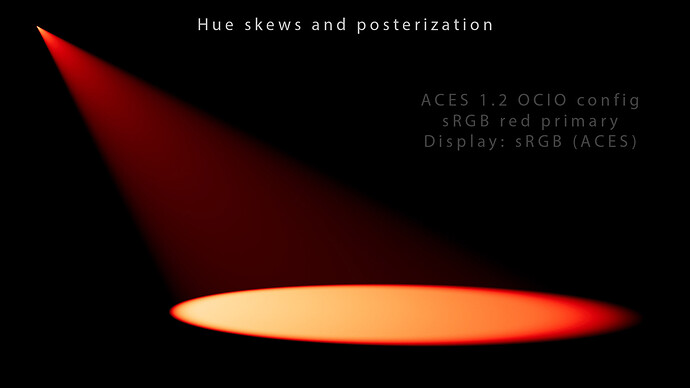

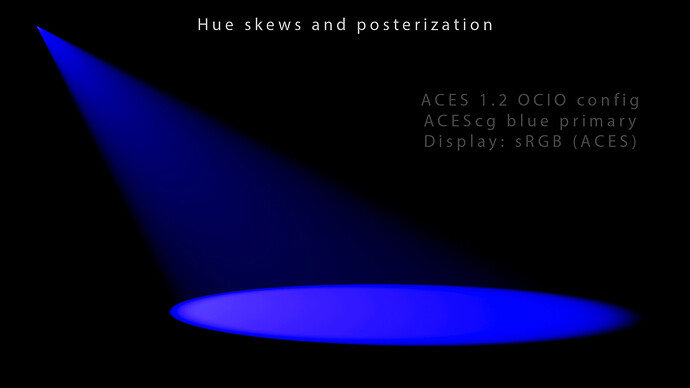

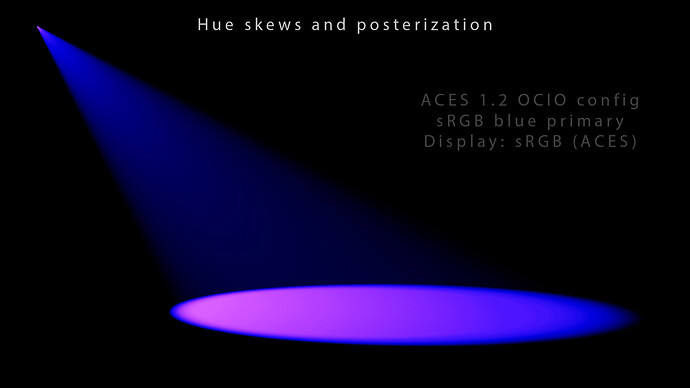

My top issue is that the current ODTs clip, which gives us artifacts when we light with saturated colors such as ACEScg or Rec.2020 primaries. We end up with a lot of posterization in our renders, and the only temporary fix we have found is to use the gamut mapping (GM) algorithm, which helps as a side effect. Hue skews are also an issue, and they are noticeable on the path to white (see the examples below).
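To make the clipping issue concrete, here is a minimal sketch of the clip stage in isolation. It is not the ACES ODT: a naive per-channel clip to [0, 1] stands in for the display limit, and the ramp values are made up. A smooth ramp of a very saturated red collapses to a single display value, which reads as a flat, posterized patch.

```python
import numpy as np

# Hypothetical scene-linear ramp: a pure red whose intensity rises smoothly
# across a lit surface, from 1.0 to 4.0 (values chosen for illustration only).
intensity = np.linspace(1.0, 4.0, 16)
zeros = np.zeros_like(intensity)
scene = np.stack([intensity, zeros, zeros], axis=1)

# Naive per-channel clip to the displayable range [0, 1] (stand-in, not the ODT).
display = np.clip(scene, 0.0, 1.0)

# Every sample of the ramp lands on the same display value: the gradient is gone.
unique_values = np.unique(display, axis=0)
print(len(unique_values), unique_values[0])
```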

My wishlist would be to improve the hue paths. As you may know, I posted a few frames with hue skews and posterization on ACEScentral this summer, and I would love to see these artifacts disappear. I'll repost some of these renders in this thread for clarity. If I understood correctly, these issues are the result of the per-channel lookup in the current Output Transform (OT).
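The per-channel mechanism behind the hue skew can be shown in a few lines. This is a sketch under loud assumptions: a simple Reinhard-style curve `x / (1 + x)` stands in for the real ACES tonescale (which is different), and the input color is a made-up saturated red. Applying the same curve to R, G and B independently compresses the large red channel more than the small green and blue ones, so their ratios change and the hue drifts toward orange as exposure rises.

```python
import colorsys

def tonemap_per_channel(rgb):
    """Stand-in tone curve (Reinhard x / (1 + x)), applied to each channel independently."""
    return tuple(c / (1.0 + c) for c in rgb)

def hue_degrees(rgb):
    """HSV hue in degrees; colorsys expects channel values in [0, 1]."""
    h, _, _ = colorsys.rgb_to_hsv(*rgb)
    return h * 360.0

base = (1.0, 0.1, 0.05)  # hypothetical saturated red, scene-linear
hues = []
for exposure in (1, 4, 16, 64):
    scene = tuple(c * exposure for c in base)
    hues.append(hue_degrees(tonemap_per_channel(scene)))

# The hue angle rises with exposure: red drifting toward orange.
print([round(h, 1) for h in hues])
```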

I am available if you need more information, renders or plots to explore any solution (such as gamut mapping or max(RGB), for instance). This is a pretty naive post from a lighting artist, I reckon, since I have never checked the ACES ODT on an HDR monitor, for example.
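For comparison, here is a minimal sketch of the max(RGB) idea, using the same stand-in Reinhard curve and the same made-up red (neither comes from any ACES proposal). The curve is applied to max(R, G, B) only, and all three channels are scaled by the same factor, so channel ratios and therefore hue stay fixed. The known trade-off is visible in the same code: bright saturated colors never desaturate toward white.

```python
import colorsys

def tonemap_max_rgb(rgb):
    """Apply a stand-in curve f(m) = m / (1 + m) to m = max(R, G, B), then scale
    every channel by f(m) / m, which preserves channel ratios (and hue)."""
    m = max(rgb)
    if m <= 0.0:
        return tuple(rgb)
    scale = 1.0 / (1.0 + m)  # equals f(m) / m for this particular curve
    return tuple(c * scale for c in rgb)

def hue_degrees(rgb):
    """HSV hue in degrees; colorsys expects channel values in [0, 1]."""
    h, _, _ = colorsys.rgb_to_hsv(*rgb)
    return h * 360.0

base = (1.0, 0.1, 0.05)  # same hypothetical saturated red
outputs = [tonemap_max_rgb(tuple(c * e for c in base)) for e in (1, 4, 16, 64)]
hues = [hue_degrees(o) for o in outputs]

# Same hue at every exposure, but no path to white either.
print([round(h, 3) for h in hues])
```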

In this first render, when overexposed, an sRGB red primary goes orange and an sRGB blue primary goes purple/violet (hue skew/shift).

In this second render, when overexposed, a Rec.2020 red primary gets posterized and a Rec.2020 blue primary goes purple/violet (hue skew/shift).

In this third render, an ACEScg red primary produces some artifacts at the point of impact. This is a plane with a mid-gray shader at 0.18.

In this fourth render, an sRGB red primary goes Dorito orange when overexposed. This is a volumetric light, by the way.

In this fifth render, an ACEScg blue primary produces some artifacts at the point of impact.

In this sixth render, an sRGB blue primary skews toward purple/violet when overexposed. This is probably the most obvious example.
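The ACEScg primaries in the third and fifth renders lie outside what an sRGB display can show. One quick way to see it is to express them in linear Rec.709/sRGB primaries and look for negative components, since a display cannot emit negative light and those values get clipped somewhere downstream. A sketch, assuming the rounded matrix below (as commonly found in OCIO ACES configs, with Bradford D60 to D65 adaptation):

```python
import numpy as np

# ACEScg (AP1) -> linear Rec.709/sRGB primaries, rounded to 4 decimals.
# Assumption: values as commonly published in OCIO ACES configs (Bradford CAT).
ACESCG_TO_LIN_REC709 = np.array([
    [ 1.7051, -0.6218, -0.0833],
    [-0.1303,  1.1408, -0.0105],
    [-0.0240, -0.1290,  1.1530],
])

acescg_red = np.array([1.0, 0.0, 0.0])
acescg_blue = np.array([0.0, 0.0, 1.0])

red_709 = ACESCG_TO_LIN_REC709 @ acescg_red
blue_709 = ACESCG_TO_LIN_REC709 @ acescg_blue
print(red_709)   # negative G and B: outside the sRGB gamut
print(blue_709)  # negative R and G: outside the sRGB gamut
```

Neutral values map to neutral values (each row sums to about 1), so only saturated colors are affected.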

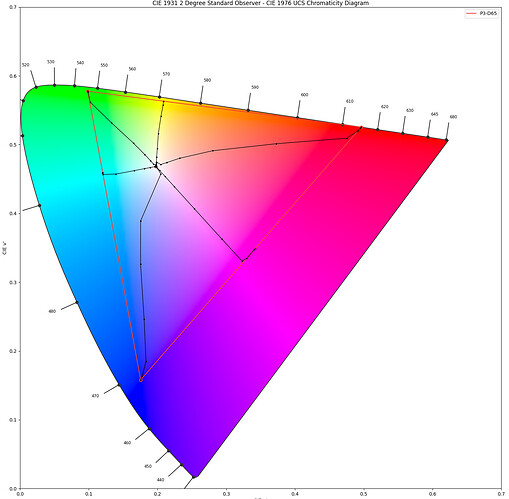

Here is a plot of the path to white of ACEScg values through the P3D65 ODT. I believe the posterization/clipping can be observed on the edges of the gamut, and several hue skews are also noticeable.

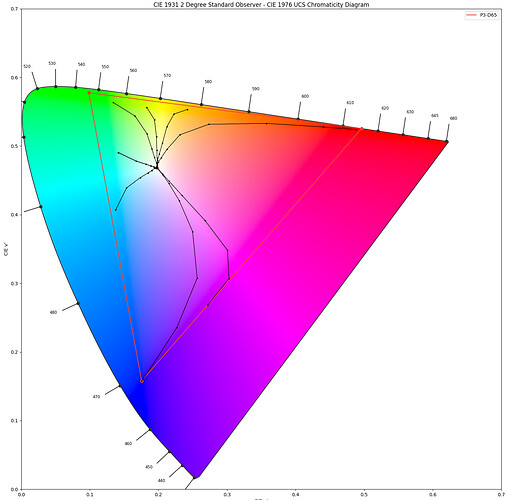

Finally, here is a plot of sRGB values through the P3D65 ODT. We can clearly see the blue going purple and the red going orange on the path to white, as in the first render.
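For readers who want to build this kind of plot themselves: once the ODT output is in display-linear light, each sample can be placed on a chromaticity diagram, and tracing one color's samples as exposure rises gives its path to white. This is a sketch, not how the plots above were made. It assumes a CIE 1931 xy diagram and the standard P3-D65 RGB-to-XYZ matrix.

```python
import numpy as np

# Linear P3 primaries with D65 white -> CIE XYZ (standard Display P3 matrix).
P3D65_TO_XYZ = np.array([
    [0.4865709, 0.2656677, 0.1982173],
    [0.2289746, 0.6917385, 0.0792869],
    [0.0000000, 0.0451134, 1.0439444],
])

def p3d65_to_xy(rgb_linear):
    """Convert display-linear P3-D65 RGB (N x 3) to CIE 1931 xy chromaticities.
    Assumes non-black samples (X + Y + Z > 0)."""
    xyz = np.asarray(rgb_linear, dtype=float) @ P3D65_TO_XYZ.T
    total = xyz.sum(axis=1, keepdims=True)
    return xyz[:, :2] / total

samples = np.array([
    [1.0, 0.0, 0.0],  # P3 red primary
    [1.0, 1.0, 1.0],  # display white
])
print(p3d65_to_xy(samples).round(4))
```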

These great plots were done by our amazing in-house developer Christophe Verspieren. Thanks to him for generating them and letting me share them.

Update: I have fixed our plots to show display-linear output light, by rolling the values through the inverse display EOTF. Thanks, everyone!
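On the update: the P3D65 ODT ends by encoding display-linear values with the inverse of the display EOTF, so moving between code values and display-linear light is one power function in each direction. A minimal sketch, assuming a pure 2.6 gamma for the P3D65 output (check the ODT you actually use):

```python
import numpy as np

# Assumption: pure 2.6 power-law encoding, as in the ACES 1.x P3D65 ODT.
DISPLAY_GAMMA = 2.6

def display_eotf(code_values):
    """EOTF: encoded code values in [0, 1] -> relative display-linear light."""
    return np.clip(code_values, 0.0, 1.0) ** DISPLAY_GAMMA

def inverse_display_eotf(linear):
    """Inverse EOTF: relative display-linear light in [0, 1] -> code values."""
    return np.clip(linear, 0.0, 1.0) ** (1.0 / DISPLAY_GAMMA)

code = np.array([0.0, 0.5, 1.0])
linear = display_eotf(code)
print(linear)
print(inverse_display_eotf(linear))  # round trip back to the code values
```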

Thanks for your help,

Chris