I just want to share a few more experiments that may provide some talking points.

The earlier experiments tried to understand how different input/output white points affect a normally exposed ColorChecker, and how those changes relate to each other when compared inside the CAM.

I also ran a few experiments comparing the output of some popular rendering transforms when fed back into the CAM.

The image I used for evaluation was the same (the ColorChecker from the ACES Synthetic Image), but with discrete half-stop exposures from 10 stops under to 10 stops over.

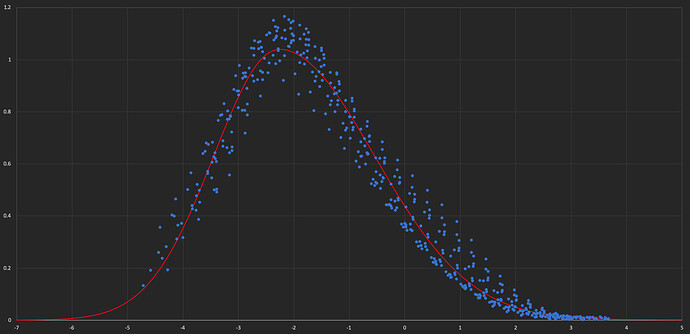

This example is ARRI Reveal Rec.2020 (converted to sRGB).
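The half-stop exposure series used for these images can be enumerated as below. This is a minimal sketch that assumes each step is applied as a linear gain on the scene-linear ACES values and that the normal (0 stop) exposure is included, which gives 41 images; `exposure_ladder` is a hypothetical helper name.

```python
def exposure_ladder(stops_under=10, stops_over=10, steps_per_stop=2):
    """Return (stops, linear_gain) pairs for a bracketed exposure series.

    ASSUMPTION: exposure is a linear gain on scene-linear data, so one stop
    doubles the values and k half-stops scale them by 2 ** (k / 2).
    """
    ladder = []
    for k in range(-stops_under * steps_per_stop, stops_over * steps_per_stop + 1):
        stops = k / steps_per_stop
        ladder.append((stops, 2.0 ** stops))
    return ladder


ladder = exposure_ladder()
print(len(ladder))  # 41: twenty half-stops each side plus the normal exposure
print(ladder[0], ladder[20], ladder[-1])
```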

The first experiment tried to understand how M (colorfulness) relates to J (lightness) in the rendered image.

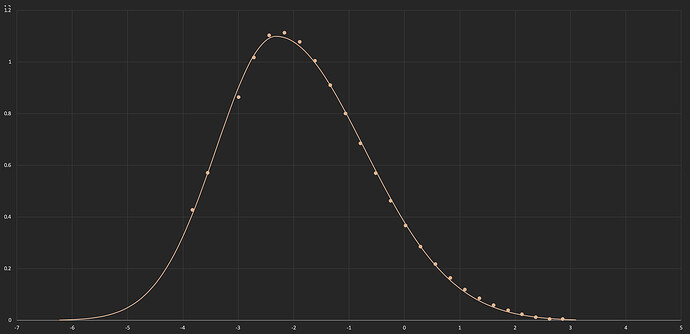

I tried several different curve fits, and one that seemed to work well was an asymmetrical Gaussian.
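The post does not give the exact parametrization, so the sketch below assumes one common form of asymmetrical Gaussian: a single peak at `mu` with separate widths on the left and right. To stay dependency-free it replaces a proper nonlinear optimizer (such as SciPy's `curve_fit`) with a coarse grid search over the shape parameters, solving the amplitude in closed form at each grid point. `asym_gaussian`, `fit_asym_gaussian` and `frange` are hypothetical names, and the data is synthetic.

```python
import math


def asym_gaussian(x, amp, mu, sigma_left, sigma_right):
    """Gaussian peak at mu with a different width on each side (assumed form)."""
    sigma = sigma_left if x < mu else sigma_right
    return amp * math.exp(-((x - mu) ** 2) / (2.0 * sigma ** 2))


def fit_asym_gaussian(xs, ys, mu_grid, sigma_grid):
    """Least-squares fit by grid search over (mu, sigma_left, sigma_right).

    For fixed shape parameters the model is linear in amp, so the optimal
    amplitude is sum(y * g) / sum(g * g).
    """
    best = None
    for mu in mu_grid:
        for sl in sigma_grid:
            for sr in sigma_grid:
                g = [asym_gaussian(x, 1.0, mu, sl, sr) for x in xs]
                gg = sum(v * v for v in g)
                if gg == 0.0:
                    continue
                amp = sum(y * v for y, v in zip(ys, g)) / gg
                sse = sum((y - amp * v) ** 2 for y, v in zip(ys, g))
                if best is None or sse < best[0]:
                    best = (sse, amp, mu, sl, sr)
    return best[1:]  # (amp, mu, sigma_left, sigma_right)


def frange(start, stop, step):
    """Evenly spaced floats from start to stop inclusive."""
    n = int(round((stop - start) / step))
    return [start + i * step for i in range(n + 1)]


# Synthetic check: sample a known curve and fit it back.
xs = frange(-4.0, 4.0, 0.25)
ys = [asym_gaussian(x, 12.0, 0.5, 1.0, 2.0) for x in xs]
amp, mu, sl, sr = fit_asym_gaussian(xs, ys, frange(-1.0, 1.0, 0.25), frange(0.5, 3.0, 0.25))
print(round(amp, 3), mu, sl, sr)
```

Because the true parameters lie exactly on the grid, the fit recovers amp = 12, mu = 0.5, sigma_left = 1.0 and sigma_right = 2.0; measured data would need a finer grid or a gradient-based refinement step.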

Here is ColorChecker patch 2:

The dots are the difference in M (ACES input M vs. rendered M) plotted against log J (without tone mapping), and the curve is an asymmetrical Gaussian fit.

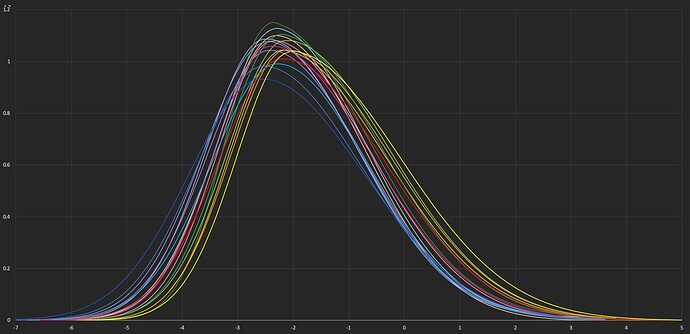
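The scatter points for one patch can be assembled roughly as follows. This is a sketch under assumptions the post does not spell out: the difference is taken as rendered M minus input M (so positive means a colorfulness boost), the log is the natural log, and exposures whose input J is zero are skipped. `delta_m_vs_log_j` is a hypothetical helper and the numbers are made up for illustration.

```python
import math


def delta_m_vs_log_j(j_in, m_in, m_out):
    """Return (log J_in, delta M) pairs, one per exposure of a single patch.

    ASSUMPTIONS (not stated in the post): delta M = rendered M - input M,
    log is the natural log, and exposures with J_in <= 0 are skipped
    because the log is undefined there.
    """
    points = []
    for j, mi, mo in zip(j_in, m_in, m_out):
        if j <= 0.0:
            continue
        points.append((math.log(j), mo - mi))
    return points


# Hypothetical J/M values for three exposures of one patch (not measured data).
pts = delta_m_vs_log_j([0.0, 10.0, 40.0], [5.0, 20.0, 30.0], [0.0, 26.0, 27.0])
print(pts)
```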

The ColorChecker obviously has a very limited gamut, and (as Pekka has pointed out) these curves may not apply to highly saturated colors. I still think it is useful to see what this part of the gamut is doing, because it is an important range in which a rendering will be judged pleasing, unpleasing, or uncanny. Because many (most?) of the midtones receive a saturation boost, and this is a Rec.2020 output, many patches land outside Rec.709 even for this limited gamut.
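One way to check the Rec.709 observation for individual patches is to convert the rendered Rec.2020 values to Rec.709 primaries and look for negative components. The sketch assumes linear-light input (any display transfer function already removed) and uses the standard Rec.2020-to-Rec.709 matrix, i.e. the inverse of the ITU-R BT.2087 Rec.709-to-Rec.2020 matrix, rounded to four decimals; both spaces share a D65 white, so no adaptation step is needed. `outside_rec709` and its tolerance are illustrative choices, not part of the original analysis.

```python
# Linear Rec.2020 RGB -> linear Rec.709 RGB (inverse of the BT.2087 matrix).
REC2020_TO_REC709 = (
    (1.6605, -0.5876, -0.0728),
    (-0.1246, 1.1329, -0.0083),
    (-0.0182, -0.1006, 1.1187),
)


def outside_rec709(rgb2020, tol=1e-6):
    """True if a linear Rec.2020 color needs a negative Rec.709 component."""
    rgb709 = [sum(m * c for m, c in zip(row, rgb2020)) for row in REC2020_TO_REC709]
    return any(c < -tol for c in rgb709)


print(outside_rec709((0.18, 0.18, 0.18)))  # neutral gray: False
print(outside_rec709((0.05, 0.30, 0.05)))  # saturated green: True
```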

Here is a Gaussian curve best-fit to all ColorChecker patches.

I excluded most of the very dark patches (all nearly indistinguishable black) from the fit, since they had noisy relative J/M values, likely caused by the LUTs or limited precision in this region.
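Pooling the samples for an all-patch fit while dropping near-black ones might look like the sketch below. The post does not state the exact exclusion criterion, so a simple lightness cutoff `min_j` is assumed here; `pool_for_fit` and the sample values are hypothetical. The pooled J values can then be log-transformed before fitting.

```python
def pool_for_fit(points_by_patch, min_j):
    """Pool (J, delta M) samples from every patch, dropping near-black ones.

    ASSUMPTION: 'very dark' is approximated by a hypothetical lightness
    cutoff min_j; the original exclusion criterion is not specified.
    """
    xs, ys = [], []
    for patch_id in sorted(points_by_patch):
        for j, dm in points_by_patch[patch_id]:
            if j >= min_j:
                xs.append(j)
                ys.append(dm)
    return xs, ys


# Hypothetical samples: (J, delta M) per ColorChecker patch number.
data = {
    2: [(0.2, 9.0), (15.0, 4.0), (60.0, 1.5)],
    13: [(0.1, -7.0), (30.0, 6.0)],
}
xs, ys = pool_for_fit(data, min_j=1.0)
print(xs, ys)  # [15.0, 60.0, 30.0] [4.0, 1.5, 6.0]
```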