Hi,

I remember it was mentioned in the meetings that blue turns magenta on some occasions, like in the blue bar or in the rendered blue light cone.

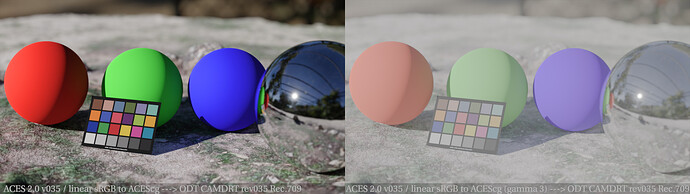

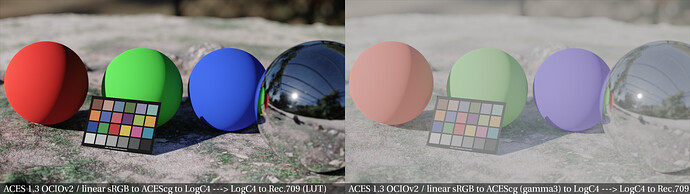

I have a render that I put through the LUT version of v035, and I noticed magenta where there should only be blue in the image.

The blue sphere shows a magenta highlight, and with a lifted gamma applied before the LUT the whole sphere looks magenta.

The rendering was done in linear sRGB, and after a while I think I realised why this happens. The base color value is set to 0/0/0,800 in a principled shader. When the primaries are converted in Nuke from linear sRGB to ACEScg, the pure tristimulus values in the shader and in the render take on a new meaning as EXR values in ACEScg.

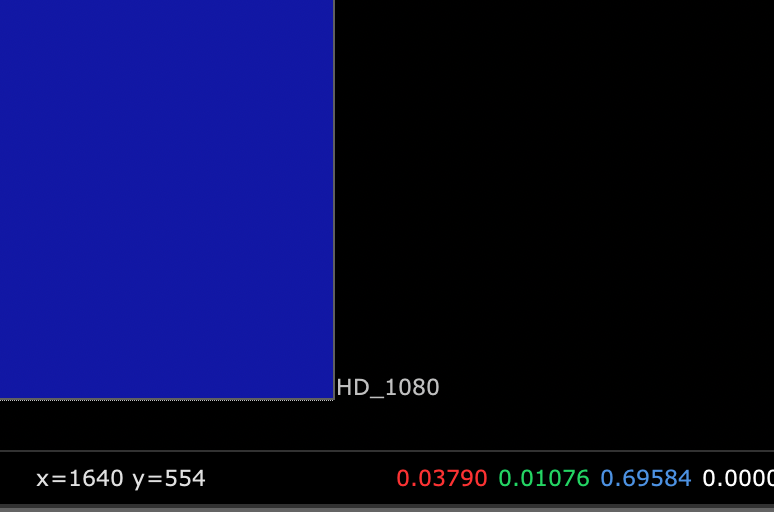

The linear sRGB 0 / 0 / 0,800 value turns to

in ACEScg.
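To make the conversion step concrete, here is a minimal sketch of the primaries conversion for the shader value. It assumes the commonly quoted Bradford-adapted linear sRGB (D65) to ACEScg (AP1, D60) matrix, rounded to six decimals; I have not checked that Nuke applies exactly these coefficients, and the names `SRGB_TO_ACESCG` and `srgb_linear_to_acescg` are only made up for this example.

```python
# Assumed matrix: linear sRGB (D65) -> ACEScg / AP1 (D60), Bradford adaptation,
# rounded to six decimals. Nuke's own conversion may differ in the last digits.
SRGB_TO_ACESCG = (
    (0.613097, 0.339523, 0.047379),
    (0.070194, 0.916354, 0.013452),
    (0.020616, 0.109570, 0.869815),
)


def srgb_linear_to_acescg(rgb):
    """Multiply a linear sRGB triplet by the 3x3 matrix above."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in SRGB_TO_ACESCG)


# Base color from the principled shader (0/0/0,800 in linear sRGB).
shader_blue = (0.0, 0.0, 0.8)

# Case 1: proper primaries conversion to ACEScg.
converted = srgb_linear_to_acescg(shader_blue)

# Case 2: the same numbers simply interpreted as ACEScg (no conversion).
reinterpreted = shader_blue

print("converted to ACEScg:   ", [round(v, 4) for v in converted])
print("interpreted as ACEScg: ", list(reinterpreted))
```

Under this assumed matrix the converted value is no longer a pure primary: small positive red and green components appear, with red larger than green. When the values are only interpreted as ACEScg, the triplet stays a pure blue primary, which would fit the observation that the sphere stays blue in that case.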

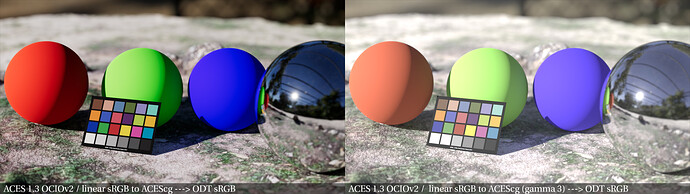

If I simply interpret the EXR rendering as ACEScg, everything becomes more colourful and wrong, but the blue sphere stays blue in the output.

I wonder if this also happens with some of the camera footage when it is converted from the camera color space to the working space.

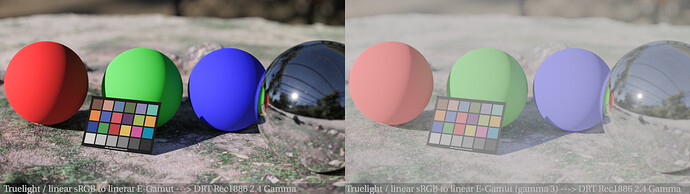

Just for comparison I rendered out some more view transforms from the same rendering:

I wonder what ARRI and T-Cam are doing differently, since with them I end up with a blue sphere, as I intended to render in this case.

Actually, the HDR versions look quite different. I will post them as soon as I can output an HDR version from v035.