Note: The v20 version has a broken gamut mapper, and the rendering of certain colors (especially the more saturated ones) in the images above is not representative of the normal gamut mapping.

Since last week’s meeting I have been thinking about how the current CAM DRT could be taken in a direction where it retains saturation for purer colors in the highlights while still keeping a typical photographic rendering there. The reason the current DRT doesn’t achieve that is that the highlight desat affects all colors equally.

So I made a version of the CAM DRT with a new chroma compression mode that varies the compression based on the purity of the color, with the goal that purer colors are compressed less than less pure ones. This allows the DRT to reach highly saturated colors in the highlights while still having a “path-to-white”. The implementation is very simple: it’s just a lerp between no compression at all and the user-defined amount of compression. Hue is taken into account too. It all happens in JMh. When enabled, it overrides the highlight desat.
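To make the lerp idea concrete, here is a rough Python sketch. It is not the code from the Nuke script. The purity measure (M divided by a hypothetical `max_M` for the given J and h), the function names, and the treatment of the user amount as a simple scale on M are all assumptions for illustration. The hue dependence is left out entirely.

```python
def lerp(a, b, t):
    """Linear interpolation from a (t=0) to b (t=1)."""
    return a + (b - a) * t


def purity_weighted_compress(J, M, h, user_compression, max_M):
    """Compress M less for purer colors (illustrative sketch only).

    user_compression: assumed to be a 0..1 scale-down amount set by the user.
    max_M: hypothetical maximum colorfulness used to normalize purity;
           how the actual node estimates purity is not described in the post.
    """
    # Assumed purity measure: normalized colorfulness, clamped to 0..1.
    purity = min(max(M / max_M, 0.0), 1.0) if max_M > 0.0 else 0.0

    # Lerp between the user-defined compression (purity 0)
    # and no compression at all (purity 1).
    amount = lerp(user_compression, 0.0, purity)

    # J and h pass through unchanged; only M is scaled.
    return J, M * (1.0 - amount), h


if __name__ == "__main__":
    for M in (10.0, 50.0, 100.0):
        print(M, purity_weighted_compress(50.0, M, 90.0, 0.5, 100.0))
```

With these assumptions a color at the maximum colorfulness is left untouched, while low-purity colors receive close to the full user-defined compression, which is what keeps the “path-to-white” for less pure colors.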

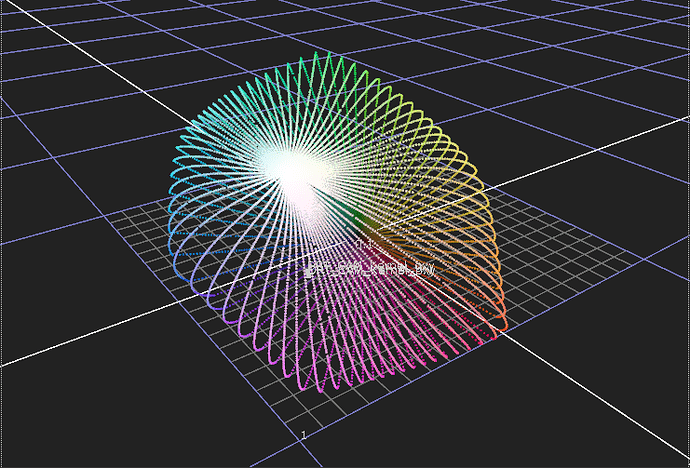

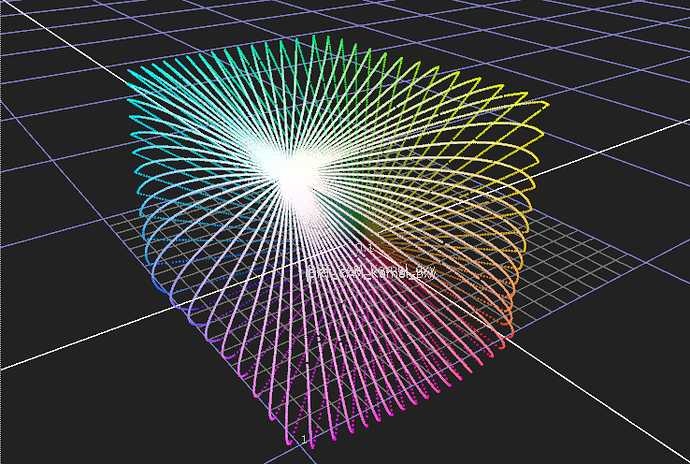

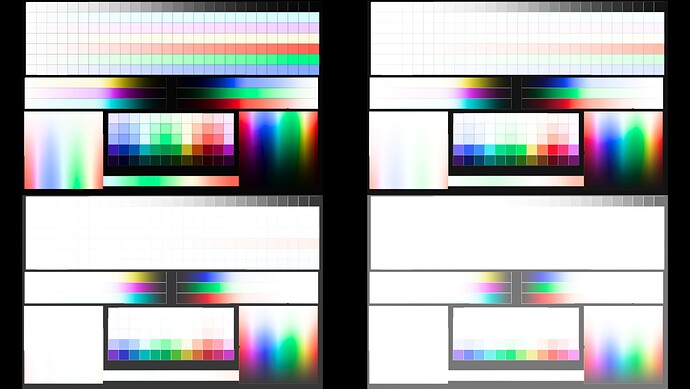

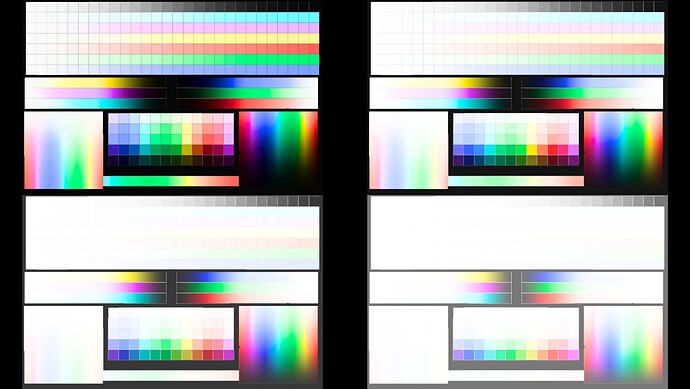

The difference is very clear when looking at the display cube:

highlight desat (at 1.0):

chroma compress:

The current version is tuned specifically for ZCAM and not for Hellwig. The reason is that Hellwig is causing me a lot of trouble, and most images have white pixels (NaNs most likely) in them. While the compression works with Hellwig too, none of the internal values have been tuned for it.

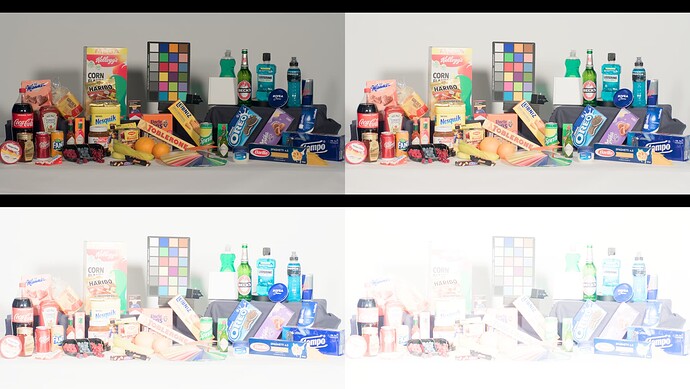

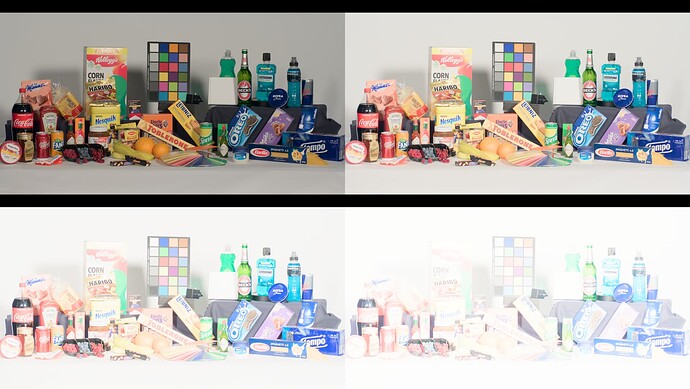

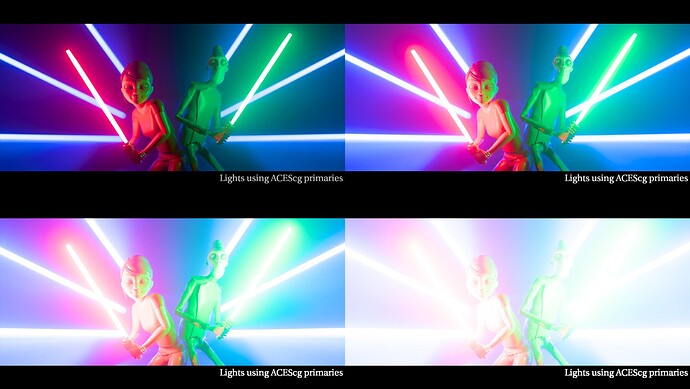

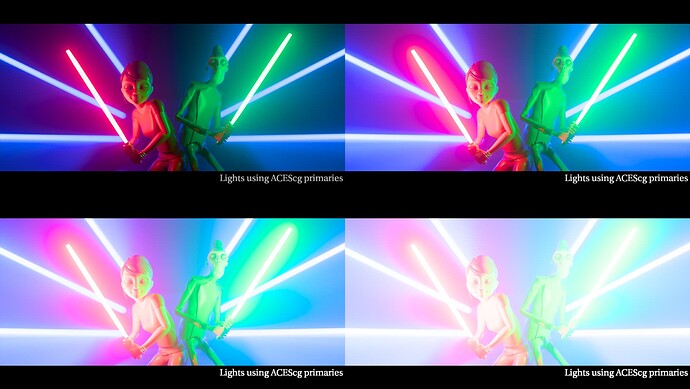

The following images compare ZCAM Candidate C and CAM DRT v20 with ZCAM (and fixed gamut mapper) and this compression mode. Each frame includes 4 exposures: 0, +2, +4 and +6. The first frame is Candidate C, the second is CAM DRT v20+compression.

The difference is noticeable. Purer yellows, cyans and magentas could easily be retained even longer if wanted. Unfortunately, the gamut mapper prevents getting any more saturated blue or red, even when no compression at all is applied to those colors. Something to look at there…

This version is available on GitHub: CAM_DRT_v020_pex.nk on the main branch of priikone/aces-display-transforms.