Hi Stu,

Thanks for sharing the renderings from your blog; there is always a lot to learn from them.

I was not aware that you had this blog, or of this article on it.

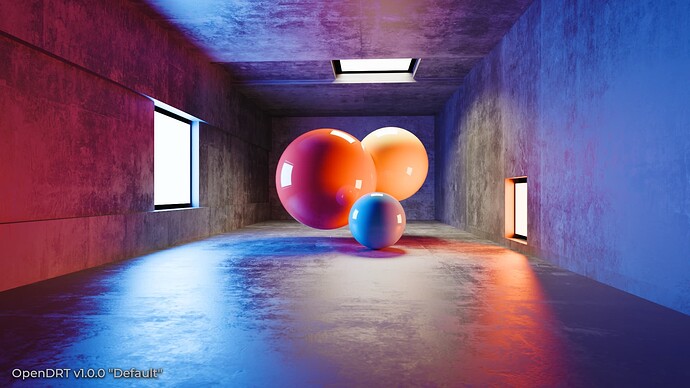

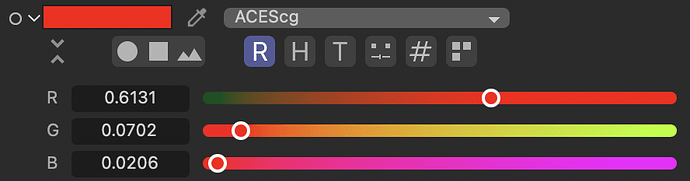

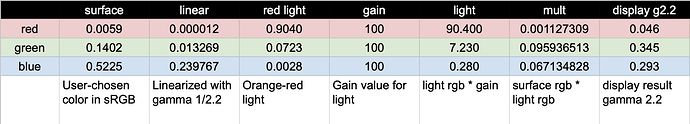

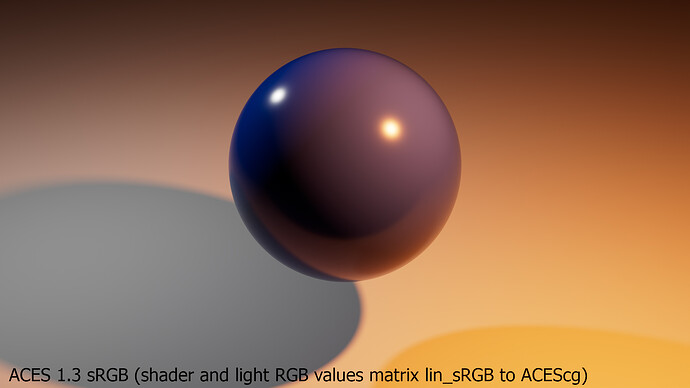

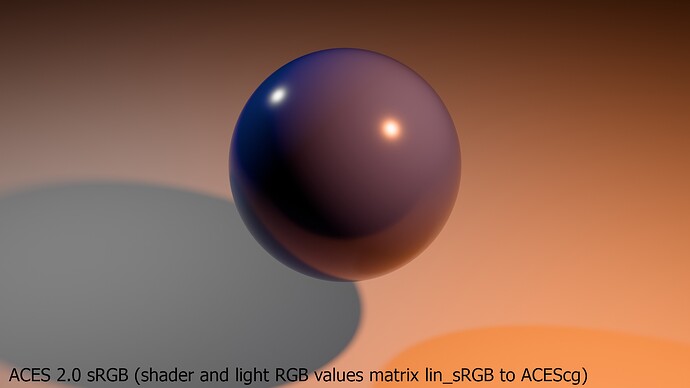

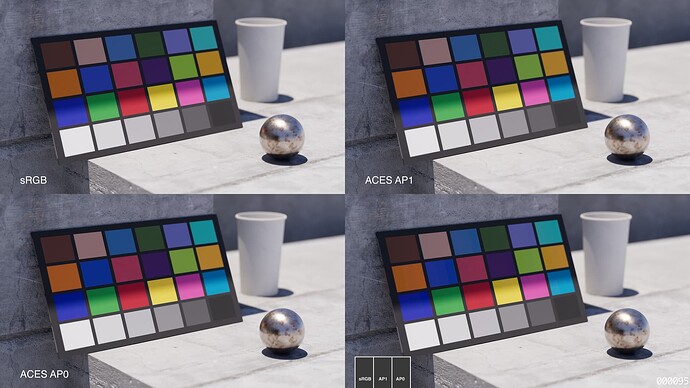

Image 1:

With a bit of time on my hands, I successfully re-created your experiment in Blender (Standard sRGB).

A ground plane, a blue ball, a white light and an orange light.

It does not look as fancy as yours, but I think it works just as well.

The orange lit side of the blue ball looks greenish.

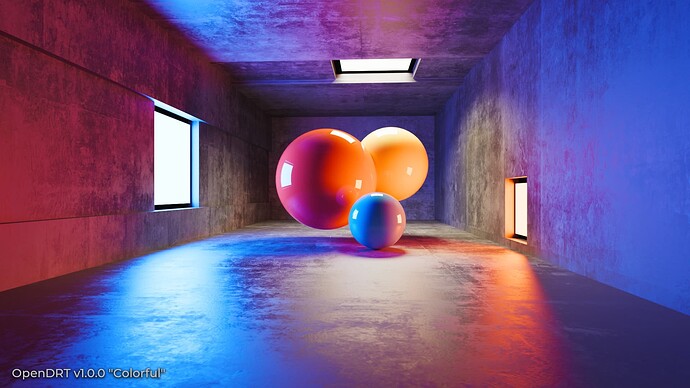

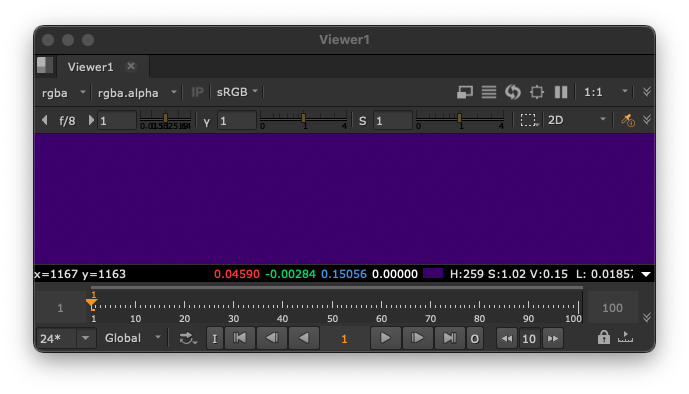

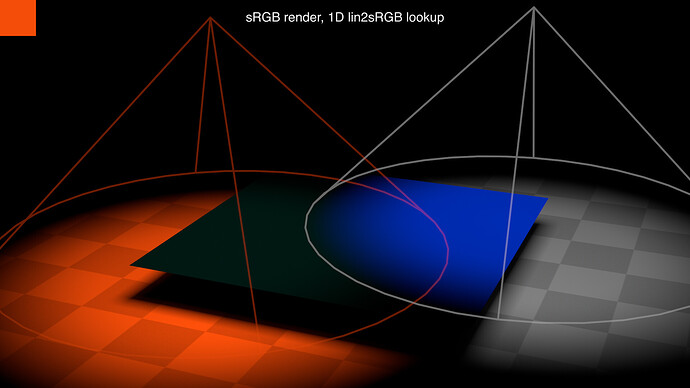

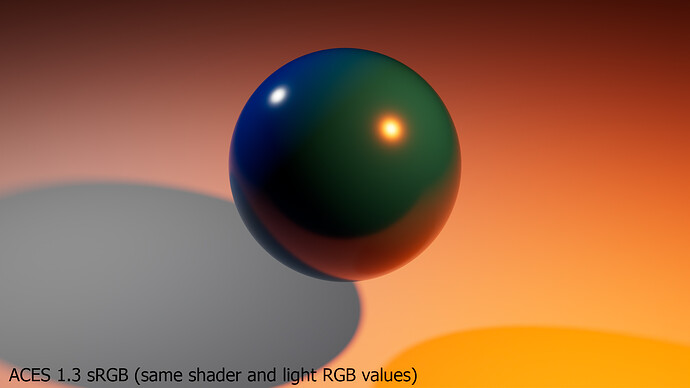

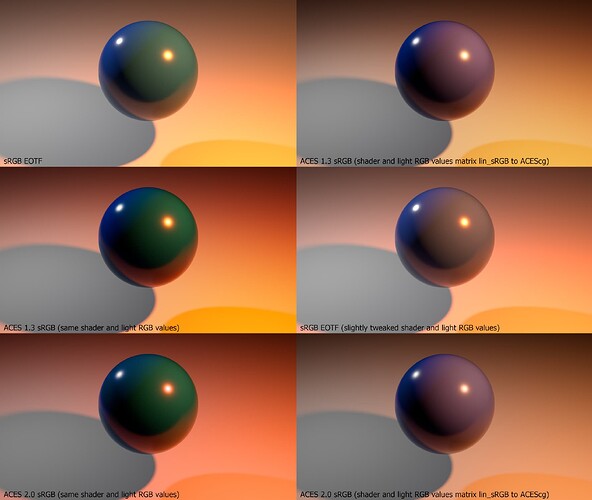

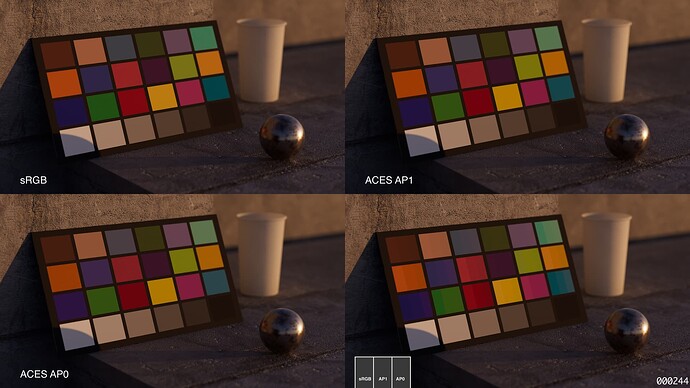

Image 2:

For the Blender ACES 1.3 version I converted the blue ball shader values from linear-sRGB to ACEScg (via Nuke, as Blender does not offer an internal way to do so as far as I know).

I converted the RGB values of the orange light “color” as well.
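For anyone who wants to check the conversion without Nuke, here is a minimal sketch of the same step. It assumes the commonly quoted, Bradford-adapted linear-sRGB to ACEScg matrix with rounded coefficients, so treat the numbers as an approximation of what Nuke or OCIO apply, not as their exact implementation. The function and variable names are made up for this example; the blue ball albedo is the one quoted further down in this post.

```python
# Linear-sRGB (D65) -> ACEScg (AP1) matrix, Bradford-adapted, rounded to 6 decimals.
# Assumption: these are the commonly published values, not read from a config file.
SRGB_TO_ACESCG = (
    (0.613097, 0.339523, 0.047379),
    (0.070194, 0.916354, 0.013452),
    (0.020616, 0.109570, 0.869815),
)


def srgb_linear_to_acescg(rgb):
    """Multiply a scene-linear sRGB triplet by the 3x3 matrix."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in SRGB_TO_ACESCG)


# Blue ball albedo in linear-sRGB, as quoted later in this post.
blue_ball_srgb = (0.013, 0.089, 0.48)
blue_ball_acescg = srgb_linear_to_acescg(blue_ball_srgb)

# Prints roughly 6.1% red, 8.9% green and 43% blue.
print([round(c, 3) for c in blue_ball_acescg])
```

The result lands on the same values I got out of Nuke, so the colour stays the same and only the numbers that describe it change.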

I see the same changes happen as in your images.

As @nick explained, and as I understand it, in the first example (Image 1) we operate close to a boundary with our RGB values when they get displayed, and therefore the side of the blue sphere that reflects the orange light turns greenish. It’s math, as you showed. Is that about right?

And by converting our shader and light values from linear-sRGB to ACEScg we get the second result (Image 2), because we “operate” in a bigger working colour space. Is that right too?

The side of the blue sphere that reflects the orange light shifts more towards magenta.

Blender does not have a “color managed” colorpicker, something that Cinema4D and other DCC apps have. That is a disadvantage, but it actually makes the next experiments easier.

I still would like to have a colorpicker that “tells” me:

“With these values you are inside the sRGB gamut. Now you are entering P3 or Rec.2020, so you are outside the sRGB gamut, which is your chosen display output.”
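The core of such a check is small. Below is a rough sketch of what I have in mind, not how any existing colorpicker works: convert the ACEScg triplet to linear-sRGB and see whether any component goes negative. The matrix is the rounded inverse of the usual Bradford-adapted sRGB to ACEScg matrix, and `inside_srgb_gamut` is a hypothetical helper name.

```python
# ACEScg (AP1) -> linear-sRGB, rounded to 6 decimals.
# Assumption: inverse of the commonly published sRGB -> ACEScg matrix.
ACESCG_TO_SRGB = (
    (1.705051, -0.621792, -0.083259),
    (-0.130256, 1.140805, -0.010548),
    (-0.024003, -0.128969, 1.152972),
)


def acescg_to_srgb_linear(rgb):
    """Express an ACEScg triplet in linear-sRGB."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in ACESCG_TO_SRGB)


def inside_srgb_gamut(acescg_rgb, tolerance=1e-6):
    """True if no sRGB component is negative.

    Only negative components are checked: values above 1.0 are about
    brightness in a scene-linear workflow, not about chromaticity.
    """
    return all(c >= -tolerance for c in acescg_to_srgb_linear(acescg_rgb))


print(inside_srgb_gamut((0.18, 0.18, 0.18)))    # mid grey
print(inside_srgb_gamut((0.0, 1.0, 0.0)))       # pure AP1 green primary
print(inside_srgb_gamut((0.013, 0.089, 0.48)))  # unconverted blue ball values read as ACEScg
```

The last line is the Image 3 case described further down: the unconverted blue ball values, read as ACEScg, need a negative red component in sRGB. A real picker would of course do the same test against P3 and Rec.2020 as well.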

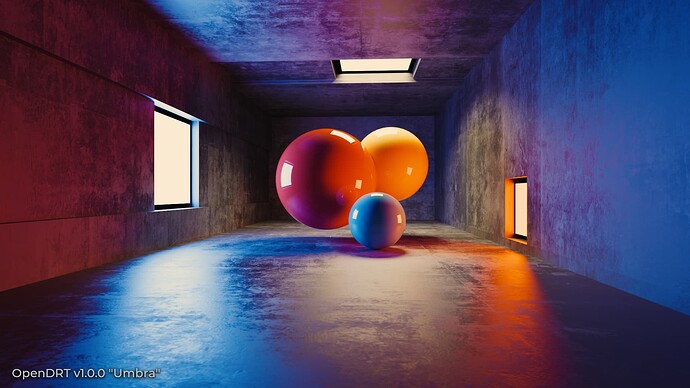

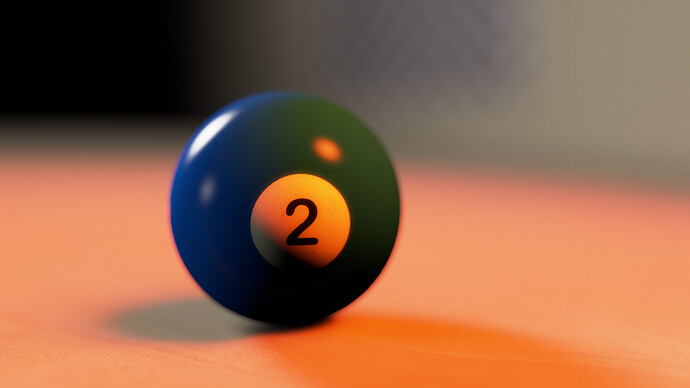

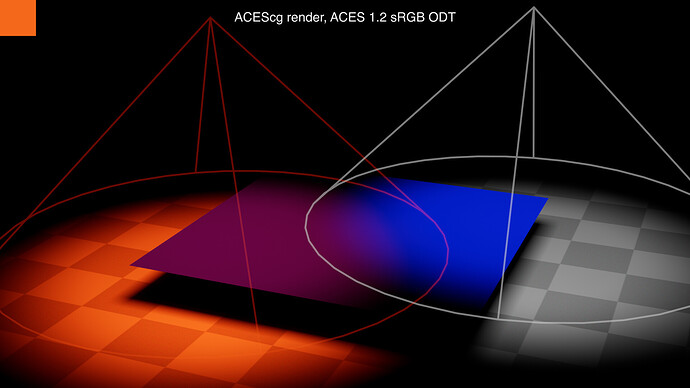

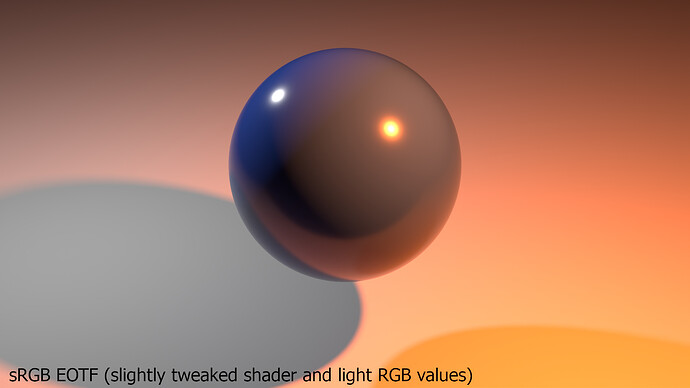

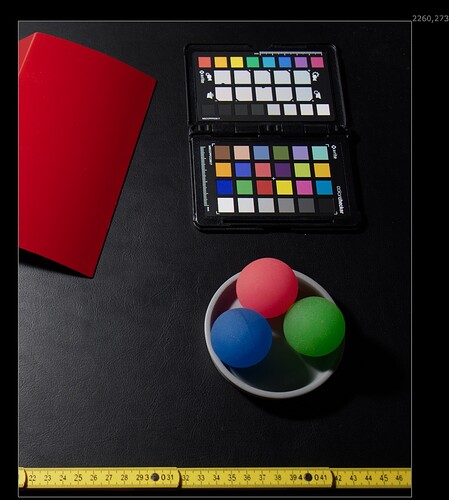

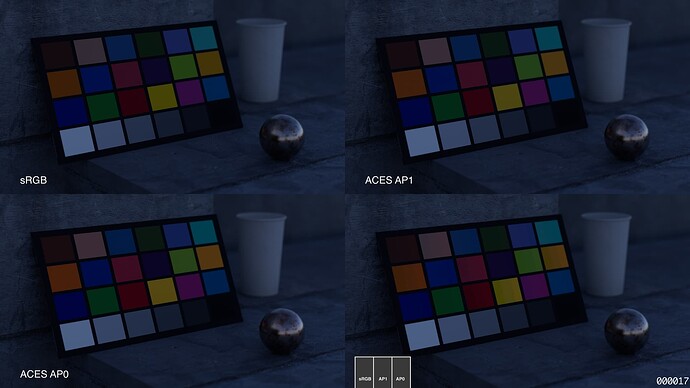

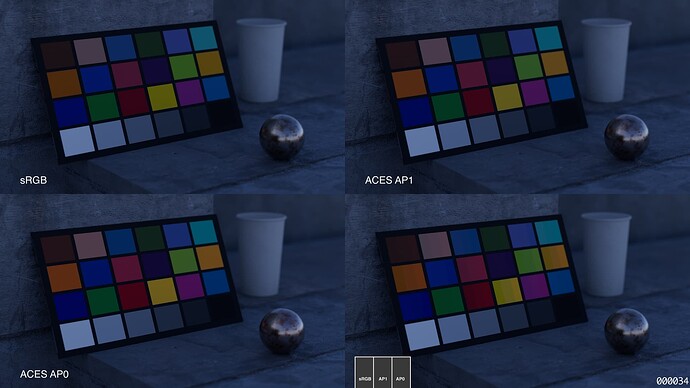

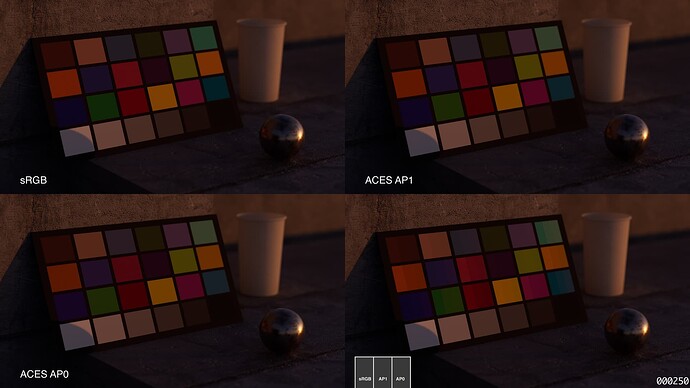

Image 3:

Here are the first shader values again for the blue sphere and for the orange light.

Just this time I do not convert them and start Blender with the ACES 1.3 OCIO config.

As far as I understand, I am now operating on the edge of my working colourspace again, but this time I assign it the meaning “you are ACEScg” instead of “you are linear-sRGB” as in Image 1.

I would say Images 1 and 3 look somewhat similar. They differ because of the different approaches to mapping the RGB values in the EXR for display on an sRGB monitor.

Comparing the RGB pixel values in the same Nuke script, with both colourspaces set to “RAW”, shows me that the rendered pixels are actually identical.

In Image 1 I assign the EXR file the meaning linear-sRGB and I view it through a simple inv.EOTF/EOTF on the monitor.

In Image 3 I assign the EXR file the meaning ACEScg and I view it through the ACES 1.3 sRGB ODT on the monitor.
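To make the Image 1 viewing path concrete, here is a minimal sketch of what I mean by a “simple inv.EOTF”. It assumes the piecewise sRGB encoding from IEC 61966-2-1 with a hard clip to the display range; I have not checked that Blender's Standard view does exactly this. The ACES 1.3 sRGB ODT used for Image 3 is far more involved and is not reproduced here. The function names are made up for this example.

```python
def srgb_encode(linear):
    """Piecewise sRGB encoding of one scene-linear channel.

    Values are clipped to 0..1 first, which is where the boundary
    behaviour comes from: anything outside the range is simply cut off.
    """
    x = min(max(linear, 0.0), 1.0)
    if x <= 0.0031308:
        return 12.92 * x
    return 1.055 * x ** (1.0 / 2.4) - 0.055


def view_as_linear_srgb(rgb):
    """Encode a scene-linear RGB pixel for an sRGB display, channel by channel."""
    return tuple(srgb_encode(c) for c in rgb)


# A pixel with one channel above 1.0 and one below 0.0 loses both after the clip.
print(view_as_linear_srgb((2.5, 0.18, -0.1)))
```

Every channel is handled on its own, so a bright pixel with unequal channels changes its channel ratios, and with that its hue, as soon as one channel hits the clip.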

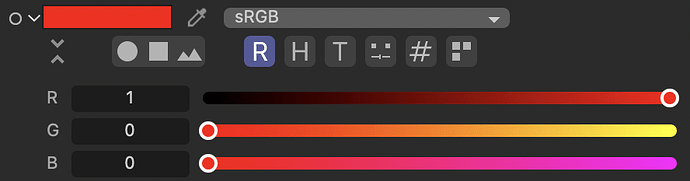

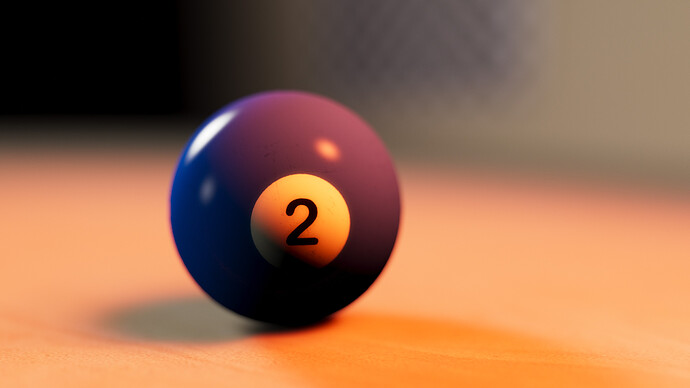

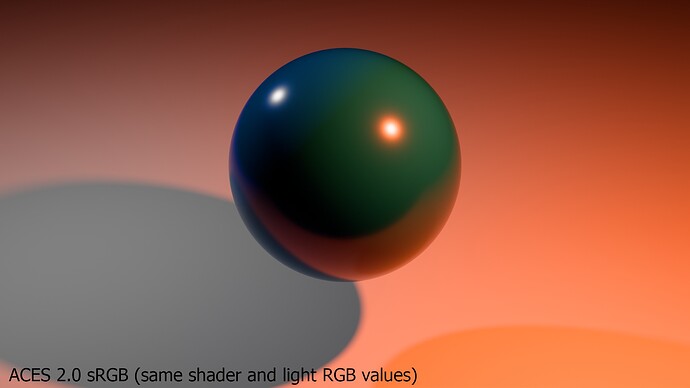

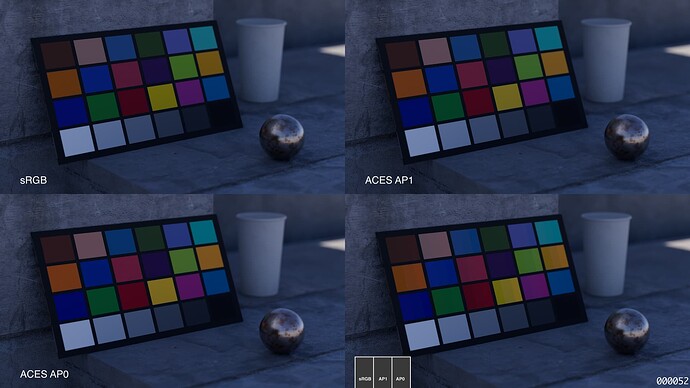

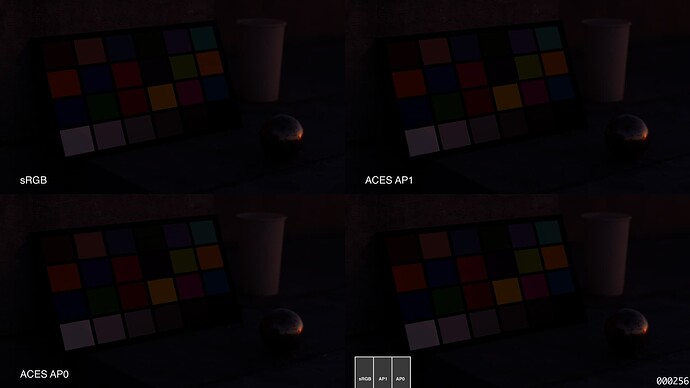

Next is Image 4 (same OCIO config as Image 1, but with slight adjustments to the shader and light RGB values). In my view, this resembles Image 2 a bit more again.

I end up with four images of a blue ball lit by two lights.

The main things that changed the look of the images are the changed ratios of the RGB channels in the shader and light, and assigning the RGB image data a different meaning: a different working colourspace and a different viewing pipeline.

The PBR world has some strange rules:

The albedo of the “virtual physical” blue ball that “reflects” 1.3% red, 8.9% green and 48% blue of the RGB lights in the linear-sRGB working colourspace changes to 6.1% red, 8.9% green and 43% blue in ACEScg after an OCIO colourspace conversion. The blue ball changed its “virtual physical” properties when changing the working colourspace.
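A small sketch of why this matters for the render: a per-channel multiply of light and albedo gives a different result depending on the working colourspace, even when both inputs were converted correctly. The matrix is the rounded, Bradford-adapted linear-sRGB to ACEScg matrix (an assumption, not read from the OCIO config), and the orange light values are hypothetical, not the ones used in my renders.

```python
# Linear-sRGB -> ACEScg, rounded to 6 decimals (assumed, commonly published values).
SRGB_TO_ACESCG = (
    (0.613097, 0.339523, 0.047379),
    (0.070194, 0.916354, 0.013452),
    (0.020616, 0.109570, 0.869815),
)


def to_acescg(rgb):
    """Convert a linear-sRGB triplet to ACEScg."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in SRGB_TO_ACESCG)


def multiply(a, b):
    """Per-channel product, the basic 'light times albedo' step of a renderer."""
    return tuple(x * y for x, y in zip(a, b))


albedo_srgb = (0.013, 0.089, 0.48)  # blue ball, from this post
light_srgb = (1.0, 0.5, 0.1)        # hypothetical orange light

# Path A: shade in linear-sRGB, then express the result in ACEScg.
shaded_in_srgb = to_acescg(multiply(albedo_srgb, light_srgb))

# Path B: convert both inputs first, then shade in ACEScg.
shaded_in_acescg = multiply(to_acescg(albedo_srgb), to_acescg(light_srgb))

print([round(c, 4) for c in shaded_in_srgb])
print([round(c, 4) for c in shaded_in_acescg])
```

With these example values the red channel of path B comes out at nearly twice that of path A, although both describe “the same” ball under “the same” light. A matrix conversion and a per-channel multiply do not commute, so the choice of working colourspace changes the rendered pixels.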

To bring the conversation back to ACES 2.0, I also rendered the same two images directly in Blender with the latest OCIOv2 config for ACES 2.0, as Blender is one of the few DCC apps that reads this config at the moment. Right now you must use the Blender 4.5 alpha version, because OCIOv2 config support is broken in the current Blender 4.4.x release and won’t be fixed until version 4.5 is released, as far as I understand.

Finally, here are all six images together to make it easier to compare them at a glance.

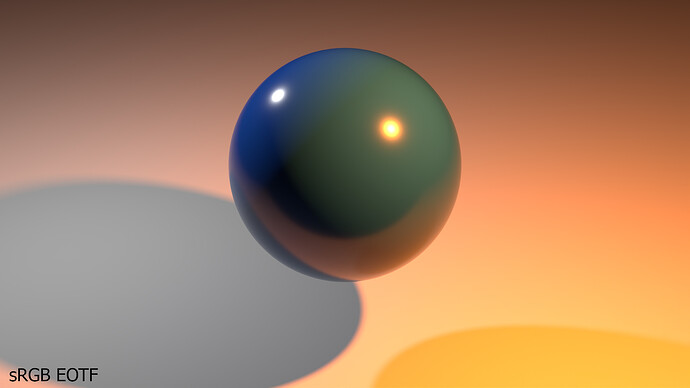

You showed on your blog a photo of a blue object lit by an orange light to prove your point that the blue box does not turn greenish.

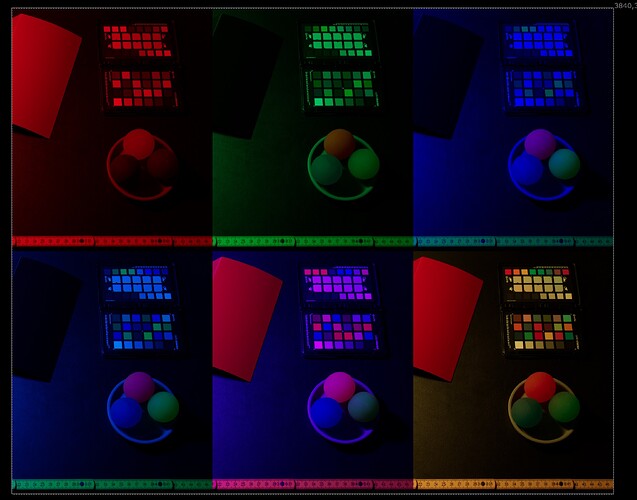

I also did a similar test a while back, but I used a HomeKit LED bulb, changed the colours and took lots of photos. The resulting images are rendered out with the ACES 1.3 sRGB ODT. It’s amazing how strange the objects look under narrow-band lighting.