We’ve eliminated the restriction on the number of archivedPipeline and lookTransform elements.

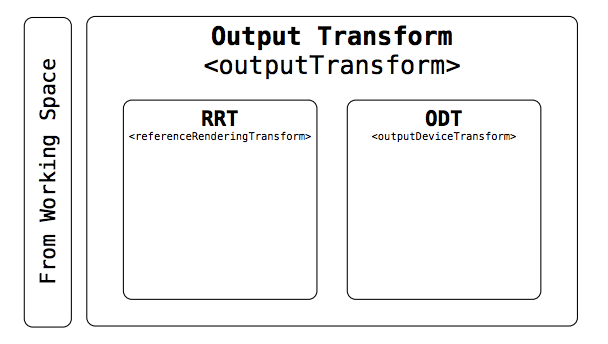

The outputTransform needs to contain either one transformId, or one referenceRenderingTransform and one outputDeviceTransform.
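The rule above can be checked mechanically. Below is a minimal Python sketch using the standard library's ElementTree. It matches child elements by local name, so it ignores namespaces, and it assumes the tag names are exactly transformId, referenceRenderingTransform and outputDeviceTransform as written in this thread. `output_transform_is_valid` is a hypothetical helper, not part of any AMF tooling.

```python
import xml.etree.ElementTree as ET

# Child elements that decide which form an outputTransform takes.
FORM_TAGS = ("transformId", "referenceRenderingTransform", "outputDeviceTransform")


def local_name(tag):
    """Strip a '{namespace}' prefix from an ElementTree tag."""
    return tag.rsplit("}", 1)[-1]


def output_transform_is_valid(elem):
    """True if elem has exactly one transformId, or exactly one
    referenceRenderingTransform plus exactly one outputDeviceTransform.
    Other children (e.g. descriptions) are ignored."""
    counts = dict.fromkeys(FORM_TAGS, 0)
    for child in elem:
        if isinstance(child.tag, str) and local_name(child.tag) in counts:
            counts[local_name(child.tag)] += 1
    single_id = counts == {"transformId": 1, "referenceRenderingTransform": 0, "outputDeviceTransform": 0}
    rrt_and_odt = counts == {"transformId": 0, "referenceRenderingTransform": 1, "outputDeviceTransform": 1}
    return single_id or rrt_and_odt


print(output_transform_is_valid(ET.fromstring(
    "<outputTransform><transformId>placeholder</transformId></outputTransform>")))  # True
```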

@Thomas_Mansencal I’ve updated the regex for the transform names. Can you please take a look and confirm they make sense against the versioning document?

Hi Alex.

Yes, filename, sequence and Id were my original proposal. However, neither filename nor sequence should ever contain paths at all. Paths change significantly throughout any camera/post/mastering/distribution workflow, and they are frequently changed by data-transfer and data-wrangling practices that don’t necessarily employ ACES-aware software.

Think of an Aspera file-transfer workflow that moves content from an audio post facility’s watch folder into a mastering facility’s dropbox. Or of a versioning workflow based on subtitling software.

In scenarios like the two above (and many others), paths would be changed by software that most certainly doesn’t understand AMF (and never will). Who, then, would be expected to keep the paths in the AMF consistent when it also moves across filesystems?

Paths should be mandated by specs.
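A tool can enforce the rule argued above, that filename and sequence values never carry a directory component, before it writes an AMF. Below is a minimal Python sketch. `is_bare_filename` is a hypothetical helper. It rejects POSIX and Windows separators, Windows drive prefixes, and URIs that contain a path.

```python
import ntpath
import posixpath


def is_bare_filename(value):
    """True if value names a file without any directory or drive component."""
    if not value or value in (".", ".."):
        return False
    # posixpath catches '/', ntpath catches '\\', '/' and drive prefixes like 'C:'.
    return posixpath.basename(value) == value and ntpath.basename(value) == value


print(is_bare_filename("Thomas-Mansencal_#######.exr"))  # True
print(is_bare_filename("/mnt/ocf/A001C003.mov"))         # False
```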

Happy to discuss briefly on the phone if necessary.

So should filename just be the name of the file sans path? Would anyURI still make sense but with guidance in the spec that it be just the filename?

Hi Alex.

Path linking should follow these two scenarios:

1. Neither filename/sequence/uuid nor clipID elements: the AMF (itself named with an arbitrary filename) shall stay with the footage it shares the same folder with. It is just that simple. This holds for as long as one keeps the AMF as a sidecar file in the same path.
2. filename/sequence, but always including some sort of UID: as usual, such UIDs may be a mixture of one or more among timecodes, reel names, tape names, UUIDs, timestamps, etc.

In a theatrical DI workflow, footage will be linked to its AMF using the former scenario when it is shot on-set and near-set. At its first “ingestion” into fully-fledged ACES-aware software (data management, conforming, editorial, rushes, VFX pulling, etc.), a new AMF is produced for every particular clip, linking to it using the latter scenario.
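The latter scenario can be sketched as a lookup keyed on a composite UID rather than on any path. Below is a minimal Python sketch. It assumes a hypothetical `ClipUID` made of a reel name, a start timecode and a bare filename. Real UIDs may mix any of the metadata listed above, and the AMF location shown is a placeholder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClipUID:
    """Hypothetical composite UID; frozen so it can be used as a dict key."""
    reel_name: str
    start_timecode: str
    filename: str  # bare filename, never a path


def build_index(amf_records):
    """Map each ClipUID to the locations of the AMFs that reference it.

    amf_records is an iterable of (amf_location, ClipUID) pairs. The AMF
    location may be any URI and plays no part in the match.
    """
    index = {}
    for location, uid in amf_records:
        index.setdefault(uid, []).append(location)
    return index


def find_amfs(clip_uid, index):
    """Return the AMF locations linked to this clip (empty list if none)."""
    return index.get(clip_uid, [])


clip = ClipUID("A001", "01:00:00:00", "A001C003.mov")
index = build_index([("https://example.com/amf/look_v2.amf", clip)])
print(find_amfs(clip, index))  # ['https://example.com/amf/look_v2.amf']
```

Moving the footage or the AMF to another folder or filesystem leaves the UID, and therefore the link, unchanged.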

This approach paves the way for workflows where the AMFs and the footage are stored in physically and logically different URIs. For example, footage is ingested into an AWS Snowball, while the color metadata are pushed to a Google Drive or Box cloud folder, together with proxies/rushes, for sharing with the DI lab.

No, Thomas.

The binding is not between the AMF’s filename and the sequence’s (or any other footage’s) filenames.

The AMF may potentially have any filename and be located in any path, even in a completely different filesystem from the one where its referenced footage is. It is by means of a UID, based on one or several among filename/sequence/uuid and other metadata, that the bond is maintained.

In the case of frame-per-file sequences, this may happen by specifying the frame-sequence filename (as per slides #7-#8 of my original PPT presentation on ACESclip) within the AMF’s sequence element, where the frame sequence’s basename is kept separate from the frame index.

However, good practice would be to give the AMF itself a name similar to that of the footage it references. For a frame sequence, this ultimately means naming it after the sequence’s basename without the frame index (and, optionally, without separator symbols between the basename and the frame index).

For example, for an OpenEXR sequence Thomas-Mansencal_[0000624-0032768].exr, the AMF may just be named Thomas-Mansencal.amf (note that the underscore ‘_’ is dropped too). Yet, the only real link between the EXR sequence and the AMF is the presence of the following XML element in the AMF:

```xml
<aces:sequence min="624" max="32768" idx="#">Thomas-Mansencal_#######.exr</aces:sequence>
```
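The sequence-element link above can be checked mechanically. Below is a minimal Python sketch. It assumes that the run of idx characters encodes a zero-padded frame number whose width equals the run length, and that min and max are inclusive. `frame_in_sequence` and `suggested_amf_name` are hypothetical helpers. The second one only mirrors the naming convention suggested here, which is good practice rather than the link itself.

```python
import re


def _index_run(pattern, idx):
    """Locate the run of frame-index placeholder characters in pattern."""
    run = re.search(re.escape(idx) + "+", pattern)
    if run is None:
        raise ValueError("pattern has no frame-index placeholder")
    return run


def frame_in_sequence(filename, pattern, min_frame, max_frame, idx="#"):
    """Return the frame number if filename belongs to the sequence, else None.

    The number of idx characters gives the zero-padded width of the frame
    index; everything else in pattern must match literally.
    """
    run = _index_run(pattern, idx)
    width = run.end() - run.start()
    regex = (re.escape(pattern[:run.start()])
             + r"(\d{%d})" % width
             + re.escape(pattern[run.end():]))
    match = re.fullmatch(regex, filename)
    if match is None:
        return None
    frame = int(match.group(1))
    return frame if min_frame <= frame <= max_frame else None


def suggested_amf_name(pattern, idx="#"):
    """Basename without frame index or trailing separators, plus '.amf'."""
    run = _index_run(pattern, idx)
    return pattern[:run.start()].rstrip("_.-") + ".amf"


PATTERN = "Thomas-Mansencal_#######.exr"
print(frame_in_sequence("Thomas-Mansencal_0000624.exr", PATTERN, 624, 32768))  # 624
print(suggested_amf_name(PATTERN))  # Thomas-Mansencal.amf
```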

Hi all,

Thank you for your comments and feedback thus far. We have been working on changes to the spec based on feedback and have a new draft ready for review below:

S_2019_00x_AMF_DRAFT_2019.12.19.pdf (978.5 KB)

Summary of changes:

- <author> element
- <lookTransformStackPosition> attribute
- <lookTransform> (singular) elements rather than <lookTransforms> (plural) with sub-elements
- <aces:file> type to xs:anyURI within <clipID>
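With singular <lookTransform> elements and no fixed upper bound, a reader only needs to collect them in document order. Below is a minimal Python sketch using the standard library's ElementTree. It assumes that look transforms are direct children of the pipeline element and that each one wraps a transformId, and it matches elements by local name. The sample reuses the namespace name quoted in §6 of the draft and is illustrative only. It has not been validated against the schema.

```python
import xml.etree.ElementTree as ET

NS = "urn:acesMetadata:acesMetadataFile:v1.0"  # namespace name quoted in the draft's §6

SAMPLE = f"""<aces:pipeline xmlns:aces="{NS}">
  <aces:lookTransform><aces:transformId>LMT.A</aces:transformId></aces:lookTransform>
  <aces:lookTransform><aces:transformId>LMT.B</aces:transformId></aces:lookTransform>
  <aces:lookTransform><aces:transformId>LMT.C</aces:transformId></aces:lookTransform>
</aces:pipeline>"""


def look_transforms(pipeline):
    """Direct lookTransform children of a pipeline, in document (application) order."""
    return [
        child for child in pipeline
        if isinstance(child.tag, str) and child.tag.rsplit("}", 1)[-1] == "lookTransform"
    ]


def look_transform_ids(pipeline):
    """transformId text of each lookTransform, preserving order; no count limit."""
    return [lt.findtext(f"{{{NS}}}transformId") for lt in look_transforms(pipeline)]


print(look_transform_ids(ET.fromstring(SAMPLE)))  # ['LMT.A', 'LMT.B', 'LMT.C']
```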

The XML schema and examples have been updated here (thanks to @Alexander_Forsythe):

https://github.com/aforsythe/amf

Please have a look and let us know if you have any comments or suggestions.

@walter.arrighetti you raise good points about the potential uses and misuses of <clipID>, but I think that is largely an implementation question. We feel the current <clipID> in the spec covers the use cases you describe. Please join the AMF implementation calls if you can!

Thanks,

Chris

I want to bring up a comment we had in the past.

Unbounded existence of nodes is difficult in some implementations, and they preferred a limit, even if large. As always, there are implementations that could be GPU- or hardware-resource bound; not every use is a software app where you could consider infinite mallocs.

So I think you should discuss this one.

Jim

One could bring a modern GPU to a non-interactive frame rate, or even to a halt, with a single large analytical LMT implementing a complex model. So why even bother allowing a single one?

To give you two concrete examples, the bane of my existence these past 4 years has been length restrictions on Windows, i.e. a maximum path length of 260 characters and a maximum PATH variable length of 2048. Those date from the MS-DOS era, and I’m paying the price for them every day without any really easy way around them. I’m assuming that there were at least use cases or concrete system limits back then, when Microsoft engineers decided to put them in place.

In our case, those are arbitrary numbers that do not have any explicitly described and explained use cases or reasoning behind them, so they are the worst kind. Nobody will stack an infinite amount of LMTs and nobody will archive an infinite amount of pipelines, but if I need to stack 6 LMTs or archive 11 pipelines, I want to be able to do so.

If limit there must be, either a use case needs to accompany it or it must be set to a number with the likelihood for it to be an issue in the distant future close to null.

Premature optimisation really is the root of all evil.

Hello.

I just want to recap a couple of things I’d like to discuss during today’s meeting.

As per the W3C standard, XML namespaces can be used in two alternative ways:

1. A default namespace, declared with a plain xmlns attribute (like in all the Appendix examples). This means that all XML elements in the document (apart from those with a different explicit namespace, like cdl in the Appendix examples) are supposed to belong to the same namespace. This is the easiest choice for parsers, but it usually hurts interoperability should AMF be embedded in larger XML documents.
2. A prefixed namespace, declared with xmlns:prefix, whose scope starts from that very element downwards. In this case the namespace prefix must be used in every XML element that belongs to that namespace. This solution might be a bit more difficult for less flexible XML parsers, but it ensures maximum interoperability and protection against ambiguities.

I think it’s important to state that AMF parsers should be capable of understanding both namespacing practices, as they are both valid in W3C XML.

In fact, the §6 introduction refers to the namespace prefix amf as associated with the namespace name urn:acesMetadata:acesMetadataFile:v1.0, as per the latter alternative, whereas only the former alternative (one unprefixed namespace root) is used in the Appendix examples.
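Once parsed, both declaration styles produce identical qualified names, which is why a conforming parser can accept either one. Below is a minimal Python sketch using the standard library's ElementTree. The two tiny documents are illustrative and are not schema-valid AMFs. They reuse the namespace name quoted from §6 of the draft.

```python
import xml.etree.ElementTree as ET

AMF_NS = "urn:acesMetadata:acesMetadataFile:v1.0"  # namespace name quoted from §6

# The same content written with a default namespace and with a prefix.
DEFAULT_STYLE = f'<acesMetadataFile xmlns="{AMF_NS}"><amfInfo/></acesMetadataFile>'
PREFIX_STYLE = f'<amf:acesMetadataFile xmlns:amf="{AMF_NS}"><amf:amfInfo/></amf:acesMetadataFile>'


def qualified_tags(xml_text):
    """Every element tag as '{namespace}localname', in document order."""
    return [elem.tag for elem in ET.fromstring(xml_text).iter()]


def has_amf_info(xml_text):
    """Namespace-aware lookup that works whichever declaration style was used."""
    root = ET.fromstring(xml_text)
    return root.find("amf:amfInfo", {"amf": AMF_NS}) is not None


print(qualified_tags(DEFAULT_STYLE) == qualified_tags(PREFIX_STYLE))  # True
```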

As a corollary, please note that the <asc-cdl> element is part of the ASC CDL standard, so it should also be declared with an XML namespace ("cdl" is used in the Appendix examples), not just the <SOPNode> and <SatNode> elements. Moreover, if the additional lookTransformStackPosition element and/or the <lookTransformWorkingSpace> element are to be used under the <asc-cdl> element, they should be namespaced, because they’re not part of the ASC CDL standard. Doing otherwise makes a “strict” ASC CDL parser fail to parse that part.
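Namespacing the extension elements is what lets a strict ASC CDL consumer skip them. Below is a minimal Python sketch. It assumes the ASC CDL namespace name urn:ASC:CDL:v1.01 and the AMF namespace name quoted in §6 of the draft. The sample mirrors the arrangement proposed here and has not been validated against a schema. `cdl_children` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET

CDL_NS = "urn:ASC:CDL:v1.01"  # assumed ASC CDL namespace name
AMF_NS = "urn:acesMetadata:acesMetadataFile:v1.0"  # from the draft's §6

SAMPLE = f"""<cdl:asc-cdl xmlns:cdl="{CDL_NS}" xmlns:aces="{AMF_NS}">
  <aces:lookTransformWorkingSpace/>
  <cdl:SOPNode><cdl:Slope>1 1 1</cdl:Slope><cdl:Offset>0 0 0</cdl:Offset><cdl:Power>1 1 1</cdl:Power></cdl:SOPNode>
  <cdl:SatNode><cdl:Saturation>1</cdl:Saturation></cdl:SatNode>
</cdl:asc-cdl>"""


def cdl_children(parent):
    """Keep only children in the ASC CDL namespace; AMF extension elements are skipped."""
    prefix = "{" + CDL_NS + "}"
    return [c for c in parent if isinstance(c.tag, str) and c.tag.startswith(prefix)]


print([c.tag.rsplit("}", 1)[-1] for c in cdl_children(ET.fromstring(SAMPLE))])
```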

Another remark: the hash type could be defined using W3C algorithm identifiers (already employed in the XML digital signature standard, XML-DSIG, defined in https://www.w3.org/TR/xmldsig-core1/) rather than just “sha256”, “sha1” or “md5”.

I really mean the hash algorithm names, for example “http://www.w3.org/2001/04/xmlenc#sha256”.
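Mapping W3C algorithm identifiers to an actual digest is a small lookup. Below is a minimal Python sketch using hashlib. It covers only the three algorithms named in this post, and `digest` and its variants are hypothetical helpers. XML-DSig's own <DigestValue> element is typed base64Binary, so if that element were reused, the value would be base64 rather than the hexadecimal string shown in this post's <ds:DigestValue> example. The sketch provides both forms.

```python
import base64
import hashlib

# W3C algorithm identifiers mapped to hashlib algorithm names.
DIGEST_URIS = {
    "http://www.w3.org/2001/04/xmldsig-more#md5": "md5",
    "http://www.w3.org/2000/09/xmldsig#sha1": "sha1",
    "http://www.w3.org/2001/04/xmlenc#sha256": "sha256",
}


def digest(data, algorithm_uri):
    """Raw digest of data using the algorithm named by a W3C URI."""
    try:
        name = DIGEST_URIS[algorithm_uri]
    except KeyError:
        raise ValueError(f"unsupported digest algorithm: {algorithm_uri}") from None
    return hashlib.new(name, data).digest()


def digest_hex(data, algorithm_uri):
    """Lowercase hexadecimal form."""
    return digest(data, algorithm_uri).hex()


def digest_base64(data, algorithm_uri):
    """Base64 form, as XML-DSig's <DigestValue> (type base64Binary) expects."""
    return base64.b64encode(digest(data, algorithm_uri)).decode("ascii")


print(digest_hex(b"abc", "http://www.w3.org/2001/04/xmlenc#sha256"))
# ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad
```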

An even more interoperable option, for the customized <hash> element, would be to re-use the XML-DSIG <DigestMethod> element. This means using a ds prefix, like:

```xml
<hash xmlns:ds="http://www.w3.org/2000/09/xmldsig#">
  <ds:DigestMethod Algorithm="http://www.w3.org/2001/04/xmlenc#sha256"/>
  <ds:DigestValue>ffcd28772e244f9a3c6e9893f499f2b4f2f3313d292db51aeea4fd3f65f00d9</ds:DigestValue>
</hash>
```

I believe you may be working from an older version of the XSD at this point. The latest is both on my fork in the master branch and in the ampas/aces-dev repo, where the development history was squashed: https://github.com/ampas/aces-dev/tree/dev/formats/amf

The latest includes updates to the namespace, removal of the node, and removal of the lookTransformStackPosition. Also, all elements now explicitly declare the namespace.

Finally, we have updated the algorithm types to use the URIs specified by the W3C, but we decided not to import the <ds:DigestMethod> and <ds:DigestValue> tags from XML-DSIG.

@Alexander_Forsythe,

Thanks for the prompt reply.

Did you have a chance to read my previous post as well, the one on XML namespaces in general?

Sorry for not getting this feedback in earlier; hopefully it is not too late to be helpful. I have one larger critique of the LMT working space to start with, followed by a bunch of suggestions on rewording different bits of the spec.

6.3.19 aces:lookTransformWorkingSpace

I am struggling to find the original intention and goal of adding this feature, can I request someone highlight that for me? I follow the mechanisms, I’m just not clear on the intended value with providing this flexibility.

I’m pretty divided on this feature. Top pro: leave lin/log conversions up to the applications instead of the LUT. Top con: Interop nightmare web of doom.

I’ll briefly mention TB-2014-010 to get the pedantic problem out of the way. That document lays out a clear definition: “The input to an LMT is always a triplet of ACES RGB relative exposure values; its output is always a new triplet of ACES RGB relative exposure values.”

So, if AMF supports breaking out transformations in order to feed the LMT a non-ACES image, and to receive a non-ACES image from the LMT, it is technically in conflict with the LMT definition. If this is really what is wanted, then TB-2014-010 should be updated along with the release of AMF. I think it is important this is not overlooked, because we should fully expect software vendors to start implementing more LMT features now that they can be exchanged/archived properly with AMF. We should ensure they are implemented against a 100% valid specification.

As for the feature itself, the top advantage, to me, of the currently proposed LMT working space feature is that adjustments applied in non-ACES color spaces do not have to package all of the ACES to/from conversions into themselves. Theoretically, this makes the translation of pre-existing look libraries to LMT libraries easier, because you just have to work out a look’s working color space and specify it in the XML. I say theoretically, because in practice I’m sure a vast majority of stashed LUTs output display-referred RGB anyway. Lastly, those lin/log transforms are being trusted to the application rather than the LUT.
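To make the to/from mechanism concrete, below is a minimal Python sketch of applying a look inside a working space: convert to the working space, apply the look, then convert back. It makes several assumptions. ACEScct is the working space, with its constants taken from the ACEScct specification (S-2016-001). The input is already linear AP1, so the AP0-to-AP1 matrix that ACES2065-1 input would need is omitted. The look is a per-channel ASC CDL slope/offset/power that clamps negatives to 0 before the power, which is not the full set of CDL clamping rules. All function names are hypothetical.

```python
import math

# ACEScct constants (S-2016-001).
X_BRK = 0.0078125
Y_BRK = 0.155251141552511
A = 10.5402377416545
B = 0.0729055341958355


def lin_to_acescct(x):
    """Linear AP1 value -> ACEScct code value (linear toe below X_BRK)."""
    return A * x + B if x <= X_BRK else (math.log2(x) + 9.72) / 17.52


def acescct_to_lin(y):
    """ACEScct code value -> linear AP1 value."""
    return (y - B) / A if y <= Y_BRK else 2.0 ** (y * 17.52 - 9.72)


def cdl_sop(v, slope, offset, power):
    """Per-channel slope/offset/power; negatives clamped to 0 before the power."""
    return max(v * slope + offset, 0.0) ** power


def apply_in_working_space(lin_rgb, look, to_ws=lin_to_acescct, from_ws=acescct_to_lin):
    """to working space -> look -> from working space, channel by channel."""
    return [from_ws(look(to_ws(c))) for c in lin_rgb]


print(apply_in_working_space([0.18, 0.18, 0.18], lambda v: cdl_sop(v, 1.0, 0.05, 1.0)))
```

This is the part the working-space feature hands to the application: the look itself only ever sees ACEScct values.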

The top disadvantage, to me, is how unwieldy this is going to be with software support and interoperability. The current proposal specifies the fromLookTransformWorkingSpace and toLookTransformWorkingSpace as Color Space Conversion transforms. I saw earlier in the thread that Alex updated S-2014-002 to allow for user-supplied CSCs. I am wondering how all of this comes together. Many applications have already implemented a lot of these transformations, and they will need to map those functions to these transformIDs and somehow ensure they accurately reflect them. Does a user-supplied transform need to be uploaded to the ACES GitHub? Who provides reference images for these so every application knows it has implemented them properly? What happens when an application receives AMFs referencing a user-supplied LMT working space that it doesn’t support? Does that user need an emergency special build of the software? Or are we back to the same pain we’ve always been in w/o AMF, by manually communicating intentions and digging up the right files to fill out the color recipe?

I’m also concerned this will inevitably lead to different apps performing different to/from transforms, and generate paranoia around relying on AMF workflows. CDLs + LUTs are tedious, but at least you always get the same image out once you’ve stuck them in the correct order.

If we want to look at things like ASC CDL as an inspiration for metadata-driven specifications, maybe it’s worth trying to simplify AMF if we can, and consider removing this to/from transform flexibility. This would leave all of this up to the LMT itself, as expected by the TB-2014-010 document. I’ll end with a quote from the 2017 paper that argues for simplification of ACESclip:

“The ACESclip file format attempts to achieve many things at the same time which is likely another factor slowing down its adoption.”

Out of all the current components of AMF, I would reckon the concept of interpreting and mapping to/from transforms for the LMT working space will be high on the list of slowing down AMF adoption. Again, I’m a bit torn myself on this, but I wanted to unload all of the scrutiny I can before the bell rings.

Grammatical critique:

To preface, I know many industry folks who will be reading this spec and who are not native English speakers. I decided to pick apart most paragraphs and simplify the vocabulary and grammar as much as possible so it is a smoother read. For myself as well!

• Output Transform – on what display was this viewed?

This is completely pedantic, but the ODT does not tell us what display was used; it tells us the calibration of the display that was used for viewing. Maybe change this to:

• Output Transform – how was this viewed on a display?

For production, carriage of this information is crucial in order to unambiguously exchange ACES images, looks, and overall creative intent through its various stages (e.g. on-set, dailies, editorial, VFX, finishing).

For archival, storage of this information is crucial in order to form a record of creative intent for historical and remastering purposes.

This is reading awkwardly for me. Also, maybe it’s better to avoid hamstringing the specification by being specific to motion picture production? Suggestion:

To maintain consistent color appearance, transporting this information is crucial. Additionally, this information serves as an unambiguous archive of the creative intent.

An AMF may be associated with a single frame or clip. Additionally, it may remain unassociated with an image, and existing purely as a translation of an ACES viewing pipeline, or creative look, that could be applied to any image.

Attempt to reword for clarity:

An AMF may have a specified association with a single frame or clip. Alternatively, it may exist without any association to an image, and one may apply it to an image. An application of an AMF to an image would translate its viewing pipeline to the target image.

In Figure 1, the element names are extremely squished. Can the RRT + ODT blocks be arranged top down so that these element names can be presented larger?

5 Use Cases

In cases where the looks are stored external to the AMF, the files must be assigned a valid ACES LMT TransformID.

“ACES LMT TransformID”: I’m being pedantic, but it doesn’t help a newbie if they try to search this phrase in the spec and get no other results. The later part of the spec states this as “lookTransform” and “transformID”. Can this sentence at least refer to the relevant section: 6.2.1.6. Or, maybe this sentence can just be removed completely, since it’s a little overkill for the use cases section? Why state LMTs need a transformID, but not IDTs, ODTs, etc…

5.1 Look Development

Developing a creative look before photography can be done to produce a pre-adjusted reference for on-set monitoring. This may happen in pre-production at a post facility, during camera testing, or on-set during production. Typically, this has involved meticulous communication of necessary files and their intention, which may include a viewing transform, CDL grades, or more. The viewing transform, commonly referred to as a “Show LUT,” can vary in naming convention, LUT format, input/output color space, and full/legal range scaling. Exchanging files in this way obfuscates the creative intention of their application, due to lack of metadata or standards surrounding their creation.

I’ve tried to simplify this:

The development of a creative look before the commencement of production is common. Production uses this look to produce a pre-adjusted reference for on-set monitoring. The creative look may be a package of files containing a viewing transform (also known as a “Show LUT”), CDL grades, or more. There are no consistent standards specifying how to produce them, and exchanging them is complex due to lack of metadata.

AMF can store a creative look in order to be shared with a production to automatically recreate the look for on-set monitoring. A common way to produce a creative look in an ACES workflow is the creation of an LMT (Look Modification Transform), which separates the look from the standard ACES transforms. Further, AMF can include references to multiple LMTs, while ensuring they are all applied in the correct order to the image.

Attempt to simplify wording:

AMF contains the ability to completely specify the application of a creative look. This automates the exchange of these files and the recreation of the look when applying the AMF. In an ACES workflow, one specifies the creative look as one or more Look Modification Transforms. AMF can include references to any number of these transforms, and maintains their order of operations.

5.2 On Set

On-set grading software with AMF support can load or generate AMFs based on the viewing pipeline selected and any creative look adjustments done by the DIT or DoP.

Suggestion:

On-set color grading software can load or generate AMFs, allowing the communication of the color adjustments created on set.

5.3 Dailies

The AMF (or collection of AMFs) from on-set should be shared with dailies in order to be applied to the OCF (original camera files) and generate proxies or other dailies deliverables. Methodologies of exchange between on-set and dailies may vary, sometimes being done using ALE or EDLs depending on the workflow preferences of the project.

It is possible, or even likely, that AMFs are updated in the dailies stage. For example, a dailies colorist may choose to balance shots at this stage and update CDLs or LMTs. Another example could be that on-set monitoring was done using an HDR ODT and dailies is generating proxies using an SDR ODT.

Attempt to simplify:

Dailies can apply AMFs from production to the camera files to reproduce the same images seen on set. There is no single method of exchange between production and dailies. AMFs should be agnostic to the given exchange method.

It is possible, or even likely, that one will update AMFs in the dailies stage. For example, a dailies colorist may choose to balance shots at this stage and update CDLs or LMTs. Another example could be that dailies uses a different ODT than the one used in on-set monitoring.

It may be that AMFs are tracked the same way that CDLs and LUTs are tracked today (such as ALE or EDL), until more robust methods exist such as embedding metadata in the various formats used.

I think this sentence “dates” the spec too much. It reads more like an article by talking about the past/present/future. I think the main point here is that defining exchange has been decided to be out-of-scope of this spec, so I think it helps to make that clear. Suggestion:

This specification does not define how one should transport AMFs between stages. Existing exchange formats may reference them, or image files themselves may embed them. One may also transport AMFs independently of any other files.

5.4 VFX

A powerful use case of AMF is the complete and unambiguous communication of the ACES viewing pipelines or ’color recipe’ of shots being sent out for VFX work.

As with on-set, this is commonly done in a bespoke manner with combinations of CDLs and LUTs in various file formats in order for VFX facilities to be able to recreate the look seen in dailies and editorial.

AMFs should be sent alongside outgoing VFX plates and describe how to view the shot along with any creative look that it associated with the shot (CDL or LUT).

Attempt to set this up better and simplify:

The exchange of shots for VFX work requires perfect translation of each shot’s viewing pipeline, or ‘color recipe’. If the images cannot be accurately reproduced from VFX plates, effects will be created with an incorrect reference.

AMF provides a complete and unambiguous translation of ACES viewing pipelines. If AMFs travel with VFX plates, they can describe how to view each plate along with any associated looks.

VFX software should have the ability to read AMF as a template for configuring its internal viewing pipeline. Given the prevalence of OpenColorIO in the VFX software space, it is likely that AMF will inform choices of OCIO configuration in order to replicate the ACES viewing pipeline described in the AMF.

Attempt to simplify:

VFX software should have the ability to read AMF to configure its internal viewing pipeline. Or, AMF will inform the configuration of third party color management software, such as OpenColorIO.

5.5 Finishing

This would give the colorist or artist a starting point which is representative of the creative intent of the filmmakers thus far, at which point they may focus their time on new creative avenues, rather than spending time trying to recreate prior work done.

Attempt to simplify:

AMF can seamlessly provide the colorist a starting point that is consistent with the creative intent of the filmmakers on-set. This removes any necessity to recreate a starting look from scratch.

That is great feedback Joseph!

The ability to use non-ACES working spaces for the Look is certainly one of the most complex aspects of the spec and I agree it will probably be a leading source of interop problems. During the spec drafting working group meetings it seemed like most people felt this was a requirement for the spec, so the question is probably not “should this feature be included?” but rather “how can we design it to work as robustly as possible?” I share your concerns that the current design may prove problematic.

I thought your rewording suggestions were nice improvements. Alex, is it still possible to incorporate these?

Glad to have your participation Joseph!

Doug

I agree with @jmcc that including non-ACES working spaces in AMF may be overkill, and it is definitely slowing down the spec’s completion.

The ACESclip requirement VWG had identified such a feature to be, indeed, a requirement for AMF; that is why it is still being discussed.

I was, personally, neutral to that.

However, to clarify the rationale behind lmtWorkingSpace for @jmcc: consider that one may have a pre-existing show LUT working, for example, as an ACEScc-to-ACEScc LUT, or a LogC-to-LogC LUT.

Otherwise, re-using such a LUT as an LMT in an ACES workflow would mean telling these people to manually convert it into an ACES2065-to-ACES2065 LUT first, before it can be included as the LMT part of an AMF. It makes more sense to define the space(s) it works in, in case they’re not implicitly ACES2065-1.

That said, I also agree that, because AMF is an ACES component, it could be narrowed down, for now, to support only ACES color spaces.

Hey all,

I put together a solution to address the output referred use case where we want to go through the inverse of an output transform or inverse ODT / RRT.

You can take a look at it here:

Basically underneath the <aces:inputTransform> tag you can have a transformID for an IDT, or a series of tags specifying the transformID of an <aces:inverseOutputTransform> or an <aces:inverseOutputDeviceTransform> and <aces:inverseReferenceRenderingTransform>.

You’d use the inverse transforms to convert output referred images to ACES before going forward through the rest of the pipeline as specified in the AMF.
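The three forms described above can be sketched as a small builder. Below is a minimal Python sketch using ElementTree. It assumes that each inverse element wraps a transformId child, as the forward transforms do, and it reuses the namespace name quoted in §6 of the draft, which may since have changed. The inverse ODT is placed before the inverse RRT, matching the order listed above and the order in which they would run when going from output-referred images back to ACES. `input_transform` is a hypothetical helper, and the transform IDs in the usage line are placeholders.

```python
import xml.etree.ElementTree as ET

NS = "urn:acesMetadata:acesMetadataFile:v1.0"  # namespace name from the draft's §6; may have changed
ET.register_namespace("aces", NS)


def q(name):
    """Qualify a local name with the AMF namespace."""
    return f"{{{NS}}}{name}"


def input_transform(idt_id=None, inverse_output_id=None, inverse_odt_id=None, inverse_rrt_id=None):
    """Build <aces:inputTransform> in exactly one of three forms:
    an IDT transformId; an inverse output transform; or an inverse ODT
    together with an inverse RRT."""
    forms = [
        idt_id is not None,
        inverse_output_id is not None,
        inverse_odt_id is not None or inverse_rrt_id is not None,
    ]
    if sum(forms) != 1:
        raise ValueError("specify exactly one form of input transform")
    elem = ET.Element(q("inputTransform"))
    if idt_id is not None:
        ET.SubElement(elem, q("transformId")).text = idt_id
        return elem
    if inverse_output_id is not None:
        pairs = [("inverseOutputTransform", inverse_output_id)]
    else:
        if inverse_odt_id is None or inverse_rrt_id is None:
            raise ValueError("inverse ODT and inverse RRT must be given together")
        pairs = [("inverseOutputDeviceTransform", inverse_odt_id),
                 ("inverseReferenceRenderingTransform", inverse_rrt_id)]
    for tag, transform_id in pairs:
        child = ET.SubElement(elem, q(tag))
        ET.SubElement(child, q("transformId")).text = transform_id
    return elem


print(ET.tostring(input_transform(inverse_odt_id="ODT.placeholder",
                                  inverse_rrt_id="RRT.placeholder"), encoding="unicode"))
```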

Let me know if this makes sense before I write it up in the spec document.

Thanks for adding that @Alexander_Forsythe.

@ptr would this suffice for your Inv ODT questions here: AMF and transformIds for "special" IDTs and ODTs?

I want to voice my support for Joe’s grammatical edits to the spec. If there are no objections, I will update the spec with those edits.

I also agree that the non-ACES working spaces adds significant challenges to both implementation and interoperability (as we have already seen). In going back to old recordings from our Requirements group, it sounds like the use case was from a real-world project that used LogC as the LMT working space. Personally, I feel that this workflow could be adapted to utilize an ACEScct working space, and greatly reduce the complexity of the spec. This may deserve its own thread but I wanted to voice my opinion here.

Thanks,

Chris

I just had the chance to take a very brief look today, but the syntax in the new example4.amf looks very good to me. I like that it is explicit that this is not a regular IDT. So yes, that specific issue with the inverse-ODT “IDTs” seems solved very well with that.

I have a question about the latest changes about the WCS definitions that are proposed here: https://github.com/aforsythe/aces-dev/commit/b8497c0af1615ae037635b901df1818336e83d67

Generally limiting the definition of a working color space to the transforms that actually require it – namely the ASC-CDL node – seems like the right way to me in order to limit the use of WCS to necessary use cases, and to further substantiate the intended use of working color spaces in the context of LMTs. It also might help reduce maybe yet unforeseeable consequences for the future of “regular” LMTs, where now ACES 2065-1 (AP0) is always required in order to make them applicable as universally as possible.

I have one question about how to use that in a use case that we currently cover in our software:

In Livegrade (and I guess also in other applications) users have the option also to apply one or more 3D LUTs in the WCS (e.g. ACEScct) before or after the ASC-CDL.*

If we want to recreate such a pipeline (for instance IDT X -> ASC-CDL in WCS Y -> 3DLUT in WCS Y -> RRT -> ODT Z) in other systems, how would we encode that in the proposed AMF variant?

Maybe we are missing a second “legacy” transform node that also requires a WCS definition, namely a “LUT-based transform”. Again, you could argue that it is necessary to specify a WCS for that (because applying LUTs in linear doesn’t make much practical sense, in the same way as for ASC-CDLs). So we might need to introduce a mechanism for 1D / 3D LUTs similar to the one for ASC-CDLs. Or is this already covered somehow else?

I feel we’re getting very close now, but we haven’t yet nailed the use cases with externally defined transforms in the role of “LMTs”. By this I mean transforms that are custom-created for certain scenes or productions, and thus need to be defined outside the AMF file (e.g. as the mentioned 3D LUT files, or maybe also as CLF files), yet still need to be referenced from the AMF file somehow as look transforms in order to be able to recreate that pipeline.

Maybe @Alexander_Forsythe or @CClark can add some clarifying comments. Thank you!

(*) Allowing to apply 3D LUTs in the WCS together with the ASC-CDL has become necessary in order to allow “custom LMTs” (e.g. provided by a post house or dailies facility) to be transported and applied in the format of 3D LUTs – especially as long as we didn’t have CLF or other formats that allow to be applied meaningfully in the scene-linear encoding of ACES 2065-1. Thus providing such “LMTs” as 3D LUTs (to be applied in a certain working color space) has become a practicable way of encoding and applying custom LMTs in such available 3D LUT formats.