Post your questions, comments, ideas, … on CLF implementation in your code here. Thanks!

I am part of the group investigating adding CLF to the LUT support in Colour Science for Python.

Attached is my write-up of some thoughts on the subject, particularly related to bit depth handling, since I originally misunderstood the way that was supposed to work. Apologies that it is something of a ‘brain dump’. It was never really intended to be shown in public!

It is also just my understanding from reading the spec, and some brief testing, so it is possible some of my assumptions may be wrong.

CLF_bit_depth_v3.pdf (109.0 KB)

Hi JD.

During September to November 2014 (just before ACES 1.0.0 went public) I made a few proposals to improve the CommonLUT format. I remember Alex, Jim and FilmLight’s Peter Postma were also very active on the subject. Those proposals were too heavy to be implemented at that time, given the short time to release.

I tried to bring up the discussion many times (even later on here); some of those discussions may be found here (please note this is a mixed feature request for the whole ACESNext project, not just CLF):

and here (focused on better integration between CLF and ACESclip):

and here (not really on CLF, but focusing on ACESclip and CTL):

Is the old Basecamp-based forum still alive? I think there are tons of technical XML comments buried in there by many contributors (mostly Alex F., Jim H., Doug W. and myself).

I tried to dig through my old inboxes, but could only raise two email threads “from the dead”: one conversation with Jim H. from 2014 (PDF page 1) and one post-interview correspondence with J.Z. from 2018 (PDF page 2, where I removed the suggestions not pertinent to CLF); please download below.

Walter A.'s comments on CLF from Oct-2014.pdf (729.5 KB)

It took me a while to get back to my original CommonLUT v2.0 proposal on the old Basecamp site (dating back to Oct-Nov 2014).

I’m attaching here the PDF with the original postings on Basecamp.

Walter A.'s proposal on CLF 2.0 from Oct-2014.pdf (685.7 KB)

I will upload the content of the two CLF samples, as mentioned in the above PDF:

Academy_ColorLUT_sample_v4.clf

and, later on,

Academy_ColorLUT_sample_v5_minimal.clf

in the following post (with a different extension to “tweak” ACEScentral into accepting the uploads).

There are a few seminal ideas there, like having the CLF “store” a whole series of color transformations (coming either from 1D LUTs, 3D LUTs, CDLs, RGB matrices, etc.), that can be composed / chained to form complex transformations.

A practical use is the combination of one or more creative LMTs, with possibly RRTs and/or ODTs referring to non-standard devices.

Of course the original ACES Input/Output transforms may be simply recalled by using the Academy component’s TransformID or TransformHash names from the original CTLs.

This feature (particularly if combined with merging CLFs with an ACESclip, in order to bind the color-transform with the actual footage/content), would really boost the interoperability of moving footage and color mappings across applications.

This is the first sample mentioned in the PDF from my previous post, Academy_ColorLUT_sample_v4.clf. It is a hugely revisited version of CLF where several ColorLUTs may be composed of several transformations that can be chained together across different pipelines (e.g. one for post, one for mastering, one as a viewing proxy, etc.).

The information in the XML content of the CLF is broken down in a way very similar to how CSS is composed and applied to an HTML document via its class and id attributes.

This is a similar philosophy to how assets are handled in packing lists and then in playlists across the several XML layers of DCP metadata.

Academy_ColorLUT_sample_v4.ctl (6.7 KB)

The second example, Academy_ColorLUT_sample_v5_minimal.clf.xml, is instead a minimal version of the above LUT, with less “advanced” features. It was proposed shortly after as a possible candidate for ACES 1.0.0 (whereas the more extended format was clearly to be proposed for a future ACESNext release)

Academy_ColorLUT_sample_v5_minimal.clf.xml.ctl (4.3 KB)

P.S.: The files were renamed to .ctl because ACEScentral doesn’t otherwise allow them to be uploaded. Their extension should really be .clf.

Hi Nick,

So a few comments on your .pdf

First, although I would have liked it to be so, the Python code never got the in-depth validation to call it the “reference implementation”. I do like it, but it is, I think, still a “guide for implementation”. In other words, though unlikely, it could contain errors or not reflect the document completely. Still, it is a great resource and HP did great with it. I believe it functions well.

- There is a statement that processing should be done at 32f for all bit depths.

- “The inBitDepth of each Process Node defines the way that incoming data should be interpreted. This means that it should match the outBitDepth of the preceding Process Node. I am unclear whether this match is mandated by the spec (I cannot see that explicitly stated) and an error should be raised if there is a mismatch.”

All elements of design of a LUT (and the workflow for it) were intended to be accessible to the LUT designer. So except for the case of integer-to-float conversion (i.e. 1023/1023=1.0), control of bit depth changes should occur using the Range node for scaling of bit values. So a mismatch of In and Out should raise an error. A Range node can be used to correct all mismatches, and each end of the Range node would ‘connect’ to the previous and following nodes.
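As a rough illustration of the "mismatch should raise an error" rule, here is a minimal sketch of a chain check. The `ProcessNode` class and `check_bit_depth_chain` function are hypothetical stand-ins for whatever a parser produces; they are not taken from the Python CLF code or any other implementation.

```python
from dataclasses import dataclass


@dataclass
class ProcessNode:
    # Hypothetical stand-in for a parsed CLF ProcessNode, not a real API.
    name: str
    in_bit_depth: str
    out_bit_depth: str


def check_bit_depth_chain(nodes):
    """Raise ValueError when a node's inBitDepth differs from the
    outBitDepth of the node immediately before it."""
    for previous, current in zip(nodes, nodes[1:]):
        if previous.out_bit_depth != current.in_bit_depth:
            raise ValueError(
                "{} outputs {} but {} expects {}".format(
                    previous.name, previous.out_bit_depth,
                    current.name, current.in_bit_depth))


# A Range node sits between the 16f and 12i stages to make the change explicit.
chain = [
    ProcessNode("lut1d", "10i", "16f"),
    ProcessNode("range", "16f", "12i"),
    ProcessNode("lut3d", "12i", "12i"),
]
check_bit_depth_chain(chain)  # passes silently
```

Removing the Range node from this chain would leave a 16f output feeding a 12i input, and the check would raise.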

" If none of minInValue, minOutValue, maxInValue, or maxOutValue are present, then the Range operator performs only scaling. The scaling of minInValue and maxInValue depends on the input bit-depth, and the scaling of minOutValue and maxOutValue depends on the output bit-depth.”

- I think your process description of the Range node is a bit off. The bit depths are normative and the Min and Max values may be within the range of the bit depths. In general, I do not think that Min and Max values should be omitted for floats. There was a controversy here because the LUT format is designed to work on the entire range of a float and manipulated as such. The spec is not completely clear that the default of [0…1.0] is only for when interacting with an integer range on either end of the node. It would not make sense in a full floating point in and out to normalize a full range scene value to a scale of 0…1; accuracy would suffer.
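A minimal sketch of how I read the Range behaviour being discussed, under stated assumptions: with no Min/Max values the node only rescales between the nominal full-scale values of the two bit depths (so float to float is a pass-through), and with all four values it applies a linear remap and clamps to the output limits. The names `FULL_SCALE` and `apply_range` are hypothetical, the clamping is my assumption, and the case where only one pair of limits is given is deliberately left out.

```python
# Nominal full-scale code value per bit depth; floats are treated as 1.0.
FULL_SCALE = {"8i": 255.0, "10i": 1023.0, "12i": 4095.0,
              "16i": 65535.0, "16f": 1.0, "32f": 1.0}


def apply_range(value, in_depth, out_depth,
                min_in=None, max_in=None, min_out=None, max_out=None):
    limits = (min_in, max_in, min_out, max_out)
    if all(v is None for v in limits):
        # Scaling only: driven purely by the in/out bit depths.
        return value * FULL_SCALE[out_depth] / FULL_SCALE[in_depth]
    if any(v is None for v in limits):
        raise ValueError("this sketch handles all four limits or none")
    scale = (max_out - min_out) / (max_in - min_in)
    result = (value - min_in) * scale + min_out
    # Assumption: results are clamped to the output limits.
    return min(max(result, min_out), max_out)


# Integer to float with no limits: 1023 in 10i becomes 1.0.
print(apply_range(1023.0, "10i", "32f"))
# Scene-linear float with an explicit top 'white' of 222.0 mapped to 10-bit codes.
print(apply_range(111.0, "32f", "10i", 0.0, 222.0, 0.0, 1023.0))
```

The second call is the kind of explicit float-to-integer mapping described above: nothing about the float range is assumed, because the designer states it in the node.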

- I also think that the description of ASC-CDL isn’t right. It isn’t the only case where the in and out bit depths need to change. See 9.5, “Rescaling operations for input and output to a ProcessNode”:

“The input and output bit depths of a single node may not match each other. For 1DLUTs and 3DLUTs, the output array values are assumed to be in the output bit depth. For Matrix elements, a scale factor must be applied within the coefficients of the matrix.”

[This is also about the IN and OUT of a Node changing, which is where the Matrix element note comes from.]
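To illustrate the Matrix remark, here is a small sketch of folding the bit-depth scale factor into the coefficients. `FULL_SCALE`, `scaled_matrix` and `apply_matrix` are hypothetical helper names, and treating the scale as out full-scale divided by in full-scale is my reading of the quoted section rather than a confirmed rule.

```python
# Nominal full-scale code value per bit depth; floats are treated as 1.0.
FULL_SCALE = {"8i": 255.0, "10i": 1023.0, "12i": 4095.0,
              "16i": 65535.0, "16f": 1.0, "32f": 1.0}


def scaled_matrix(matrix, in_depth, out_depth):
    """Return a copy of a normalised 3x3 matrix with the in/out bit-depth
    scale factor folded into every coefficient."""
    factor = FULL_SCALE[out_depth] / FULL_SCALE[in_depth]
    return [[coefficient * factor for coefficient in row] for row in matrix]


def apply_matrix(matrix, rgb):
    return [sum(c * v for c, v in zip(row, rgb)) for row in matrix]


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

# A 10i-in, 12i-out "identity" carries 4095/1023 on its diagonal.
ten_to_twelve = scaled_matrix(identity, "10i", "12i")
print(apply_matrix(ten_to_twelve, [1023.0, 0.0, 511.0]))
```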

In my view, you have found the points of contention where the Python code made assumptions that do not exactly match the spec (and the spec itself is sometimes unclear about which way to go).

The CLF was designed to be able to create LUTs fully in the floating point domain, so that for example, you could work in linear ACES with 222.0 as the top ‘white’ fed into a range node for conversion to integer without any assumption about scaling of the integers. The implementations though focus too much on the traditional integer LUT manipulations as a default. Again control of all of these should be with a LUT designer and a minimal number of assumptions should be made. But the Python code does make some assumptions as does CTF, and in my view sacrifice generality. Improvement in the wording and clarity of the spec is why the CLF document is still listed as a DRAFT on the Academy website.

I also wanted to note that there existed some hardware manufacturers who could directly load CLF LUTs in hardware using an OutDepth of 10i. So the world is still not completely software, and the manipulations of a Nuke-style node graph are not necessarily the only assumptions that should be used. [Floating point, though, is less onerous than it used to be.]

The reason for 32f and 16f separately is again to allow the LUT designer to control precision and range of the input or output. (Again, no scaling should be assumed if both ends are floating point. The Range node should be used instead to make it explicit if you want 0 to 1.0 scaling of a section of the float.) A 16f Out to a 32f In is really just a straight pass-through with the native 32-bit processing of a ProcessList. It could matter on output though, so that people can output a full HDR scene-referred file with greater range than EXR if they want. Again, the format was not just for output devices; it was especially for full range scene-referred float LMTs. So be careful about limiting the generality in your description of processing for each.

I was mistaken when I wrote that. It is stated in the spec. In section 4 it says “The input format of each node must be matched by the output format of the previous node in the processing chain.”

I will summarise here the content of a few email discussions I have had recently on the subject of CLF with @jdvandenberg , @doug_walker , @hpduiker and @Thomas_Mansencal.

As part of my testing I created the attached CLF which goes through a series of transforms, using the different types of Process Node possible in CLF. I used only half-float bit depth to reduce the permutations necessary. The transforms are paired so that the net result of the CLF (within limitations) should be a NoOp.

kitchen_sink.ctl (4.1 MB) (renamed from .xml to .ctl for upload)

Testing my CLF in various implementations, it became immediately apparent that different implementations support the S-2014-006 spec to varying extents. The spec itself states that support of some aspects (IndexMaps with dim>2, for example) is not required. I would suggest that specifying, but not requiring support of, a type of process is counterproductive. For something which is supposed to be a “common” LUT format, this would be problematic: an LMT archived with a project as CLF might use a non-required feature which worked on the system used for original mastering, but which is (permissibly) not supported on another system used for subsequent remastering.

My testing also indicated that some features which are required are not supported in all implementations. I will refrain from being specific at this time.

I think that the next iteration of CLF should be rigidly specified, and support of all features should be mandated. I do think that some features could be removed, to simplify things. One candidate, in my opinion is support for integer bit depths. These days, integer LUTs are only really relevant for hardware LUT boxes. Most of these support only a single 1D or 3D LUT, with some, such as the FSI BoxIO, supporting a 1D input LUT, a 3D cube and a 1D output LUT. This means that in most cases a complex CLF transform will need to be collapsed to a single 3D LUT for the LUT box, and the compromises inherent in that accepted since the LUT box is likely to be used only in a monitoring path. Even where a CLF is being designed specifically for the capabilities of a particular LUT box, say 1D > 3D > 1D for a BoxIO, it would not be problematic to store the array values as floating point, and it would be trivial to multiply them by 1023 or 4095, as appropriate, as part of the upload to the hardware. LUTs designed for hardware LUT boxes are at any rate a special case, since they do not need to take linear input, being driven by an SDI signal. Linear data over SDI will not be commonplace until floating point SDI is a reality, at which point integer support is moot anyway.
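As a sketch of the "multiply by 1023 or 4095 on upload" step, assuming the array is stored as normalised floats and the LUT box wants unsigned integer codes (the function name `quantise_for_upload` is hypothetical):

```python
def quantise_for_upload(values, bits):
    """Convert normalised float LUT entries to integer code values,
    clamping anything outside 0-1 to the legal code range."""
    max_code = 2 ** bits - 1
    codes = []
    for value in values:
        code = int(round(value * max_code))
        codes.append(min(max(code, 0), max_code))
    return codes


float_entries = [-0.1, 0.0, 1.0, 1.2]
print(quantise_for_upload(float_entries, 10))  # [0, 0, 1023, 1023]
print(quantise_for_upload(float_entries, 12))  # [0, 0, 4095, 4095]
```

The clamping is the lossy part; it only happens at the hardware boundary, so the archived CLF keeps the unclamped float values.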

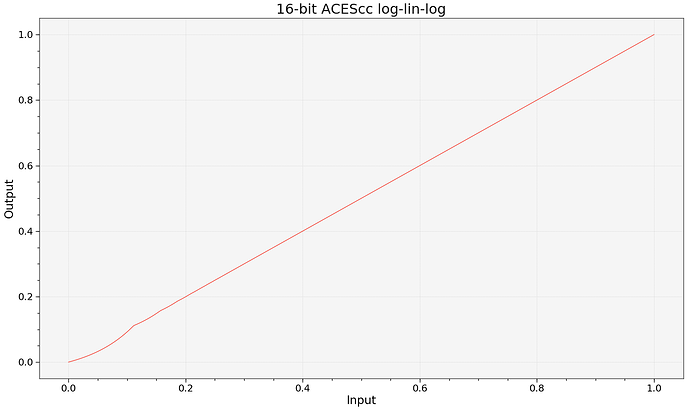

I would also put the case for some “convenience functions” to be added. Legal <> Full range is one candidate, although these are trivial to implement as a Range Process Node. More useful in my opinion would be some ACES specific colour space conversions. Since LMTs are specified to take ACES2065-1 linear data as input, and looks may often be designed in a grading system working in ACEScc or ACEScct, one of the first operations in a CLF may be a conversion to e.g. ACEScc. Intuitively one might think that this could be done simply with a Matrix and a sufficiently large 1D LUT. However for this to be done accurately the 1D LUT must have non-uniform input sampling, using either a fully enumerated IndexMap or the halfDomain setting. If a simple 1D LUT is used, even with 65536 entries, the transform from linear to ACEScc and back will result in distortion, even before any look is applied. This is because even 65536 samples are not sufficient if evenly distributed over the range covered by 0-1 in ACEScc, as shown in this plot:

An exact explanation of the reason for the distortion near zero is too much detail to go into here.

I therefore suggest that preset transforms between the various ACES colour encodings would help transform designers make better, more accurate transforms more easily. I would also suggest that some of the more complex elements of the forward and reverse RRT and ODTs would be useful, to facilitate creation of empirical LMTs without the need to bake large parts of the process into 3D cubes, with their associated limitations. The granularity of the breakdown of these transforms is up for discussion. While it might give the greatest flexibility if all the elements of the “RRT sweeteners” were available separately, this might overcomplicate things. Having just the base set of Output Transforms in forward and reverse forms would be helpful.

The ideal might be to allow transforms defined as mathematical expressions. However I do not know what the implication of this would be for real-time implementation in grading systems.

I also want to reiterate the suggestion from my earlier PDF that the <InputDescriptor> and <OutputDescriptor> tags change from free text comments to allow for at least ACES standard colour spaces to be specified and interpreted appropriately by implementations. This would allow the removal of redundant (and potentially lossy) conversions to and from linear by e.g. allowing an LMT to specify that it receives and returns ACEScct image data.

Hi Nick,

Great work! Couple of bits of feedback:

I’d suggest separating the definition of a library of transforms from the development of a LUT format. They feel like pretty different challenges. Standardizing and validating CLF support feels like one of the biggest challenges. That was my motivation in writing the Python implementation as a reference originally.

For extensions to the format, I might suggest also looking at some of what @doug_walker and team have done with ‘CTF’ in the Autodesk products. Some of the process node types that they’ve added would be very useful to have in the general CLF spec.

The extensions, plus a few of my own are listed in the Python repo readme here

and copied below

Extensions

- Autodesk extensions
  - Gamma ProcessNode
  - Log ProcessNode
  - Reference ProcessNode
    - With some separation of Autodesk-specific functionality like the ‘alias’ attribute
  - ExposureContrast ProcessNode
  - ‘bypass’ Attribute
  - DynamicParameter Element
  - ASC-CDL ‘style’ keywords
- Duiker Research extensions
  - Group ProcessNode
    - Useful for organizing list of nodes that represent a single filter/transform
  - ‘floatEncoding’ Array Attribute and associated behavior to allow for exact floating-point value storage and transmission, i.e. without converting to a potentially ambiguous decimal representation
  - gzipped reading and writing
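To show what exact floating-point storage can look like in general, here is a small sketch that writes a 32-bit float as the hexadecimal form of its bit pattern and reads it back. This is only an illustration of the idea; the actual syntax and options of the ‘floatEncoding’ attribute are not reproduced here, and both function names are hypothetical.

```python
import struct


def float_to_hex32(value):
    """Encode a value as the 8-digit hex bit pattern of its 32-bit float."""
    return "{:08x}".format(struct.unpack("<I", struct.pack("<f", value))[0])


def hex32_to_float(text):
    """Decode an 8-digit hex bit pattern back into the same 32-bit float."""
    return struct.unpack("<f", struct.pack("<I", int(text, 16)))[0]


print(float_to_hex32(1.0))  # 3f800000
encoded = float_to_hex32(0.1)
print(encoded, hex32_to_float(encoded))
```

Because the text form is the bit pattern itself, reading and writing never passes through a decimal rounding step.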

My two cents,

HP

I’ll admit to being influenced by what Autodesk have done with CTF in my suggestions for extending CLF!

S-2014-006 already defines various ‘style’ keywords for ASC CDL – ‘Fwd’, ‘Rev’, ‘FwdNoClamp’ and ‘RevNoClamp’. Does CTF add more?

Nick, regarding your question about the style keyword for the CDL op, technically yes, there are four more, but functionally they are just aliases. In other words, during the S-2014-006 working group meetings, it was decided that for CLF the group preferred a different set of strings for the four options than what we were using in CTF. So our CTF/CLF parser recognizes both the new strings and the old strings, but mathematically it’s still just the same four options: the official ASC CDL v1.2 equations, the similar equations without clamping, and the inverse of both.

I also agree that the Duiker Research extensions proposed by HP would be useful.

Hi all! Looking forward to the call coming up in a few weeks.

Two things I would definitely like to discuss:

- I second Nick’s request for eliminating integer formats from the spec. I do remember a call (maybe right before ACES 1.0?) where this was discussed and I do recall hearing some agreement there. I do understand Jim’s use case example but I really do think hardware manufacturers are able to go 32-bit float with a conversion on their end. It would be great to get someone on the call to confirm this. Do we have any hardware manufacturers here? Please let me know. Honestly this is the one thing that has kept me from implementing. I would also like to hear an argument for keeping RawHalfs and HalfDomain around as I think they are not needed either. There’s so much room for confusion and differing implementations - I think the simplest solution for getting everyone to implement the same way is to reduce the permutations of possible inputs and outputs, preferably to one (32-bit float).

- IndexMaps seem to function identically to a 1D LUT, so I do wonder if we actually need them to stick around. Obviously if removed we would still need a way to define the input bounds of LUTs.

See my earlier post (with the plot) for my reasoning why either IndexMap (with more than the required two entries) or halfDomain is needed for accurate linear to log conversion over a wide range. An IndexMap is not the same as a 1D LUT. It can often be like a 1D LUT applied in reverse: a 1D LUT normally has equally spaced input samples and unequally spaced output values. An IndexMap lets you have unequally spaced input samples, perhaps with equally spaced output values, which is useful for lin to log. halfDomain effectively is a preset IndexMap with 65536 entries which are all the possible half-float values.
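A minimal sketch of the halfDomain idea, to make the "one entry per half-float value" point concrete. The table is indexed by the 16-bit pattern of the input, so sample spacing follows the half-float grid and is dense near zero. `curve` is a hypothetical placeholder for a lin-to-log function, and mapping non-finite inputs to 0.0 is purely my assumption.

```python
import math
import struct


def half_bits(value):
    """16-bit pattern of the half float nearest to value.
    struct raises OverflowError for magnitudes beyond the half-float range."""
    return struct.unpack("<H", struct.pack("<e", value))[0]


def half_value(bits):
    """Half-float value represented by a 16-bit pattern."""
    return struct.unpack("<e", struct.pack("<H", bits))[0]


def curve(x):
    # Hypothetical placeholder for a lin-to-log encoding.
    return math.log2(max(x, 2.0 ** -16))


# One table entry for every possible half-float bit pattern.
table = []
for bits in range(65536):
    v = half_value(bits)
    table.append(curve(v) if math.isfinite(v) else 0.0)


def apply_half_domain(x):
    # No interpolation in this sketch: the input snaps to the nearest half float.
    return table[half_bits(x)]


print(apply_half_domain(0.18), apply_half_domain(2.0 ** -14))
```

A real implementation would also have to decide whether to interpolate between adjacent half-float entries for 32f input; this sketch does not.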

rawHalfs is more of a grey area. It lets you precisely specify half-float output values rather than decimal approximations. But how crucial that is I would say is debatable. By my calculations, eight decimal places is sufficient to unambiguously define any half-float value.
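The decimal-places claim can be checked by brute force. This sketch assumes "decimal places" means fixed-point digits after the decimal point (as in `%.8f`), and it skips infinities and NaNs; `count_mismatches` is a hypothetical helper name.

```python
import math
import struct


def half_from_bits(bits):
    return struct.unpack("<e", struct.pack("<H", bits))[0]


def bits_from_value(value):
    return struct.unpack("<H", struct.pack("<e", value))[0]


def count_mismatches(decimal_places):
    """Count finite half floats that do not survive being written with a
    fixed number of decimal places and read back."""
    mismatches = 0
    for bits in range(65536):
        value = half_from_bits(bits)
        if not math.isfinite(value):
            continue
        text = "{:.{}f}".format(value, decimal_places)
        if bits_from_value(float(text)) != bits:
            mismatches += 1
    return mismatches


print(count_mismatches(8))  # 0
print(count_mismatches(7))
```

The reasoning behind the result: the smallest spacing between half floats is 2^-24 (about 5.96e-8), and rounding to eight decimal places moves a value by at most 5e-9, which is well under half that spacing. Seven places is not enough for the smallest subnormals.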

Nick,

Re: IndexMaps of course! I forgot about your chart earlier. Just out of curiosity, in your testing, what size 1D LUT will accurately perform the conversion?

As far as rawHalfs, you’d be hard-pressed to find anyone working with half floats on the CPU as most languages (CPU-based) have no native support for half float primitives.

Define “accurately”! You need to go to 20-bit (1048576 entry table) before the plot looks visually like a straight line at a reasonable size.

    import colour
    import numpy as np
    import matplotlib.pyplot as plt

    bit_depth = 20

    # 1D LUT sampled uniformly over the linear range that ACEScc 0-1 decodes to.
    fromLin = colour.io.LUT1D(size=2**bit_depth,
                              domain=[colour.models.log_decoding_ACEScc(0.0),
                                      colour.models.log_decoding_ACEScc(1.0)])
    fromLin.table = colour.models.log_encoding_ACEScc(fromLin.table)

    # Decode an ACEScc ramp to linear, then re-encode it through the LUT.
    x = np.linspace(0, 1, 2**bit_depth)
    y = fromLin.apply(colour.models.log_decoding_ACEScc(x))

    p = plt.plot(x, y)
    plt.ylabel('Output')
    plt.xlabel('Input')
    plt.title('{}-bit ACEScc log-lin-log'.format(bit_depth))
    plt.show()

Gotcha. Just trying to determine the feasibility of not needing a color transform node. A 1D LUT of that size isn’t really an issue with modern GPUs (around 8MB texture) but on constrained systems it could be. I do wonder then if we need to discuss adding an ACES process node that provides a way to go back and forth between linear and ACES log formats. I’m not really for adding arbitrary log formats as that could open a can of worms in terms of support, but I think Academy standardized ACES functions could be acceptable. This is the same justification that can be made for why the CDL process node exists.
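For comparison with the LUT approach, this is roughly what a parametric node would have to evaluate. The sketch follows the ACEScc encoding and decoding equations as I understand them from the Academy ACEScc specification (S-2014-003); the function names are hypothetical and the AP0 to AP1 matrix that would normally precede the encoding is left out.

```python
import math


def lin_to_acescc(lin):
    """ACEScc encoding of a single linear value."""
    if lin <= 0.0:
        return (math.log2(2.0 ** -16) + 9.72) / 17.52
    if lin < 2.0 ** -15:
        return (math.log2(2.0 ** -16 + lin * 0.5) + 9.72) / 17.52
    return (math.log2(lin) + 9.72) / 17.52


def acescc_to_lin(cc):
    """ACEScc decoding of a single encoded value."""
    if cc <= (9.72 - 15.0) / 17.52:
        return (2.0 ** (cc * 17.52 - 9.72) - 2.0 ** -16) * 2.0
    if cc < (math.log2(65504.0) + 9.72) / 17.52:
        return 2.0 ** (cc * 17.52 - 9.72)
    return 65504.0


for lin in (2.0 ** -20, 0.18, 1.0, 100.0):
    cc = lin_to_acescc(lin)
    print(lin, cc, acescc_to_lin(cc))
```

Evaluated analytically like this, the round trip is exact to floating-point precision over the whole range, with no table size to choose.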

I agree.

Indeed. The result of ASC CDL can be replicated with a 3x1D LUT and a matrix, but there is a logic for having it as a parameterised invertible preset mathematical transform.
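A minimal per-channel sketch of why the CDL works well as a parameterised, invertible node. It covers only slope, offset and power in the clamping ‘Fwd’ and ‘Rev’ styles; saturation and the NoClamp variants are left out, because how negative values meet the power function there is something I would be guessing at. The function names are hypothetical.

```python
def clamp01(x):
    return min(max(x, 0.0), 1.0)


def cdl_sop(x, slope, offset, power, style="Fwd"):
    """Apply slope/offset/power to one channel value, or invert it."""
    if style == "Fwd":
        return clamp01(x * slope + offset) ** power
    if style == "Rev":
        return clamp01((clamp01(x) ** (1.0 / power) - offset) / slope)
    raise ValueError("this sketch only handles 'Fwd' and 'Rev'")


graded = cdl_sop(0.4, slope=1.2, offset=0.05, power=0.9, style="Fwd")
restored = cdl_sop(graded, slope=1.2, offset=0.05, power=0.9, style="Rev")
print(graded, restored)
```

Three numbers per channel describe the whole transform and its inverse, which is the advantage over baking the same result into a 3x1D LUT and a matrix.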

First off, happy new year! I have a feeling 2019 will be the year of the CLF (among other things of course).

Thank you to all of you for already jumping on the subject. I read all your posts and will gather the main points for the call. It will be posted within the ‘Notice of Meeting’ thread. You will be welcome to weigh in.

There is something in the current spec which seems ambiguous to me. Section 9.3 says:

“…a transform designer and/or the application should insure that output floating-point values do not contain infinities and NaN codes.”

But section 9.4 says, regarding halfDomain LUTs:

" The design of this 1D array must take into account the presence of negative numbers, infinities, and NaNs in the original 16f bit pattern."

+/- infinity can be clipped to the maximum and minimum float value respectively, but what is the correct handling of NaNs? Logic might suggest that they should be passed unchanged. But this would conflict with the demand that the output not contain NaNs. An identity halfDomain LUT would not be compliant. So is it a requirement that any transform designer must pick a value for NaNs to be mapped to?
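To put numbers on the problem, this sketch counts how many of the 65536 half-float bit patterns are infinities or NaNs, and shows one possible sanitising rule for table values. Clipping infinities to ±65504 follows the suggestion above; the value NaNs are mapped to (0.0 here) is an arbitrary assumption, since that is exactly the open question. `sanitise` is a hypothetical helper.

```python
import math
import struct

HALF_MAX = 65504.0  # largest finite half-float value


def half_from_bits(bits):
    return struct.unpack("<e", struct.pack("<H", bits))[0]


all_halfs = [half_from_bits(bits) for bits in range(65536)]
nan_count = sum(1 for v in all_halfs if math.isnan(v))
inf_count = sum(1 for v in all_halfs if math.isinf(v))
print(nan_count, inf_count)  # 2046 2


def sanitise(value, nan_replacement=0.0):
    """Return a finite value: infinities clip to +/-HALF_MAX, and NaNs
    become nan_replacement (an arbitrary choice in this sketch)."""
    if math.isnan(value):
        return nan_replacement
    return min(max(value, -HALF_MAX), HALF_MAX)


identity_table = [sanitise(v) for v in all_halfs]
```

So an "identity" halfDomain table that satisfies section 9.3 cannot be a true identity for 2048 of its entries; whatever the designer picks for them is a deliberate choice.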

NaN doesn’t exist in math. Most of the time, it is just a +infinity or -infinity. For instance, 2/0 is a NaN in computer science but a +infinity or -infinity depending on the direction of the limit. My point being: I am pretty sure we can force the LUT designer to pick +/-infinity instead of a NaN.