I'm too young to have ever worked with film stock (I've done one feature, but it was delivered as ADX10 and they wanted ACES AP0 back, so it was very simple).

As a result, I have no good understanding of the relationship between scene light and the code values that come out of a film scanner.

In my mind it would go like this:

Film stock has a certain response to light: depending on the intensity and wavelength of the light, it causes a change in density on the negative, per color layer. (I have done a lot of analog photography, so this is not foreign to me.)

This response can be measured and profiled.

The scanner then scans the negative, which again causes a change in current (?) depending on the density of the negative. (I have no idea how laser scanners work.) This goes into an A/D converter that quantizes it into linear 16-bit (or whatever) code values. Those then get processed into something like ADX10 or 12-bit log and saved as DPX files, using the aforementioned film stock and laser profiles to create a scene-referred output.
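To make my guess concrete, here it is as a rough Python sketch. Every number in it (the film gamma, the base density, the density range of the 10-bit log encoding) is a made-up placeholder, and the function names are just mine. It is not a real stock profile, scanner model, or the actual ADX10 encoding; it only shows the chain exposure -> density -> linear scanner code -> log code as I imagine it.

```python
import math

# Hypothetical placeholder values, not measurements of any real stock or scanner.
FILM_GAMMA = 0.6     # assumed slope of the straight-line part of the film response
BASE_DENSITY = 0.2   # assumed density of unexposed, developed negative
MAX_DENSITY = 2.0    # assumed density mapped to the top of the 10-bit log range


def exposure_to_density(relative_exposure):
    """Scene light -> negative density, using only a straight-line log response.

    relative_exposure is measured against the exposure where the film just
    starts to respond, so anything below 1.0 is clamped to base density.
    """
    return BASE_DENSITY + FILM_GAMMA * math.log10(max(relative_exposure, 1.0))


def scan_to_linear_code(density, bits=16):
    """The sensor sees transmittance T = 10^-D; the A/D quantizes it linearly."""
    transmittance = 10.0 ** (-density)
    return round(transmittance * (2 ** bits - 1))


def linear_code_to_log10bit(code, bits=16):
    """Turn the linear code back into density and store it as a 10-bit log value."""
    transmittance = max(code, 1) / (2 ** bits - 1)
    density = -math.log10(transmittance)
    return min(1023, round(density / MAX_DENSITY * 1023))


if __name__ == "__main__":
    for exposure in (1, 10, 100):
        density = exposure_to_density(exposure)
        linear_code = scan_to_linear_code(density)
        log_code = linear_code_to_log10bit(linear_code)
        print(exposure, round(density, 3), linear_code, log_code)
```

In this toy version the log code is just a rescaled density, so getting back to scene light would mean inverting `exposure_to_density` with the profile of the stock, which is the part I am least sure about.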

Is this roughly correct? (I am sure I got something wrong, as I am really just guessing. Please correct me! I am keen to understand.)

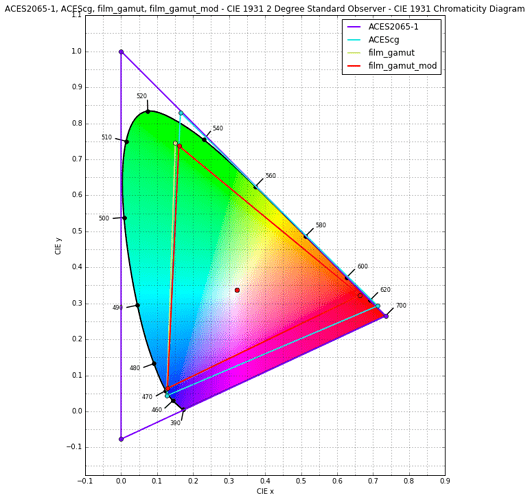

What even is ADX10? I wasn't able to find much about it on Google. Is it Cineon with the AP1 gamut? When going ADX10 -> ADX10 there is zero error in Nuke, so I assume it's not doing any gamut conversion under the hood.

Then also, what gamut is Cineon? How do you define the colorspace of a film stock, etc.?

So many questions.