Help! I am totally lost trying to set up this new stop-motion production for monitoring HDR content and eventually delivering in Dolby Vision.

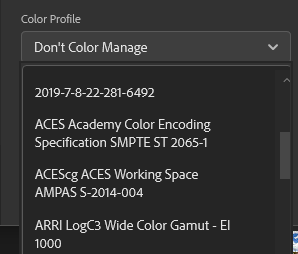

We have access to a Canon DP-V2421 monitor, which meets the Dolby Vision mastering monitor specs. Our final delivery is supposed to be 16-bit TIFF files in the P3 color space, with a D65 white point and the PQ (SMPTE ST 2084) transfer curve.
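To make sure I understand what the PQ part of that spec means numerically, here is a minimal Python sketch of the ST 2084 inverse EOTF (absolute luminance in nits to a 0-1 signal). The constants are the ones from the ST 2084 definition; the `to_16bit` helper is my own hypothetical addition and assumes the TIFFs use full-range integer encoding, which I have not confirmed against the delivery spec.

```python
# SMPTE ST 2084 (PQ) constants
M1 = 2610 / 16384        # 0.1593017578125
M2 = 2523 / 4096 * 128   # 78.84375
C1 = 3424 / 4096         # 0.8359375
C2 = 2413 / 4096 * 32    # 18.8515625
C3 = 2392 / 4096 * 32    # 18.6875


def pq_encode(nits: float) -> float:
    """Inverse EOTF: absolute luminance in cd/m^2 -> PQ signal in [0, 1]."""
    y = min(max(nits, 0.0), 10000.0) / 10000.0
    y_m1 = y ** M1
    return ((C1 + C2 * y_m1) / (1.0 + C3 * y_m1)) ** M2


def to_16bit(signal: float) -> int:
    """Hypothetical helper: full-range 16-bit code value (assumption)."""
    return round(min(max(signal, 0.0), 1.0) * 65535)


if __name__ == "__main__":
    for nits in (100, 600, 1000, 10000):
        s = pq_encode(nits)
        print(f"{nits:>6} nits -> PQ signal {s:.4f} -> 16-bit code {to_16bit(s)}")
```

If I have this right, 1000 nits lands at a signal of roughly 0.75, so a 1000-nit master only ever uses about three quarters of the PQ code range.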

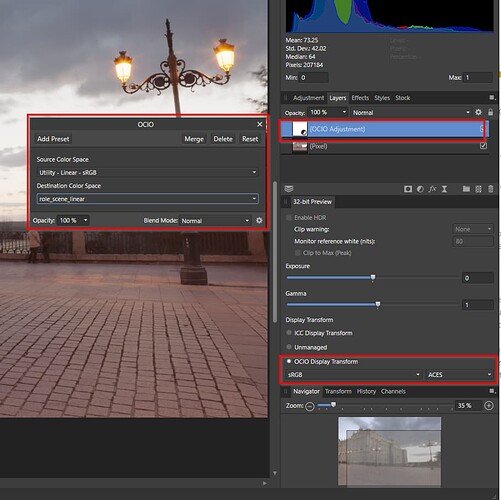

We are shooting on Canon EOS R cameras, and I can convert their .cr3 files to .exr in any color space and transfer curve I like. We are looking at using Affinity Photo as part of our processing pipeline, and I have test frames exported from it as .exr in both ACEScg and Linear sRGB.

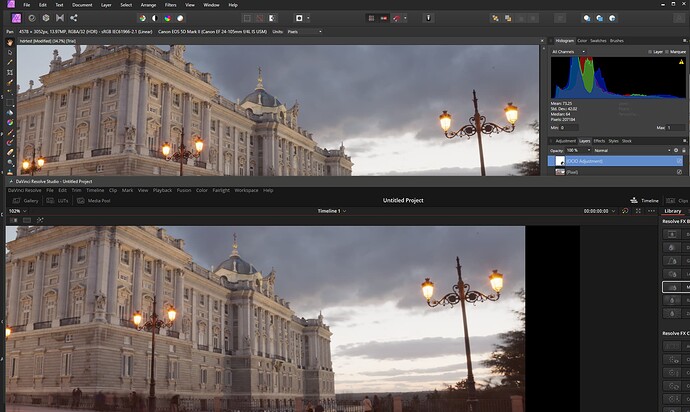

Following Canon’s instructions, I set the monitor to our delivery specs using its internal hardware calibration settings. On a 2013 Mac Pro, following Dolby’s instructions, I created a project in Resolve 17.4 (the non-Studio version at the moment) and set the preferences to enable 10-bit precision in viewers and “Use Mac display color profiles for viewers”. In Project Settings, I set the following:

- Color science: ACEScct

- ACES version: ACES 1.3

- ACES Input Transform: No Input Transform

- ACES Output Transform: P3-D65 ST2084 (1000 nits)

- Enabled “Display HDR on viewers if available”

I imported my test frames as clips, and set their ACES Input Transforms to “ACEScg - CSC” and “sRGB (Linear) - CSC”, then created a timeline for each frame.

When viewing those timelines on the Canon, they look nothing like Affinity’s GUI viewer.

I have an X-Rite i1 Display Pro Plus colorimeter that I got to calibrate our VFX and stage monitors, so I thought I would use it with DisplayCAL to make sure that the Mac Pro was sending calibrated data to the Canon. I used DisplayCAL’s calibration step to adjust the monitor’s white point to exactly D65 and its peak luminance to 1000 nits, then ran the profiler to create a simple single curve + matrix display profile that macOS could use in System Preferences. Then I went back and made a second XYZ LUT profile, from which I could make a 3D LUT to convert from the monitor to P3D65/SMPTE 2084 with a hard clip at 1000 nits, specifically for use in Resolve. I learned all of these steps from the DisplayCAL forums and guides. In Resolve, I turned off “Use Mac display color profiles for viewers”, and in Project Settings I set the Video monitor lookup table to my new 3D LUT from DisplayCAL.

At this point the Resolve UI started glitching unpredictably: it flickered as I moved the mouse around, the viewer shifted color when the application focus changed, and the entire UI would suddenly look like a linear image displayed without any color space conversion. I surmised that I had made an error by profiling the monitor with the Canon’s internal PQ setting still on, and that this had produced wildly wrong values in the single curve + matrix profile that macOS was using. So I went into the Canon’s settings and turned off all of the internal hardware conversion: native color gamut, gamma curve/EOTF off. I reran the DisplayCAL calibration to bring the white point back to D65, but found that turning off the EOTF in the monitor had limited the peak luminance to ~695 nits. I settled for a target of 600 nits and recreated both the single curve macOS profile and the XYZ LUT profile + 3D LUT for Resolve’s Video monitor lookup table.

Still no luck in Resolve - no more glitches, but the color-managed frames still look totally different from the Affinity GUI, so I don’t feel confident that I know what I’m looking at in Resolve. I get that Affinity is probably using the macOS display profile for its viewer and that this could cause some difference, but I’ve confused myself to the point that I can’t tell for sure.

Tomorrow we are supposed to get a Blackmagic Decklink Mini Monitor 4K card with a PCIe expansion box for the Mac Pro, along with an SDI cable so that I can hopefully be sure I am sending a proper 10-bit Full Range RGB signal as Dolby Vision requires.
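For anyone checking my understanding of “Full Range” here, this is a rough Python sketch of how I believe a normalized 0-1 signal maps to 10-bit code values in full range versus legal (video) range. The function name `to_10bit` is my own, and it deliberately ignores the handful of codes at each end that SDI reserves for sync, so treat it as an illustration rather than what the Decklink actually does.

```python
def to_10bit(value: float, full_range: bool = True) -> int:
    """Map a normalized [0, 1] RGB signal to a 10-bit code value.

    full_range=True  -> 0..1023
    full_range=False -> 64..940 (legal/video range)
    Simplification: SDI-reserved codes are not accounted for.
    """
    v = min(max(value, 0.0), 1.0)
    if full_range:
        return round(v * 1023)
    return round(64 + v * 876)


if __name__ == "__main__":
    for v in (0.0, 0.5, 1.0):
        print(f"{v:.2f} -> full {to_10bit(v)}, legal {to_10bit(v, full_range=False)}")
```

A mismatch between what Resolve sends and what the monitor expects would show up as lifted or crushed blacks and wrong peak levels, which is part of what I want to rule out.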

I have three questions:

- When I install the Blackmagic card, what are the correct settings for the Canon monitor’s hardware, and what are the correct settings for Resolve so that I am properly viewing an ACEScg exr source output transformed to P3D65/PQ?

- What am I doing wrong regarding macOS’s Display Profiles and DisplayCAL’s 3D LUTs?

- When everything is set up correctly using ACEScct, why do Resolve’s scopes roll everything off to 1000 nits when I raise the exposure? I thought the Output Transform was only supposed to affect my display. By comparison, DaVinci YRGB Color Management can be set to a Timeline and Output gamma of ST2084 with a Timeline working luminance of HDR 1000, and the scopes then show values rising all the way to 10,000 nits.
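To put numbers on that last question, here is a small Python sketch of the ST 2084 EOTF (PQ signal back to nits), which is how I am reading the scope levels. The constants come from the ST 2084 definition; the idea that the scopes show the PQ-encoded output signal is my assumption.

```python
# SMPTE ST 2084 (PQ) constants
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_decode(signal: float) -> float:
    """EOTF: PQ signal in [0, 1] -> absolute luminance in cd/m^2 (nits)."""
    e = min(max(signal, 0.0), 1.0) ** (1.0 / M2)
    num = max(e - C1, 0.0)
    den = C2 - C3 * e
    return 10000.0 * (num / den) ** (1.0 / M1)


if __name__ == "__main__":
    # 10-bit scope levels: the top of the scale versus where my image tops out
    for code in (520, 769, 1023):
        print(f"10-bit code {code:>4} -> {pq_decode(code / 1023):8.1f} nits")
```

By this math a 10-bit level of about 769 is roughly 1000 nits and 1023 is 10,000 nits, so with the ACES output transform my image seems to stop at about three quarters of the scope, while in YRGB Color Management it climbs to the top.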

Thank you to anyone who can help educate me.