Heya guys!

Thanks for the comments. I ended up resolving this myself, but I'm writing out my explanation here to check with you guys whether it sounds correct! It was written to send to some people at work, so please comment if anything I cover is wrong. It seems I can only upload one image as I'm a new user, so I've put the other examples up as imgur links.

Hey all!

Many thanks to everyone who chimed in here. I've managed to get it all solved and get the most accurate colour rendition I'm likely to get through the ACES pipeline (I hope! If anyone has anything to add or amend, please do). I thought it best to lay everything out on the table here in case this comes up again in the future. I've also done some extra playing around with transforms to clarify a few further things about a correct ACES pipeline, which will hopefully help firm things up for anyone else wondering too.

Issue one - Maya/Redshift OCIO Implementation:

As previously stated, custom OCIO configurations are currently unsupported in Redshift for Maya. However, the assumption so far has been that OCIO should still be enabled in colour management, and that pre-converting input textures is enough to work around the issue. In fact, having it enabled in the settings led to some very strange transforms happening at render time. It seems it was double transforming every 8bit sRGB input file, which produced the completely gamma-incorrect example images at the start of this post. The only one with roughly the correct gamma was the one converted with Output sRGB -> Utility sRGB Texture, which effectively begins by de-linearising the input texture so that, when it's double transformed, one of those transforms is cancelled out. It still isn't in ACES space though, and the number of conflicting transforms has messed up the colour along the way. (This is my assumption of what is happening under the hood in those original images; either way, it needs to be avoided.)

https://i.imgur.com/MkfeG4N.png
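To put rough numbers on the double-transform hypothesis above, here is a minimal Python sketch. It only models the standard sRGB transfer curve applied once, twice, and after a pre-applied encode; it is not a reproduction of what Redshift actually does internally, and the function names are my own.

```python
def srgb_decode(v):
    """sRGB-encoded value in [0, 1] -> linear light (standard piecewise sRGB curve)."""
    return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4


def srgb_encode(v):
    """Linear light in [0, 1] -> sRGB-encoded value (inverse of srgb_decode)."""
    return v * 12.92 if v <= 0.0031308 else 1.055 * v ** (1 / 2.4) - 0.055


mid_grey_code = 0.5  # an sRGB-encoded pixel value, i.e. roughly 128/255

# Linearised once, as intended: roughly 0.214.
once = srgb_decode(mid_grey_code)

# Linearised twice (the suspected bug): much darker than it should be.
twice = srgb_decode(once)

# De-linearising the texture first means one of the two decodes is cancelled,
# so the gamma ends up correct again (assumed model of the
# "Output sRGB -> Utility sRGB Texture" case).
cancelled = srgb_decode(srgb_decode(srgb_encode(mid_grey_code)))

print(f"decoded once:  {once:.4f}")
print(f"decoded twice: {twice:.4f}")
print(f"encode first, then decoded twice: {cancelled:.4f}")
```

The third value matching the first is consistent with that one image having roughly the right gamma, while still never having been moved to the ACES primaries.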

By disabling OCIO in colour management we get the default Maya colour spaces back, which already support ACEScg. (This is where some people obviously go wrong: they assume the ACES OCIO config NEEDS to be enabled in Maya, when Maya already supports some of it by default.) Sure, it'd be great to be able to do the transforms directly in Maya rather than pre-converting our textures, but the custom OCIO config isn't needed to render in ACES space, and until Redshift supports it natively it should be avoided like the plague so that we can use the default colour space options on the Maya file read node.

NOTE: Arnold works perfectly with custom OCIO configurations, so OCIO should only be disabled for Redshift.

Correct ACES Transforms:

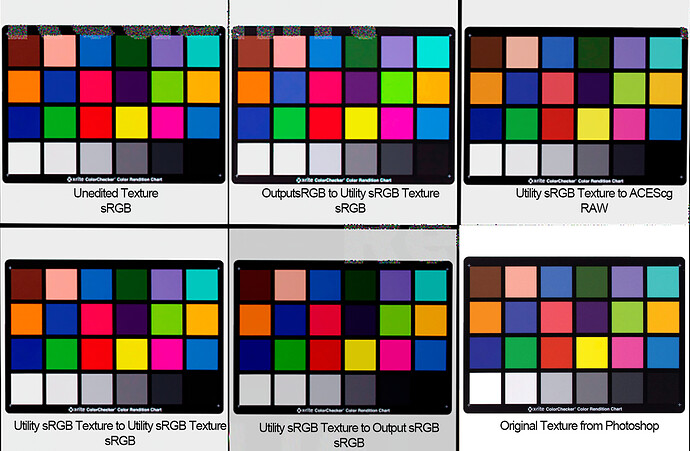

Now with that specific Maya issue solved, the next issue is colour accuracy. We can now use the default Maya colour space transforms for input textures, but they won't convert the textures into ACEScg space. All input files need to be transformed to ACES primaries for accurate colour rendition. There has been a lot of conflicting information thrown around about which transform to use for 8bit textures, so I tested each one mentioned online to make sure I didn't miss any.

As can be seen, each transform here gives vastly different results, and not pre-converting at all (top left) has led to drastically oversaturated colours. These are all being read in as 8bit JPGs, with the Maya file read node's colour space annotated below each one. The correct transform here is Utility sRGB Texture to ACEScg. Every image has some clipped highlights and shifted colours, as you'd expect when transforming the sRGB gamut into a space as wide as ACEScg, but accounting for that, this transform is the closest to our original starting point. The other examples are mostly being read in with the correct gamma (except Utility sRGB Texture to Output sRGB, due to the double transform), but the conversion hasn't actually taken them into ACES space. Even 8bit textures need these colour transforms applied to them, but this transform also linearises our input texture. As a result our JPG now looks like so:

https://i.imgur.com/aiA9RlZ.png
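For anyone who wants to see what the Utility sRGB Texture to ACEScg conversion amounts to numerically, here is a rough Python sketch: linearise with the sRGB curve, then apply a 3x3 matrix from linear sRGB primaries to ACEScg (AP1) primaries. The matrix values are the commonly quoted, rounded Bradford-adapted ones and should be treated as approximate; the function names are my own, and the OCIO config remains the reference for the real transform.

```python
# Approximate linear sRGB (D65) -> ACEScg (AP1, ~D60) matrix, rounded to 6 dp.
# Assumption: these are the commonly quoted Bradford-adapted values, not
# numbers taken from this thread.
SRGB_TO_ACESCG = (
    (0.613097, 0.339523, 0.047379),
    (0.070194, 0.916354, 0.013452),
    (0.020616, 0.109570, 0.869815),
)


def srgb_decode(v):
    """sRGB-encoded value in [0, 1] -> linear light (standard piecewise sRGB curve)."""
    return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4


def srgb_texture_to_acescg(rgb):
    """Step 1: linearise each channel. Step 2: change primaries to ACEScg."""
    linear = [srgb_decode(c) for c in rgb]
    return tuple(
        sum(m * c for m, c in zip(row, linear)) for row in SRGB_TO_ACESCG
    )


white = srgb_texture_to_acescg((1.0, 1.0, 1.0))  # stays (almost exactly) white
red = srgb_texture_to_acescg((1.0, 0.0, 0.0))    # pure sRGB red

print("white in ACEScg:", tuple(round(c, 4) for c in white))
print("sRGB red in ACEScg:", tuple(round(c, 4) for c in red))
```

Pure sRGB red comes out with noticeable green and blue components in ACEScg, because the ACEScg primaries are more saturated than the sRGB ones. That fits the oversaturated top-left example: if the values are not converted, the renderer treats sRGB red as if it were the much more saturated ACEScg red.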

Obviously, packing a linear file into an 8bit JPG is not advisable, and any input texture going through this process should be taken to 16bit, then converted and saved out as a TIFF or similar to retain quality. For my example here with the JPGs, though, I've actually seen little difference, and converting to ACEScg and back again in Photoshop didn't introduce as much of an issue as I thought it would. This Utility sRGB Texture to ACEScg transform linearises our texture and also applies the transform to the ACES primaries, so when the file is read into Maya as RAW no further conversion is needed and it's ready for use.

Now, there's another route: converting our texture from Linear sRGB Texture to ACEScg, which effectively only applies the ACES primaries transform, as it assumes the input texture is already linearised. This way we don't have a linear file packaged into an 8bit shell and can have Maya do the linearisation at render time, but applying this transform to a non-linear file causes issues with the colour, as you might expect. This approach should be avoided, even if your input texture still looks good in Photoshop/Nuke and is technically in ACES space: reordering these operations doesn't play well.

/i.imgur.com/DuPqoK4.gif

(Can only post two hyperlinks, so please remove the / and use URL to see image)
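Here is a small Python sketch of why the order of operations matters. It compares "linearise, then change primaries" against "change primaries on the still-encoded values, then linearise later". This is my own simplified model of the two routes, using the standard sRGB curve and an approximate, rounded linear sRGB to ACEScg matrix, so treat the exact numbers as illustrative only.

```python
# Approximate linear sRGB -> ACEScg matrix (rounded; an assumption, not taken
# from this thread).
SRGB_TO_ACESCG = (
    (0.613097, 0.339523, 0.047379),
    (0.070194, 0.916354, 0.013452),
    (0.020616, 0.109570, 0.869815),
)


def srgb_decode(v):
    """sRGB-encoded value in [0, 1] -> linear light (standard piecewise sRGB curve)."""
    return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4


def apply_matrix(rgb):
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in SRGB_TO_ACESCG)


def decode_then_matrix(rgb):
    """Intended order: linearise first, then move to ACEScg primaries."""
    return apply_matrix(tuple(srgb_decode(c) for c in rgb))


def matrix_then_decode(rgb):
    """Reordered route: matrix on encoded values, linearisation left for later."""
    return tuple(srgb_decode(c) for c in apply_matrix(rgb))


def max_channel_diff(rgb):
    a, b = decode_then_matrix(rgb), matrix_then_decode(rgb)
    return max(abs(x - y) for x, y in zip(a, b))


grey_diff = max_channel_diff((0.5, 0.5, 0.5))       # neutral patch
saturated_diff = max_channel_diff((0.8, 0.2, 0.1))  # saturated orange-red patch

print(f"neutral grey difference:     {grey_diff:.6f}")
print(f"saturated colour difference: {saturated_diff:.6f}")
```

Neutral greys survive the reordering almost untouched, which may be why a texture can still look fine at a glance, while saturated colours shift noticeably because the matrix mixes channels and the sRGB curve is not linear.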

Viewing in Rec.709:

Now, the last issue for colour rendition is that the Utility sRGB Texture to ACEScg texture seems to have some extra contrast added on the low end, something I was fighting against for a good couple of hours. If I switch my render view to a Rec.709(ACES) transform rather than sRGB(ACES), though, things match up almost dead on. That's as accurate as I assume I'll be able to get, given the small shifts in colour that are expected when moving colours into ACES space. GIF comparison below (with white and black point adjusted for comparison):

/i.imgur.com/WBj7FH0.gif

(Can only post two hyperlinks, so please remove the / and use URL to see image)

Everything that I feed through this pipeline now seems to have near dead-on accuracy (none of my maps are as saturated as these Macbeth charts), and I'm not having to adjust my input textures as much to compensate for the colour space/gamma issues. I hope this is in some way informative, and if someone with more knowledge sees that I've misunderstood or miscommunicated something, please message me ASAP!