Hi @daniele,

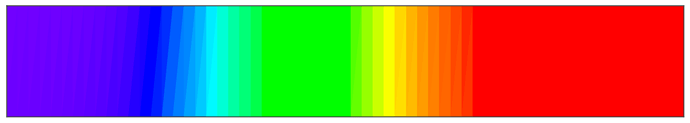

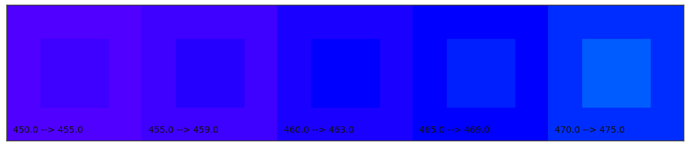

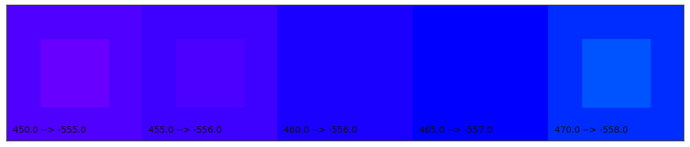

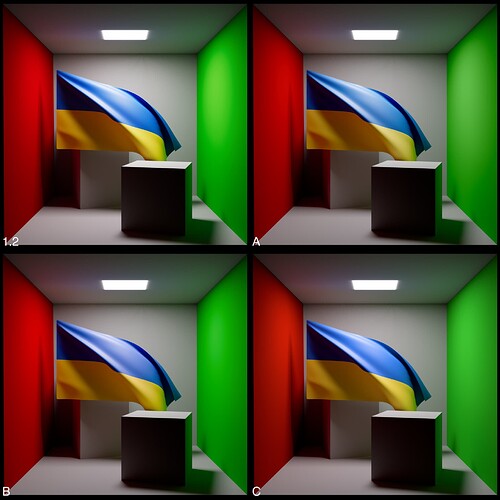

In Nuke, if you Control-click on the color, it will open up additional controls beyond the RGB sliders. What I am changing on each color swatch is the hue, using the HSV sliders. This value is indicated in the text over each swatch.

I have seen the values.

So you switched the colour widget in the UI to HSV mode and went in even steps there.

I think you can’t really trust any legacy HSV slider. This is just a basic, generic RGB-to-HSV conversion.
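For reference, the generic conversion is the hexcone model. The sketch below is not Nuke's or Maya's actual widget code (I have not checked either); it only shows that H, S and V are built from the per-channel max and min of whatever RGB space the values happen to be in, with no perceptual model involved.

```python
import colorsys


def rgb_to_hsv(r, g, b):
    """Generic hexcone RGB -> HSV, with all components in [0, 1].

    Only the channel maximum and minimum are used, so the result depends
    entirely on the RGB encoding it is fed (Rec.709, AP1, ...).
    """
    v = max(r, g, b)              # value: the largest channel
    c = v - min(r, g, b)          # chroma in RGB units (max - min)
    s = c / v if v > 0 else 0.0   # saturation relative to the brightest channel
    if c == 0:
        h = 0.0                   # achromatic: hue is undefined, use 0
    elif v == r:
        h = ((g - b) / c) % 6.0
    elif v == g:
        h = (b - r) / c + 2.0
    else:
        h = (r - g) / c + 4.0
    return h / 6.0, s, v


# Pure blue and pure yellow both report S = 1 and V = 1.
print(rgb_to_hsv(0.0, 0.0, 1.0), rgb_to_hsv(1.0, 1.0, 0.0))
# Sanity check against the standard library's implementation of the same model.
print(rgb_to_hsv(0.2, 0.5, 0.9), colorsys.rgb_to_hsv(0.2, 0.5, 0.9))
```

Nothing in it knows that a "saturation 1" blue and a "saturation 1" yellow look wildly different in brightness.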

I would not try to draw any visual conclusion from that series, I am afraid.

My intention was to see what it was like to pick colors from a color picker under each DRT when doing CG lookdev (Mari, Maya, etc.). I’m sure you are correct about them being fishy, and I have my own gripes with color pickers as an artist. However, since this is the only tool we currently have for picking colors, I’d think it is representative of that particular task. That’s all it was intended to be.

Testing colour picking is a useful exercise.

But you wrote:

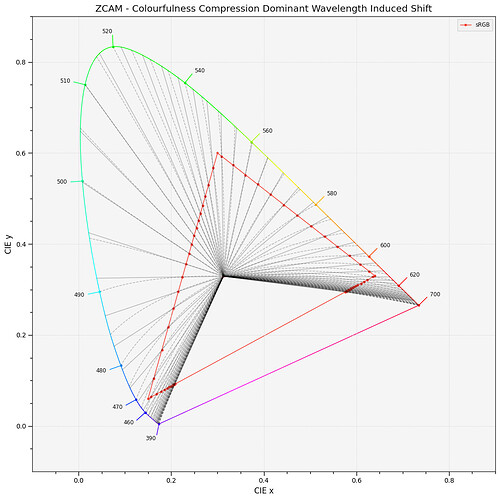

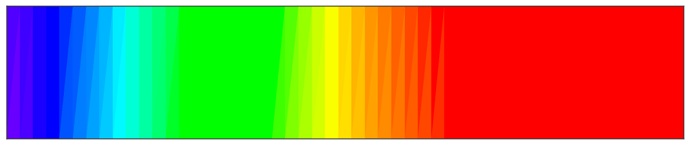

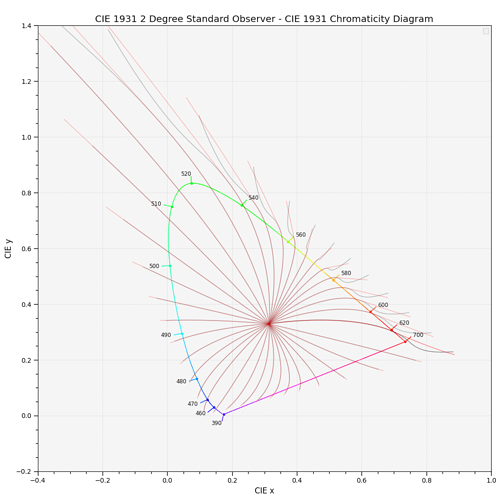

I find that there is no consistency in saturation in the original image to begin with. Now I understand why: the hue chips are surfing around the AP1 gamut hull, which is not constant in any perceptual sense.
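To make the "surfing the hull" point concrete, here is a small sketch. It sweeps the hue at S = V = 1 with Python's standard `colorsys` module, treats the results as ACEScg (AP1) values, and computes relative luminance from the Y row of the AP1-to-XYZ matrix. Every chip has one channel at 1 and one at 0, i.e. it sits on the AP1 gamut hull, yet the luminance varies by more than an order of magnitude between blue and yellow.

```python
import colorsys

# Y row of the ACES AP1 -> CIE XYZ matrix (relative luminance of ACEScg RGB).
AP1_TO_Y = (0.2722287168, 0.6740817658, 0.0536895174)


def hue_sweep(steps=12, s=1.0, v=1.0):
    """Evenly spaced hues at fixed HSV saturation and value."""
    return [colorsys.hsv_to_rgb(i / steps, s, v) for i in range(steps)]


def ap1_luminance(rgb):
    """Relative luminance of an ACEScg (AP1, linear) triplet."""
    return sum(w * c for w, c in zip(AP1_TO_Y, rgb))


sweep = hue_sweep()
step_deg = 360 // len(sweep)
for i, rgb in enumerate(sweep):
    rounded = tuple(round(c, 3) for c in rgb)
    print(f"hue {i * step_deg:3d} deg  rgb={rounded}  Y={ap1_luminance(rgb):.3f}")
```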

P.S.

Also note how the red colours look very fluorescent in OpenDRT.

I find it interesting that ZCAM seems to compress brilliance much more.

So, puzzling this together… In Maya I commonly pick colors in HSV. What I was noticing is that under ZCAM in ACEScg space, if I moved the hue slider (with saturation at 1 and value at 1), the saturation appeared to drop as I moved the slider between green and blue. …Am I understanding correctly that this is expected as I move that slider, “surfing” along the AP1 gamut?

I had not seen that happening under ACES 1.2 or OpenDRT so I was surprised by it. Thanks for helping me understand what was going on!

Yes, I have definitely noticed this. I wonder if that “compressed brilliance” may be a plus when picking albedo colors for reflective materials? Artists need to learn to deliberately choose darker and less saturated colors (as opposed to picking neon colors not found in nature), and perhaps this could help with that. I will need to do more experiments.

709 and AP1 are not perceptual spaces.

On a video monitor you can produce a much more saturated red than cyan.

So the HSV slider telling you that the saturation is 1.0 just means it is the most saturated colour you can achieve in that space for a specific hue, not that it has the same saturation as another hue with saturation at 1.0.
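A quick way to see this with numbers: convert Rec.709 red (1, 0, 0) and cyan (0, 1, 1), both "saturation 1.0" in HSV, to CIELAB and compare their chroma C*ab. CIELAB is itself only a rough perceptual approximation, so treat this as an illustration rather than a measurement; the matrix and D65 white below are the standard linear Rec.709/sRGB values. Red comes out with roughly twice the chroma of cyan.

```python
import math

# Linear Rec.709 (D65) -> CIE XYZ, and the D65 reference white.
REC709_TO_XYZ = (
    (0.4124564, 0.3575761, 0.1804375),
    (0.2126729, 0.7151522, 0.0721750),
    (0.0193339, 0.1191920, 0.9503041),
)
D65_WHITE = (0.95047, 1.0, 1.08883)


def _lab_f(t):
    """CIELAB companding function."""
    delta = 6.0 / 29.0
    return t ** (1.0 / 3.0) if t > delta ** 3 else t / (3.0 * delta ** 2) + 4.0 / 29.0


def rec709_to_lab(rgb):
    """Linear Rec.709 RGB -> CIELAB (L*, a*, b*) relative to D65."""
    xyz = [sum(m * c for m, c in zip(row, rgb)) for row in REC709_TO_XYZ]
    fx, fy, fz = (_lab_f(v / w) for v, w in zip(xyz, D65_WHITE))
    return 116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz)


def chroma(rgb):
    """CIELAB chroma C*ab = sqrt(a*^2 + b*^2)."""
    _, a, b = rec709_to_lab(rgb)
    return math.hypot(a, b)


# Both of these are "HSV saturation 1.0" in Rec.709.
red, cyan = (1.0, 0.0, 0.0), (0.0, 1.0, 1.0)
print(f"C*ab red  = {chroma(red):.1f}")
print(f"C*ab cyan = {chroma(cyan):.1f}")
```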

This is a common misinterpretation.

The same is true for the other scales, including implicit scales like brilliance.

How the DRT is rendering those colours is another topic.

I think you need to render the colour picker widget through the DRT to the UI colour space; otherwise picking is not intuitive.
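A minimal sketch of that idea, under loud assumptions: `hypothetical_drt` is a placeholder per-channel curve standing in for whichever DRT is under test (it is not ZCAM DRT, OpenDRT or the ACES output transform), the display encoding is a plain 2.2 power, and the conversion from ACEScg primaries to the UI display primaries is omitted. The point is only the separation of the two values: the artist picks and stores the scene-linear value, while the swatch they look at is that value rendered through the DRT.

```python
import colorsys


def hypothetical_drt(rgb):
    """Placeholder display rendering: a per-channel x / (1 + x) curve.

    Stands in for the real DRT under test; not any of the candidates discussed here.
    """
    return tuple(c / (1.0 + c) if c > 0.0 else 0.0 for c in rgb)


def encode_display(rgb, gamma=2.2):
    """Clamp to [0, 1] and apply a simple inverse power-law display encoding."""
    return tuple(min(max(c, 0.0), 1.0) ** (1.0 / gamma) for c in rgb)


def picker_swatch(h, s, v):
    """Return (stored scene-linear value, value to draw in the swatch)."""
    scene_rgb = colorsys.hsv_to_rgb(h, s, v)              # written to the material
    shown = encode_display(hypothetical_drt(scene_rgb))   # drawn in the UI
    return scene_rgb, shown


stored, shown = picker_swatch(2.0 / 3.0, 1.0, 1.0)
print("stored:", stored, "shown:", shown)
```

Swapping `hypothetical_drt` for the actual transform is what would make the swatch match what the artist sees in the viewer.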

But I would not draw correlated conclusions from that test image. That is all I want to mention.

It’s not super relevant, but some of you might find it interesting/amusing.

Kodak Ektachrome E100, scanned on a Fuji Frontier SP500.

I’ve taken a pass at creating a Nuke/BlinkScript implementation of the Hellwig-Fairchild 2022 CAM.

It currently works in both directions, but only outputs (and receives) the JMh components. I’m starting there, as those are the ones that are currently used by ZCAM DRT.

My intention is to try to bolt what I have here into ZCAM DRT in a way that allows you to just flip between the two models. But we will have to see how that works in practice, as there is an intermediate Izazbz space that ZCAM uses that doesn’t seem to have a direct analog in Hellwig2022.
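ZCAM derives its hue angle from the Cartesian (az, bz) pair of Izazbz, whereas the Hellwig2022 node only exposes the polar J, M, h correlates. One possible stand-in, sketched here as an assumption rather than something either model defines, is to rebuild an opponent-like Cartesian pair from M and h, using the same relation CIECAM02 uses for its colourfulness coordinates (a_M = M cos h, b_M = M sin h):

```python
import math


def jmh_to_jab(J, M, h_deg):
    """Polar (J, M, h) -> Cartesian (J, a, b), with h in degrees."""
    h = math.radians(h_deg)
    return J, M * math.cos(h), M * math.sin(h)


def jab_to_jmh(J, a, b):
    """Cartesian (J, a, b) -> polar (J, M, h), with h wrapped to [0, 360)."""
    M = math.hypot(a, b)
    h = math.degrees(math.atan2(b, a)) % 360.0
    return J, M, h


print(jab_to_jmh(*jmh_to_jab(50.0, 30.0, 250.0)))
```

Whether such a pair behaves like Izazbz inside the DRT's gamut mapping is exactly the open question above; the conversion itself is just polar/Cartesian bookkeeping.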

It’s on github if anyone wants to take a poke at it:

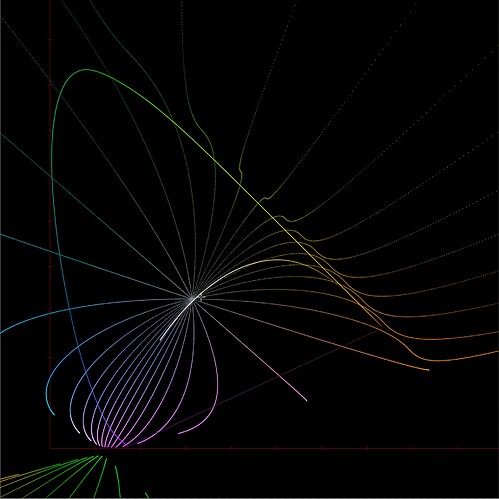

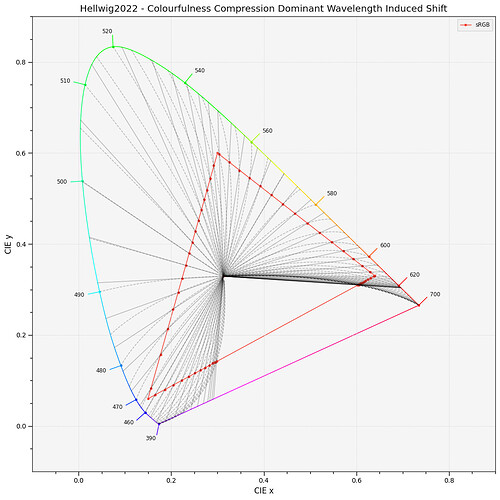

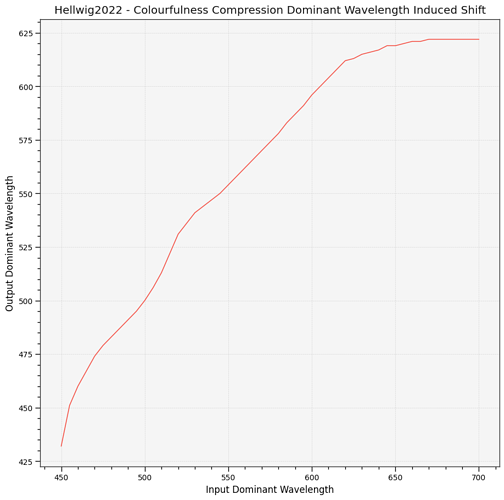

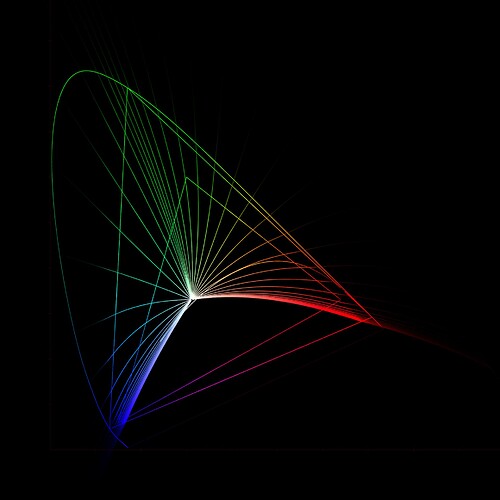

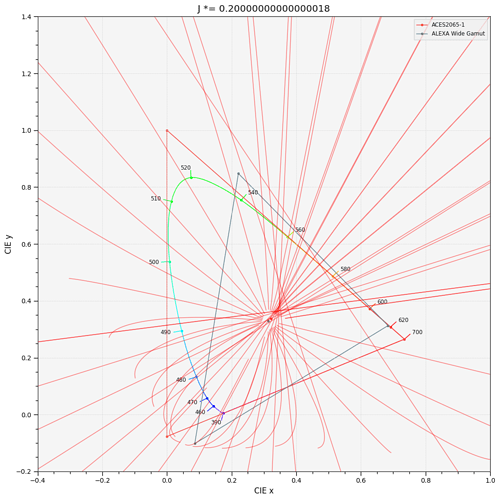

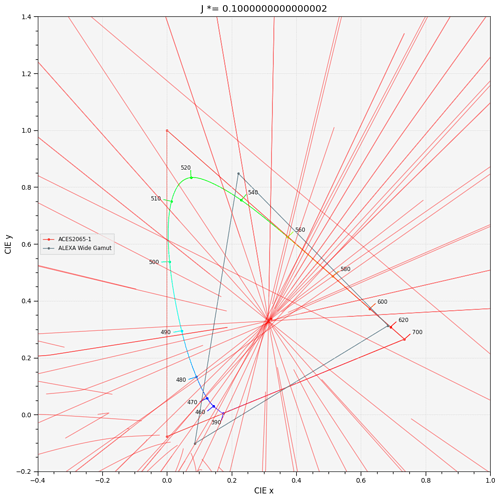

Whilst I haven’t been able to bolt it into a complete DRT yet, I have been able to make this plot.

J = 5.0

M = 0 → 100

h = 0 → 360 in 32 bands
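The sample set for that plot can be generated along these lines. This is a sketch: the number of M steps is my own choice (the post only gives the 0 → 100 range), and I have assumed the 32 hue bands are evenly spaced starting at 0°, so 360° is not repeated. Converting each sample to Cartesian (M cos h, M sin h) gives the spokes of the hue-band plot; the inverse model itself stays in the Nuke/BlinkScript node.

```python
import math


def jmh_plot_samples(J=5.0, M_max=100.0, M_steps=21, hue_bands=32):
    """Constant-J samples: M from 0 to M_max along each of `hue_bands` evenly spaced hues."""
    samples = []
    for band in range(hue_bands):
        h = 360.0 * band / hue_bands          # 0, 11.25, ..., 348.75 degrees
        for k in range(M_steps):
            M = M_max * k / (M_steps - 1)      # 0 .. M_max inclusive
            samples.append((J, M, h))
    return samples


def to_plot_xy(M, h_deg):
    """Polar (M, h) -> Cartesian coordinates for a hue/colourfulness plot."""
    h = math.radians(h_deg)
    return M * math.cos(h), M * math.sin(h)


samples = jmh_plot_samples()
points = [to_plot_xy(M, h) for _, M, h in samples]
print(len(samples), "samples")
```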

My initial impression is that this isn’t going to make things any more controllable around blue, with a collapse point very similar to ZCAM (maybe even closer), and a lot more strangeness around yellow beyond the spectral locus.

All of this looks like super reasonable behaviour for real-world plausible colours inside the locus, but there is weirdness outside of it. That is totally reasonable for a model whose goal is to make predictions about the appearance of real colours to real observers, but less than ideal in our case, where we’re trying to find plausible new homes for nonsense colours.

I’m seeing interesting stuff too… It could be an issue with our implementation, or some of my code for the diagrams being off; I lazily search’n’replaced!

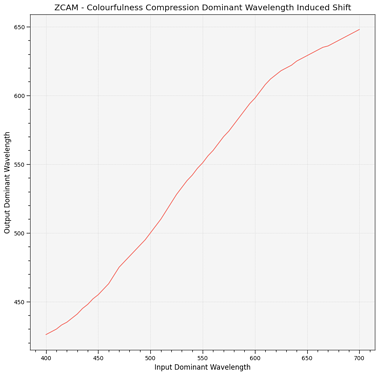

ZCAM

Hellwig and Fairchild (2022)

Cheers,

Thomas

Hello,

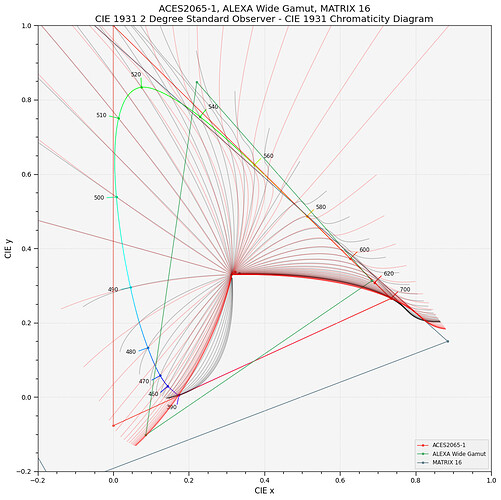

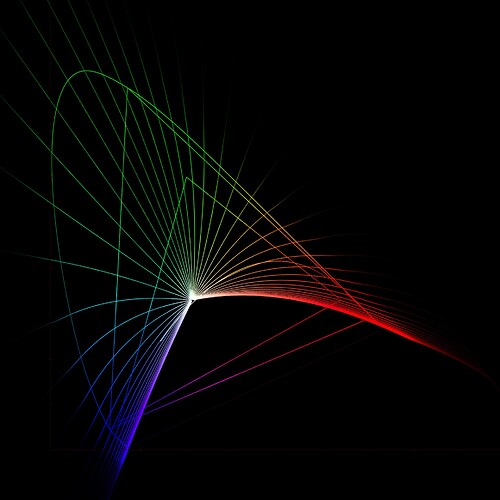

I did some massaging of the MATRIX16 to move the focal point around, like @alexfry did with ZCAM earlier:

I’m tempted to blend between the two as a function of distance to the whitepoint, and possibly of direction, e.g. using the massaged version more toward blue.
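A hypothetical sketch of such a blend (a linear interpolation, or lerp, of the two matrices), under my own assumptions. The weight rises smoothly with distance from the white point (measured in whatever normalized space you pick) and is scaled by how close the hue is to a "blue" target angle. `M16` is the standard CAM16 cone matrix, presumably the MATRIX16 referred to above; the massaged matrix, `target_hue_deg`, `max_distance` and `hue_power` are placeholders to be replaced with the tuned values from the notebook. Because the transform is linear, blending the matrices gives the same result as blending the two transformed outputs for a given pixel.

```python
import math

# CAM16 cone-response matrix M16 (XYZ -> cone-like RGB).
M16 = (
    (0.401288, 0.650173, -0.051461),
    (-0.250268, 1.204414, 0.045854),
    (-0.002079, 0.048952, 0.953127),
)


def smoothstep(edge0, edge1, x):
    """Cubic Hermite ramp from 0 (x <= edge0) to 1 (x >= edge1)."""
    t = min(max((x - edge0) / (edge1 - edge0), 0.0), 1.0)
    return t * t * (3.0 - 2.0 * t)


def blend_weight(distance, hue_deg, max_distance=1.0, target_hue_deg=250.0, hue_power=2.0):
    """Hypothetical weight: 0 at the white point, growing with distance, peaking at target_hue_deg."""
    radial = smoothstep(0.0, max_distance, distance)
    directional = max(0.0, math.cos(math.radians(hue_deg - target_hue_deg))) ** hue_power
    return radial * directional


def lerp_matrix(m_a, m_b, t):
    """Element-wise linear interpolation between two 3x3 matrices."""
    return tuple(
        tuple((1.0 - t) * a + t * b for a, b in zip(row_a, row_b))
        for row_a, row_b in zip(m_a, m_b)
    )


def apply_matrix(m, v):
    """3x3 matrix times 3-vector."""
    return tuple(sum(mij * vj for mij, vj in zip(row, v)) for row in m)


# Placeholder: replace with the massaged matrix from the notebook.
M16_MASSAGED = M16
w = blend_weight(distance=0.8, hue_deg=240.0)
print(w, apply_matrix(lerp_matrix(M16, M16_MASSAGED, w), (0.2, 0.1, 0.9)))
```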

Notebook is here: Google Colab

Cheers,

Thomas

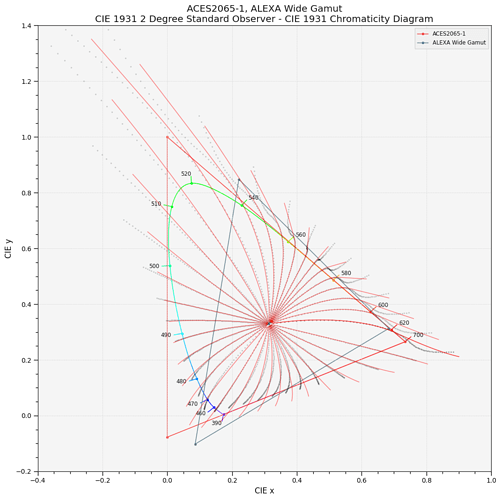

@alexfry is there an “official” Nuke version of the ZCAM DRT with the Smith-Siragusano-MM tonescale and the modified matrix? Matthias’s repo for the DRT still has the v11 version. The bake script in the ODT candidates repo does have a v12 version, but there’s also an additional matrix before the DRT, and quickly putting a hue sweep through the v12 version produces pretty wonky results (when plotting display linear chromaticities without gamut mapping and clamping)… I’m not sure exactly how the v12 in that script is supposed to be used…

Example of that wonkiness with v11 vs v12 with the “bodged” matrix. Input is the “dominant wavelengths” and output is display linear with tonescale but without gamut mapping and clamping.

v11:

v12:

For wider user testing, might it not be better to incorporate gamut compression instead? It’s quite easy to adjust the blue (and green too) with gamut compression, as has been mentioned earlier. For example:

v11 with parametric GC:

I’m not sure what version of GC I’m using but the distance limits were cyan 0.3, magenta 0.5, yellow 0.3.
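For anyone wanting to reproduce this outside Nuke, here is a minimal sketch of a parametric gamut compression in the style of the ACES Reference Gamut Compression. The assumptions are mine: I read the quoted distance limits as distances beyond the gamut boundary, i.e. limits of 1.3 / 1.5 / 1.3 on the normalized distance; the thresholds (0.815, 0.803, 0.880) and power of 1.2 are the ACES 1.3 RGC defaults, not values from the test above. Cyan, magenta and yellow correspond to the distances computed from the R, G and B channels respectively.

```python
def compress_distance(d, threshold, limit, power=1.2):
    """Parametric power compression of a normalized distance.

    Distances below `threshold` are untouched; a distance equal to `limit`
    is mapped to exactly 1.0, i.e. onto the gamut boundary.
    """
    if d < threshold:
        return d
    scale = (limit - threshold) / (
        ((1.0 - threshold) / (limit - threshold)) ** (-power) - 1.0
    ) ** (1.0 / power)
    x = (d - threshold) / scale
    return threshold + (d - threshold) / (1.0 + x ** power) ** (1.0 / power)


def gamut_compress(rgb, thresholds=(0.815, 0.803, 0.880), limits=(1.3, 1.5, 1.3), power=1.2):
    """Compress RGB toward the achromatic value (max channel), per channel.

    thresholds/limits are ordered (cyan, magenta, yellow), i.e. for the
    distances computed from the R, G and B channels.
    """
    ach = max(rgb)
    if ach == 0.0:
        return tuple(rgb)
    out = []
    for c, thr, lim in zip(rgb, thresholds, limits):
        d = (ach - c) / abs(ach)        # 0 on the neutral axis, > 1 outside the gamut
        d_c = compress_distance(d, thr, lim, power)
        out.append(ach - d_c * abs(ach))
    return tuple(out)


print(gamut_compress((1.0, 0.5, 0.5)), gamut_compress((1.0, 0.5, -0.2)))
```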

v11 with GC:

v11 without GC:

v12 (blue is still skewing to cyan):

Ah, there was an extra clamp inside the v12 node that messed up the output. With that removed v12 vs v11:

Hi,

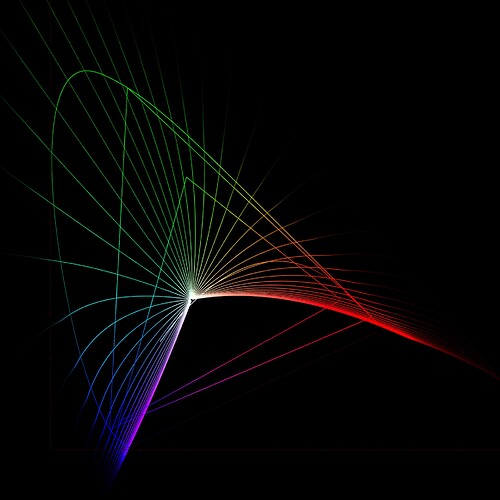

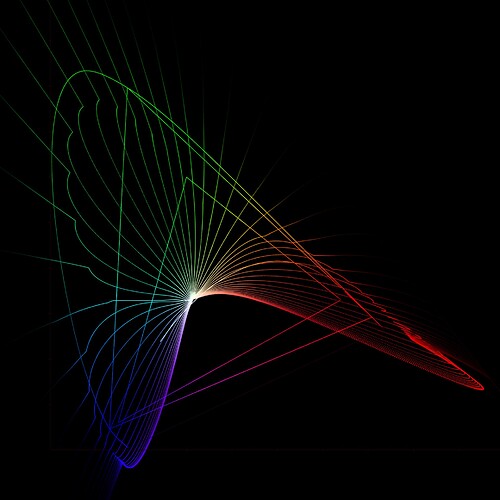

I added the linear extension as discussed last week (and following Luke’s maths):
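The exact form of the extension isn't spelled out here, so the following is only a generic sketch of the idea, under my own assumptions: below a threshold, the post-adaptation compression is replaced by its tangent line at that threshold, so the curve stays continuous and monotonic and also accepts zero and negative inputs. The curve used, 400·x^0.42 / (x^0.42 + 27.13) with x = F_L·R / 100, is the CAM16-style form without the +0.1 offset; the threshold of 0.26 is an arbitrary placeholder, and none of this is meant to reproduce Luke's maths.

```python
def compress(x):
    """CAM16-style post-adaptation compression without the +0.1 offset (x >= 0)."""
    u = x ** 0.42
    return 400.0 * u / (u + 27.13)


def compress_slope(x):
    """Derivative of compress() for x > 0."""
    u = x ** 0.42
    return 400.0 * 0.42 * 27.13 * x ** -0.58 / (u + 27.13) ** 2


def compress_linear_ext(x, threshold=0.26):
    """compress() at or above `threshold`; its tangent line at `threshold` below it."""
    if x >= threshold:
        return compress(x)
    return compress(threshold) + compress_slope(threshold) * (x - threshold)


for x in (-1.0, 0.0, 0.1, 0.26, 1.0, 10.0):
    print(x, compress_linear_ext(x))
```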

Here it is with some fudge primaries to move the blue focal point:

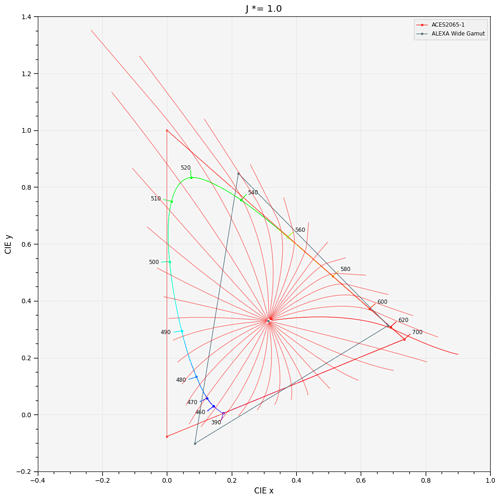

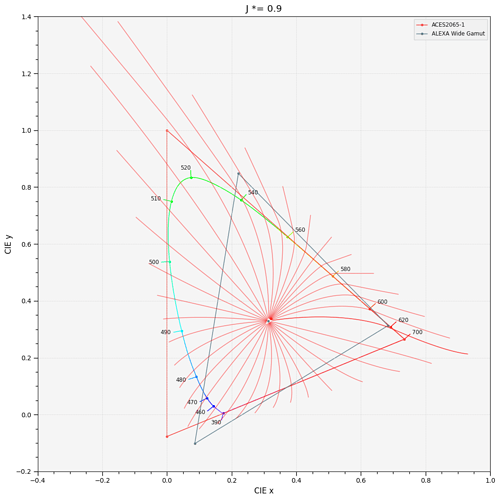

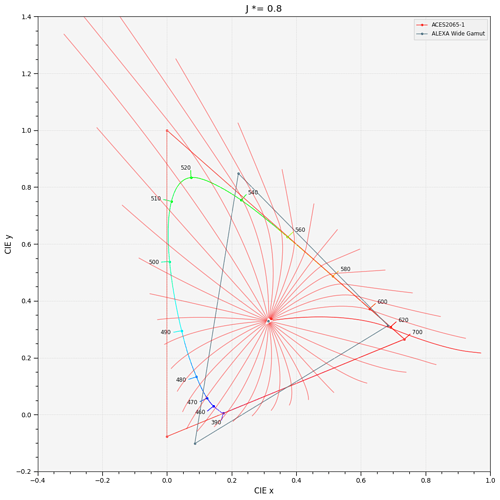

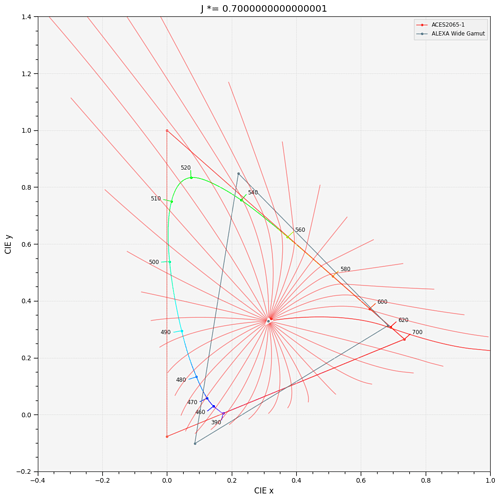

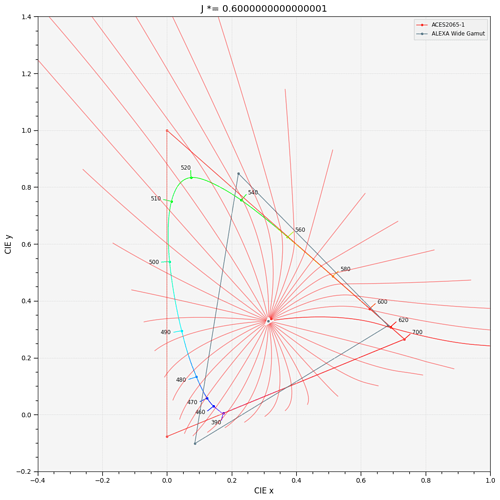

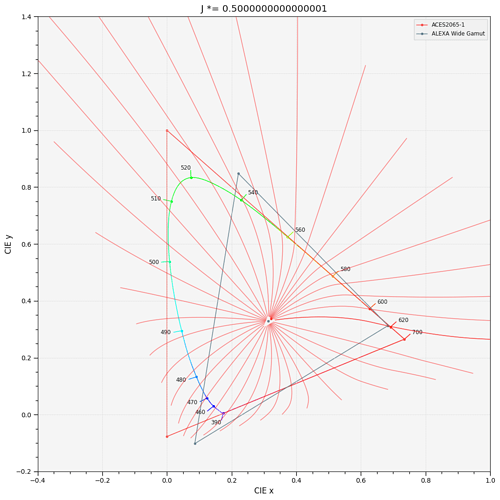

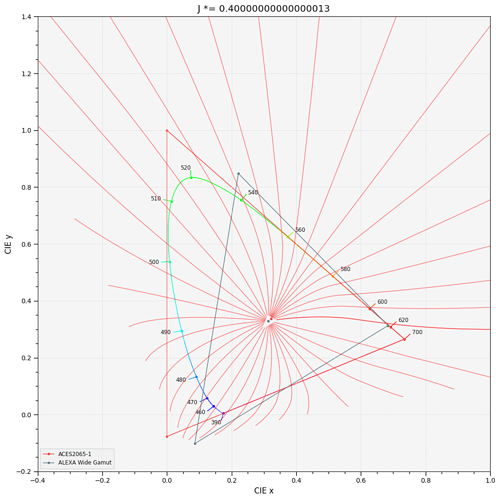

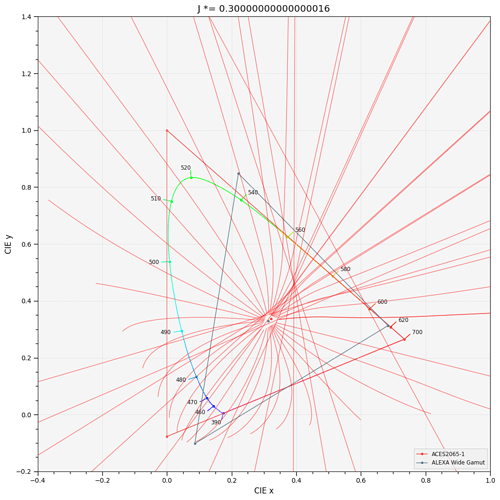

And a few plots while multiplying the J correlate:

Cheers,

Thomas

One for @LarsB