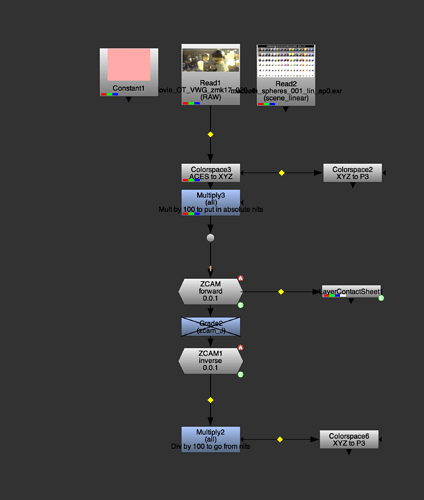

After Alex Forsythe’s suggestion about using ZCAM in last week’s meeting, I thought I’d better try to get my head around it. Generally I find the best way to do that is to try to make it work in Nuke, so I’ve given that a crack.

I’m not going to pretend I fully understand what’s going on here, but hopefully there is enough for people to play around with, improve on, or integrate into something larger.

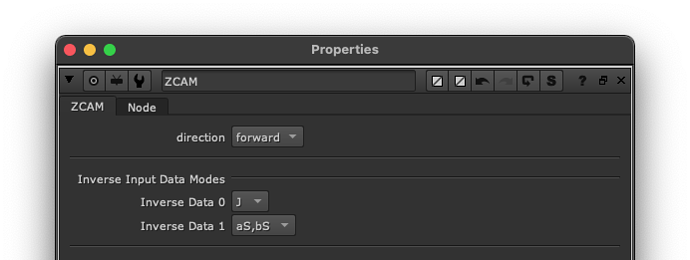

The node has two modes: forward and inverse.

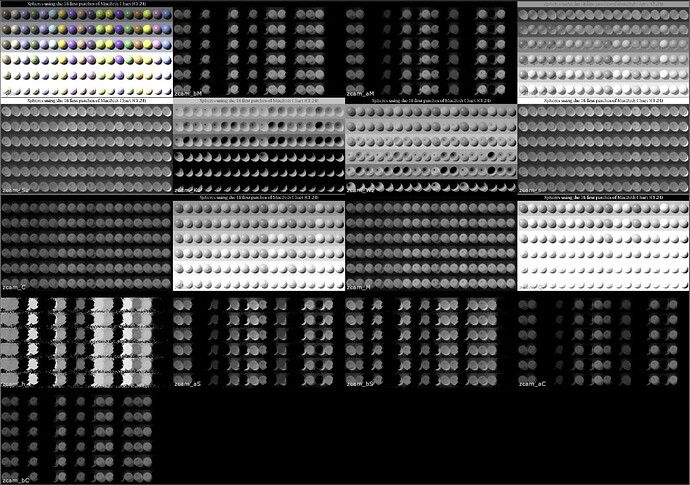

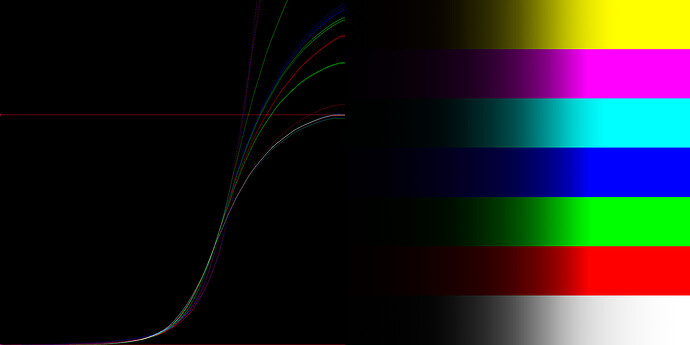

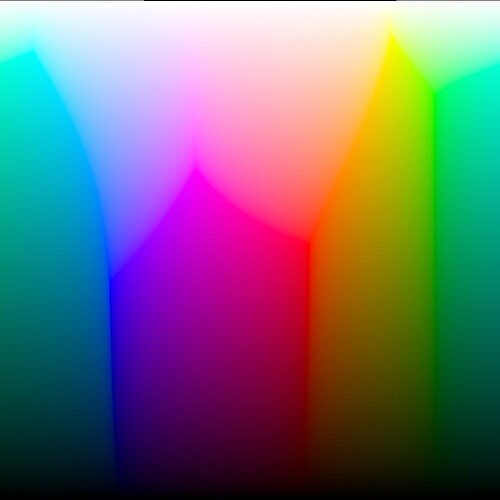

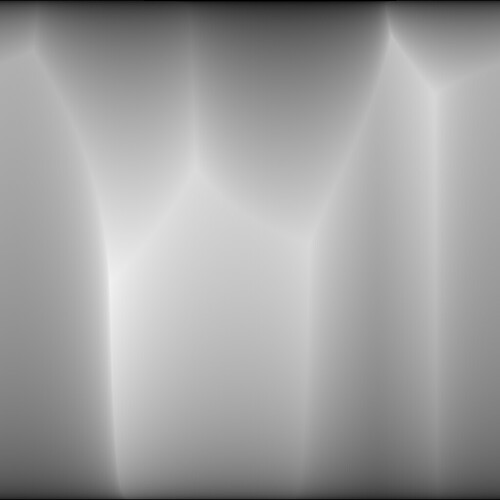

Forward dumps as many of the ZCAM attributes as I could produce into layers using a zcam_X naming convention (the same value in each layer’s rgba channels), whilst leaving the XYZ data in the main layer. You can view them by looking at the stream with a LayerContactSheet node.

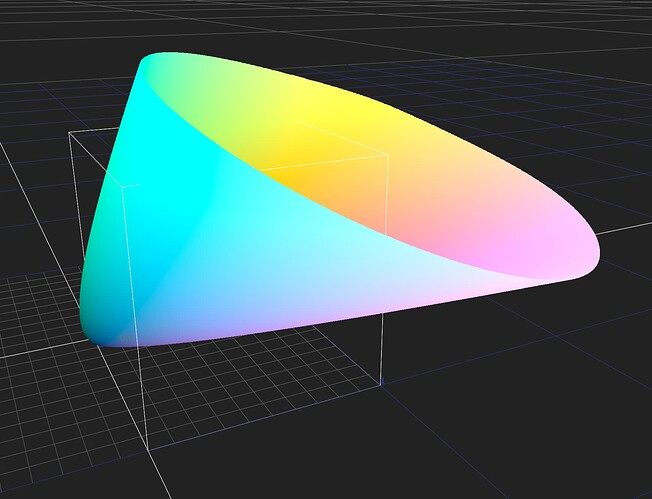

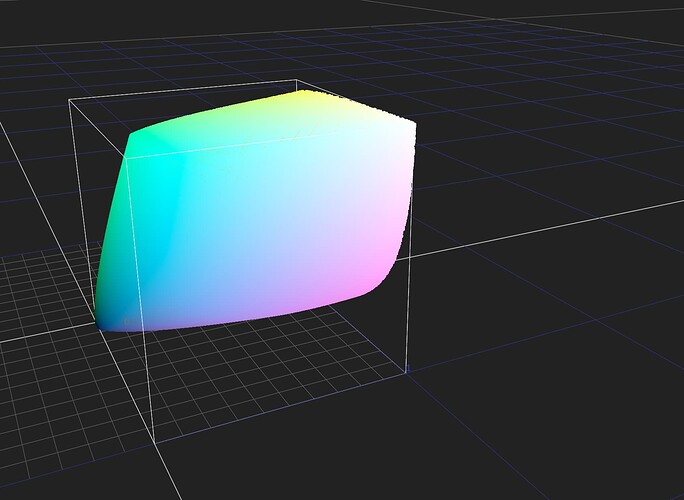
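For reference, the published ZCAM formulas (Safdar et al., 2021) derive chroma, saturation, vividness, blackness and whiteness from lightness Jz, colourfulness Mz, brightness Qz, the white’s brightness Qz,w and the luminance-adaptation factor FL. Below is a small sketch of those formulas as I read them, plus a hypothetical packing into zcam_X-style layers with the same value copied to each rgba channel. The function names and layer names are illustrative assumptions, not what the node actually uses.

```python
import math


def derived_attributes(jz, mz, qz, qz_w, fl):
    """ZCAM chroma, saturation, vividness, blackness, whiteness (as I read the paper)."""
    cz = 100 * mz / qz_w  # chroma
    return {
        "C": cz,
        "S": 100 * fl**0.6 * math.sqrt(mz / qz),             # saturation
        "V": math.sqrt((jz - 58) ** 2 + 3.4 * cz**2),        # vividness
        "K": 100 - 0.8 * math.sqrt(jz**2 + 8 * cz**2),       # blackness
        "W": 100 - math.sqrt((100 - jz) ** 2 + cz**2),       # whiteness
    }


def to_layers(attrs):
    """Hypothetical layout: one zcam_<name> layer per attribute, value copied to r, g, b, a."""
    return {f"zcam_{name}": (v, v, v, v) for name, v in attrs.items()}


layers = to_layers(derived_attributes(jz=100.0, mz=0.0, qz=250.0, qz_w=250.0, fl=0.79))
print(layers["zcam_W"])  # (100.0, 100.0, 100.0, 100.0) for an achromatic white
```

Copying one scalar into all four channels wastes some memory, but it means any single channel of a zcam_X layer can be graded or viewed on its own.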

Inverse reconstructs XYZ, but only from the J, aM and bM attributes (this could/should change in the future).

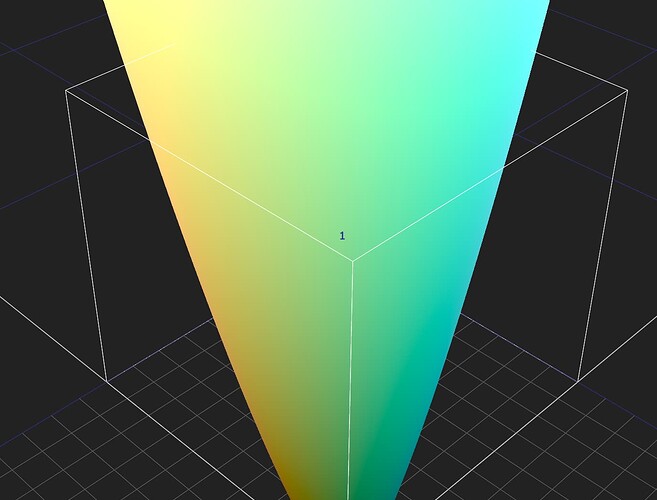

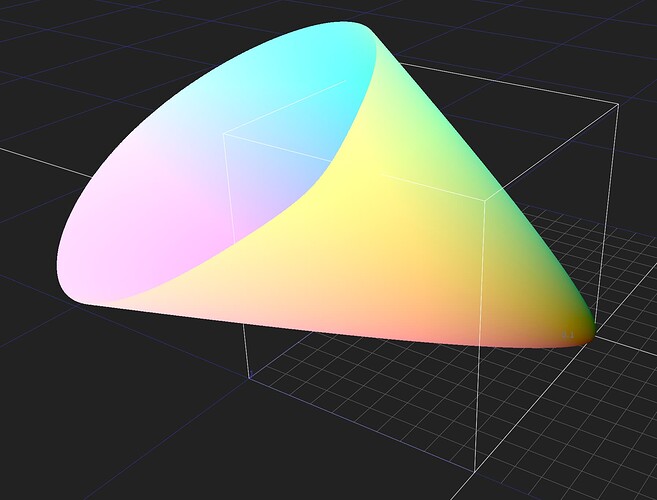

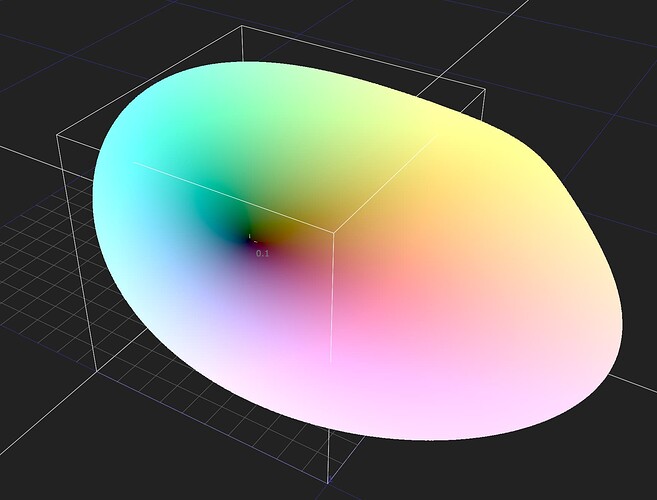
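A rough Python/NumPy sketch of the J, aM, bM round trip, written from my reading of the published ZCAM equations rather than from the node or luxpy, so the constants are worth checking against luxpy. Assumptions: input XYZ is absolute (cd/m²) and already adapted to D65, so the CAT02 chromatic adaptation step is skipped; hue quadrature is omitted; the function names and the defaults La = 100 and Yb = 20 are hypothetical choices for illustration.

```python
import numpy as np

# Izazbz constants as I read them from the ZCAM/Jzazbz papers (verify against luxpy).
B_COEF, G_COEF = 1.15, 0.66
C1, C2, C3 = 3424 / 2**12, 2413 / 2**7, 2392 / 2**7
ETA, RHO = 2610 / 2**14, 1.7 * 2523 / 2**5
EPS = 3.7035226210190005e-11
M_LMS = np.array([[0.41478972, 0.579999, 0.0146480],
                  [-0.2015100, 1.120649, 0.0531008],
                  [-0.0166008, 0.264800, 0.6684799]])
# Maps (R', G', B') to (Iz + EPS, az, bz); ZCAM takes Iz from G' alone.
M_IAB = np.array([[0.0, 1.0, 0.0],
                  [3.524000, -4.066708, 0.542708],
                  [0.199076, 1.096799, -1.295875]])
SURROUND_FS = {"average": 0.69, "dim": 0.59, "dark": 0.525}


def xyz_to_izazbz(xyz):
    """Absolute, D65-adapted XYZ -> (Iz, az, bz). Assumes positive cone responses."""
    x, y, z = xyz
    xp = B_COEF * x - (B_COEF - 1) * z
    yp = G_COEF * y - (G_COEF - 1) * x
    rgb = M_LMS @ np.array([xp, yp, z])
    t = (rgb / 10000.0) ** ETA
    rgb_p = ((C1 + C2 * t) / (1 + C3 * t)) ** RHO  # PQ-style compression
    gp, az, bz = M_IAB @ rgb_p
    return gp - EPS, az, bz


def izazbz_to_xyz(iz, az, bz):
    """Inverse of xyz_to_izazbz."""
    rgb_p = np.linalg.solve(M_IAB, np.array([iz + EPS, az, bz]))
    u = rgb_p ** (1 / RHO)
    rgb = 10000.0 * ((C1 - u) / (C3 * u - C2)) ** (1 / ETA)  # inverse PQ
    xp, yp, z = np.linalg.solve(M_LMS, rgb)
    x = (xp + (B_COEF - 1) * z) / B_COEF
    y = (yp + (G_COEF - 1) * x) / G_COEF
    return np.array([x, y, z])


def viewing(xyz_w, la, yb, surround):
    """Surround factor Fs, background factor Fb, luminance adaptation FL, white Iz."""
    fs = SURROUND_FS[surround]
    fb = np.sqrt(yb / xyz_w[1])
    fl = 0.171 * la ** (1 / 3) * (1 - np.exp(-48 / 9 * la))
    return fs, fb, fl, xyz_to_izazbz(xyz_w)[0]


def brightness(iz, fs, fb, fl):
    return 2700 * iz ** (1.6 * fs / fb**0.12) * fs**2.2 * fb**0.5 * fl**0.2


def eccentricity(hz_deg):
    return 1.015 + np.cos(np.radians(89.038 + hz_deg))


def forward_jab(xyz, xyz_w, la=100.0, yb=20.0, surround="average"):
    fs, fb, fl, iz_w = viewing(xyz_w, la, yb, surround)
    iz, az, bz = xyz_to_izazbz(xyz)
    jz = 100 * brightness(iz, fs, fb, fl) / brightness(iz_w, fs, fb, fl)
    hz = np.degrees(np.arctan2(bz, az)) % 360
    mz = (100 * np.hypot(az, bz) ** 0.37 * eccentricity(hz) ** 0.068 * fl**0.2
          / (fb**0.1 * iz_w**0.78))
    return jz, mz * np.cos(np.radians(hz)), mz * np.sin(np.radians(hz))


def inverse_jab(jz, am, bm, xyz_w, la=100.0, yb=20.0, surround="average"):
    fs, fb, fl, iz_w = viewing(xyz_w, la, yb, surround)
    qz = jz / 100 * brightness(iz_w, fs, fb, fl)
    iz = (qz / (2700 * fs**2.2 * fb**0.5 * fl**0.2)) ** (fb**0.12 / (1.6 * fs))
    mz = np.hypot(am, bm)
    hz = np.degrees(np.arctan2(bm, am)) % 360
    cpz = (mz * fb**0.1 * iz_w**0.78
           / (100 * eccentricity(hz) ** 0.068 * fl**0.2)) ** (1 / 0.37)
    return izazbz_to_xyz(iz, cpz * np.cos(np.radians(hz)), cpz * np.sin(np.radians(hz)))


white = np.array([95.047, 100.0, 108.883])
j, am, bm = forward_jab(np.array([40.0, 35.0, 20.0]), white)
print(inverse_jab(j, am, bm, white))  # approximately [40, 35, 20]
```

The round trip only holds while the intermediate cone responses stay positive; negative values (e.g. out-of-gamut or negative input) make the fractional powers return NaN, so a real node would need some clamping or sign handling there.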

There are a bunch of params to play around with; the only ones I’ve really touched so far are the different surround constants (which seem to do… something).

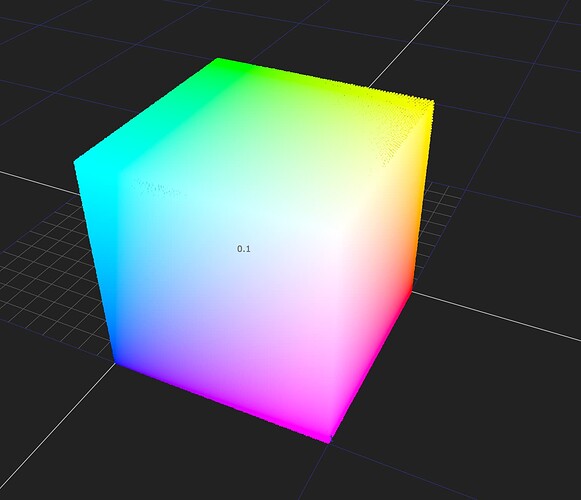
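One reason the surround constants visibly change things: in the ZCAM equations as I read them, lightness is Jz = 100 · Qz / Qz,w, and the constant factors in Qz (2700, Fs^2.2, Fb^0.5, FL^0.2) cancel in that ratio, leaving Jz = 100 · (Iz / Iz,w)^(1.6 Fs / Fb^0.12). So the surround only changes the exponent. A tiny sketch, using the Fs values 0.69 / 0.59 / 0.525 for average / dim / dark from the ZCAM paper; the function name and the Yb = 20 default are my own assumptions.

```python
import math

# Surround factor Fs per ZCAM surround (as I read the paper).
SURROUND_FS = {"average": 0.69, "dim": 0.59, "dark": 0.525}


def lightness(iz, iz_w, surround, yb=20.0, yw=100.0):
    """Jz from the Iz ratio; hypothetical helper for illustration."""
    fs = SURROUND_FS[surround]
    fb = math.sqrt(yb / yw)  # background factor Fb
    # 2700, Fs**2.2, Fb**0.5 and FL**0.2 cancel in Qz / Qz,w, leaving only the exponent.
    return 100 * (iz / iz_w) ** (1.6 * fs / fb**0.12)


for s in SURROUND_FS:
    print(s, round(lightness(0.05, 0.2, s), 2))
```

With a stimulus at a quarter of the white’s Iz, the darker surrounds use a smaller exponent and so give a higher Jz for the same input, while the white itself stays at Jz = 100 in every surround.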

The guts of the node are pretty gory, but it seems to do what I expect: forward → inverse behaves as a null op, and pushing the zcam_J layer around in the middle will push things around. Hopefully there is some value here for people who want to experiment and try to understand it (which is all I’m attempting to do here).

I’ve based my implementation on the Python version found in luxpy here: