Hi,

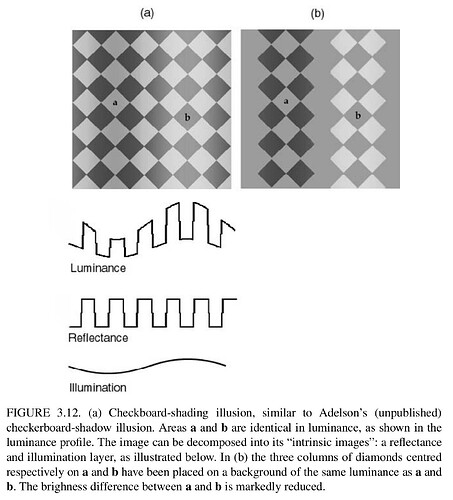

A few weeks back, @Troy_James_Sobotka presented some ideas after one of the Wednesday meetings. I contacted him afterwards and tried to set up a small, simple scene in Blender to understand one of the issues a bit better.

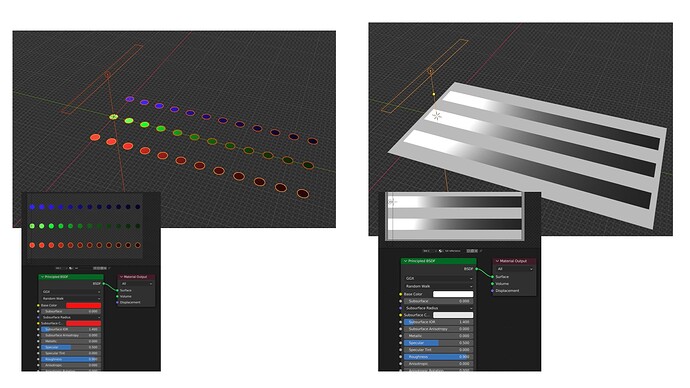

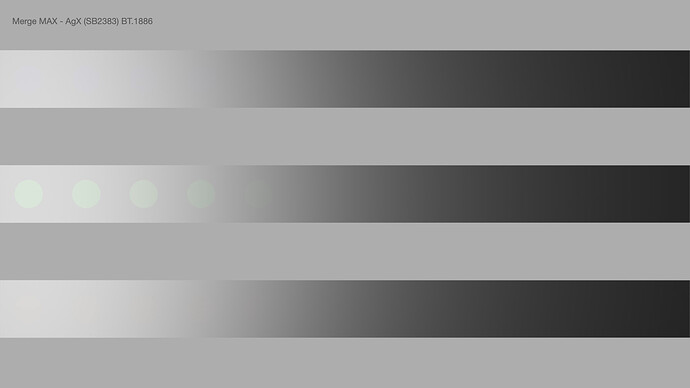

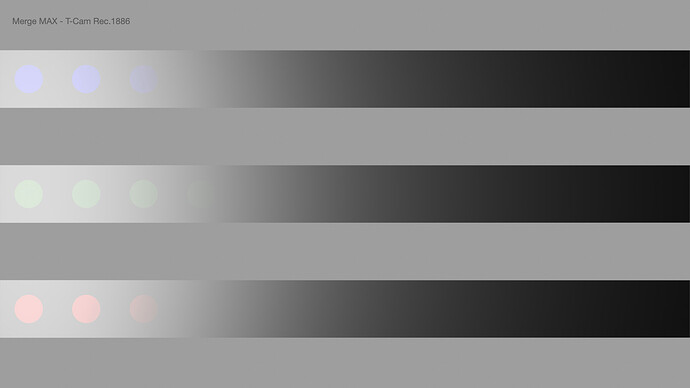

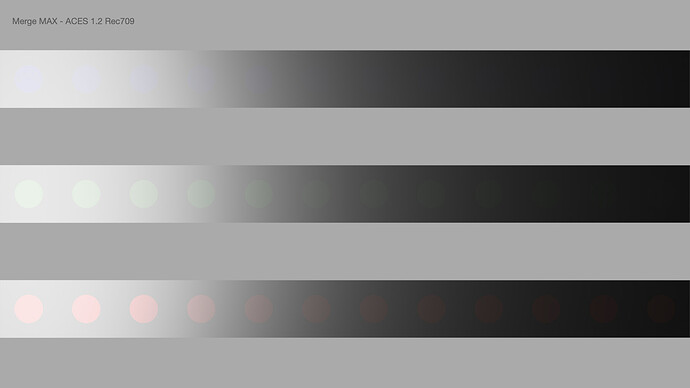

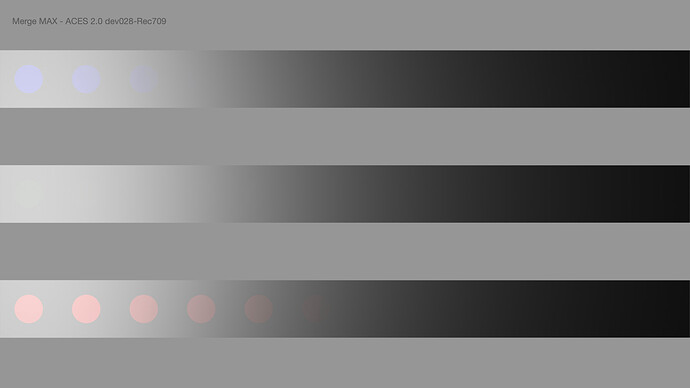

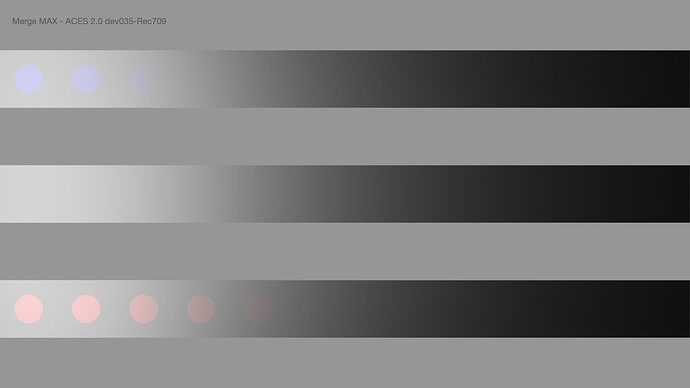

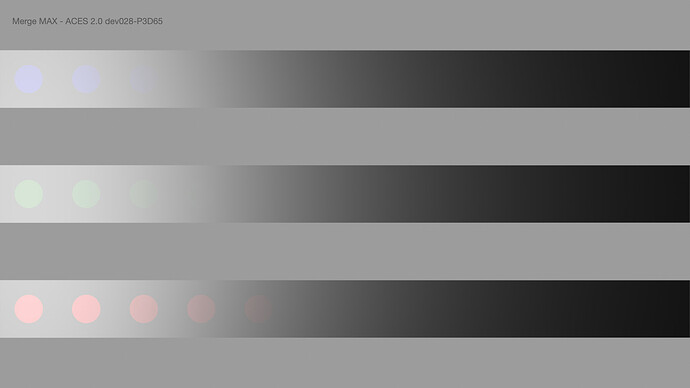

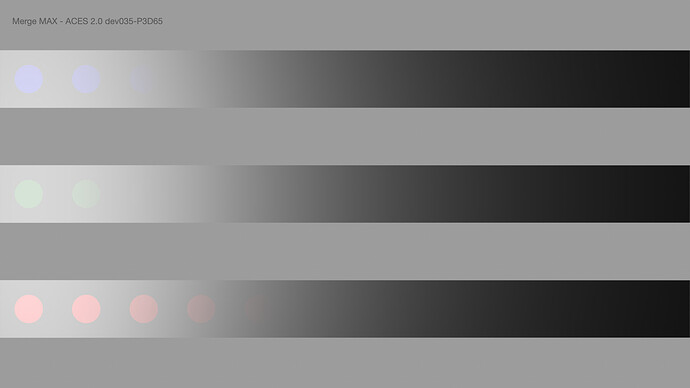

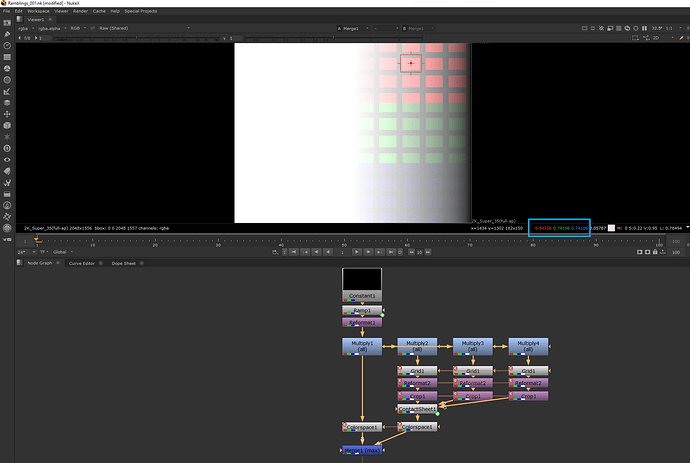

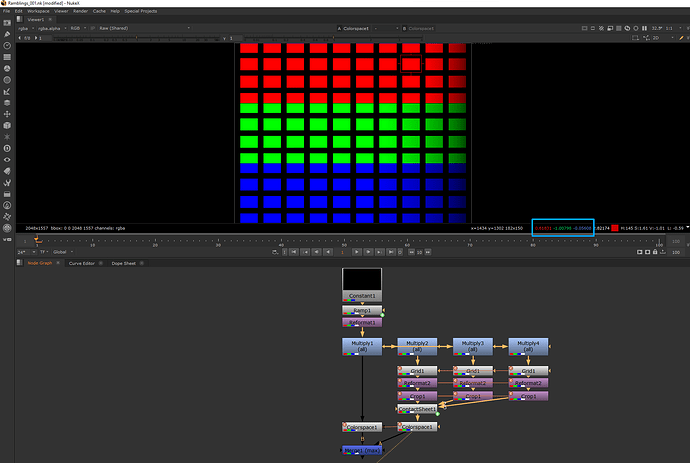

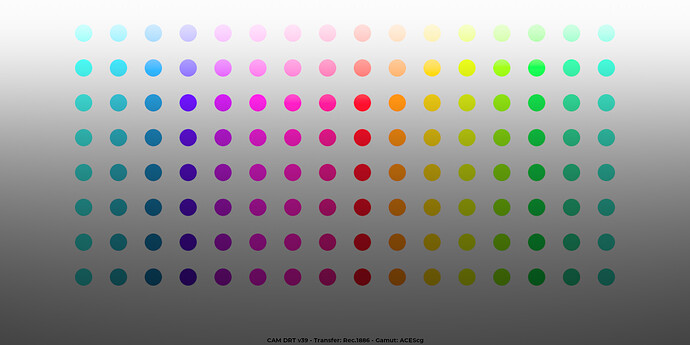

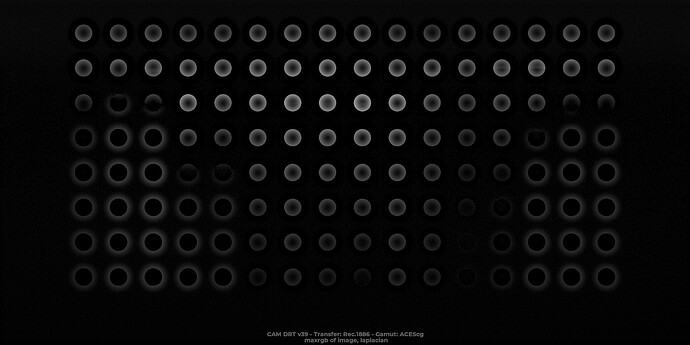

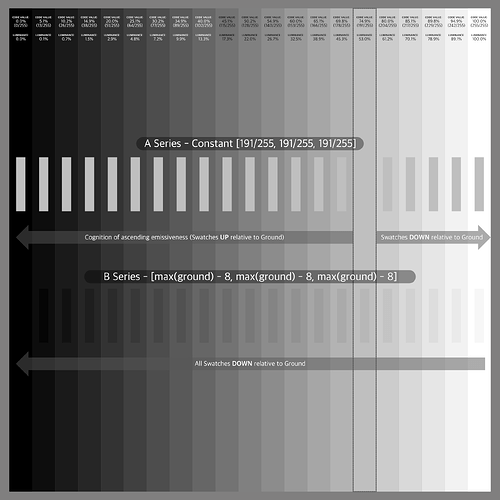

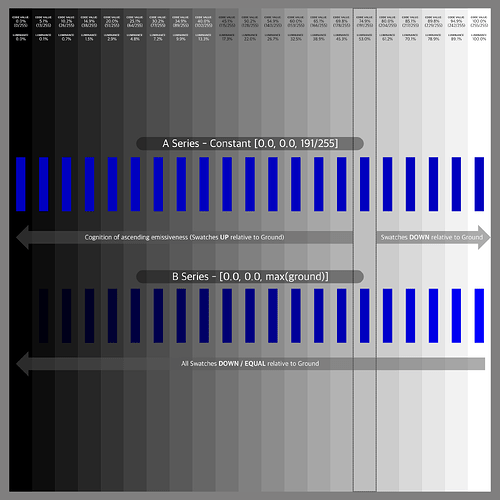

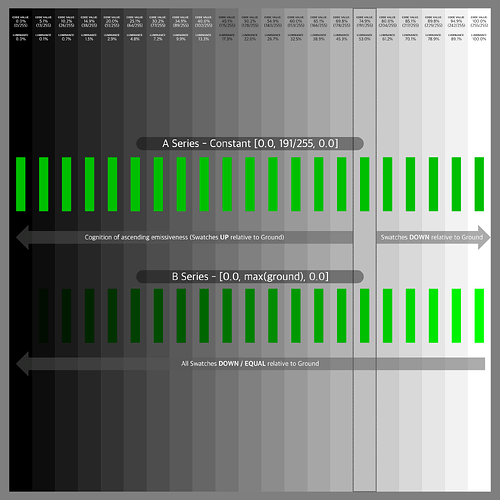

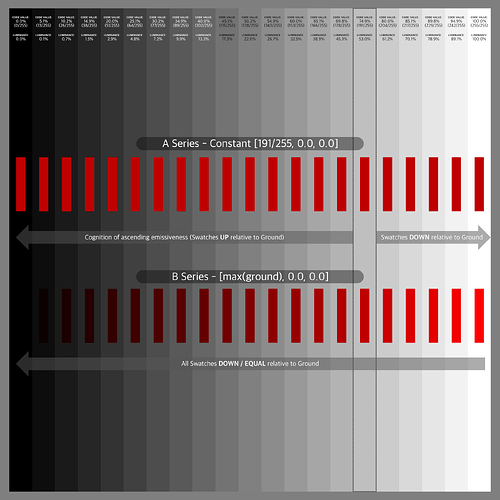

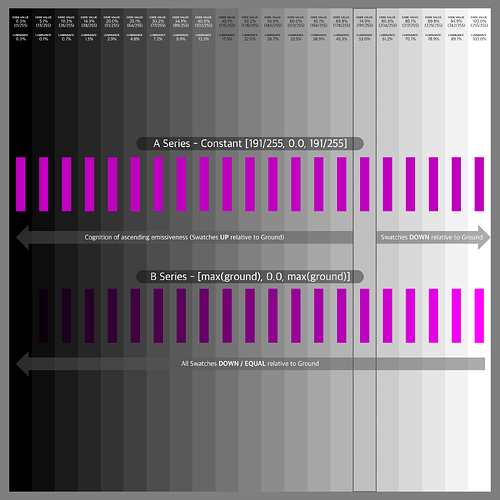

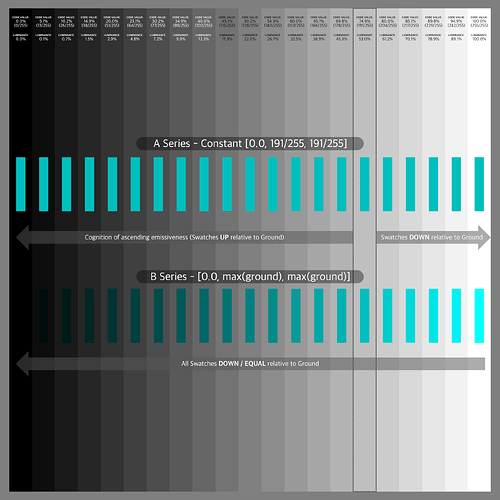

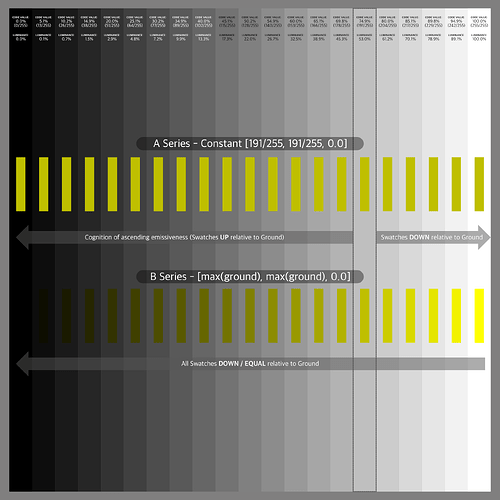

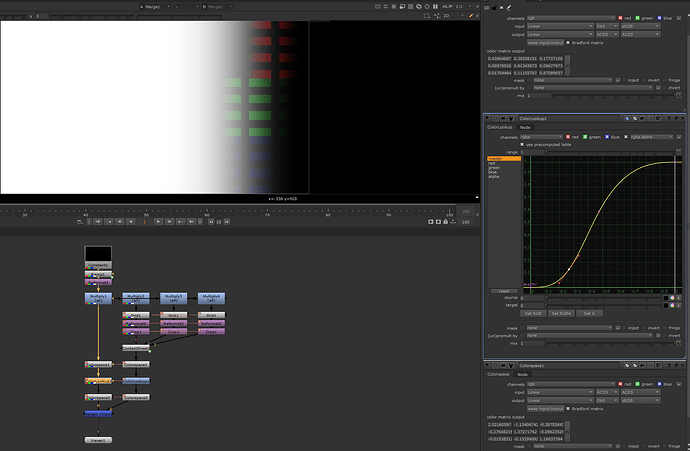

The setup uses a PBR shader to simulate a fully reflective achromatic (white) wall. The wall is lit by a single achromatic (white) area light, and the falloff of the light creates a gradient. On top of that I placed three rows of fully reflective (very rough) red, green and blue dots, which are lit by the same area light. I render two passes, one for the “white” stripes and one for the dots. The Blender scene is set up in standard linear sRGB/Rec.709.

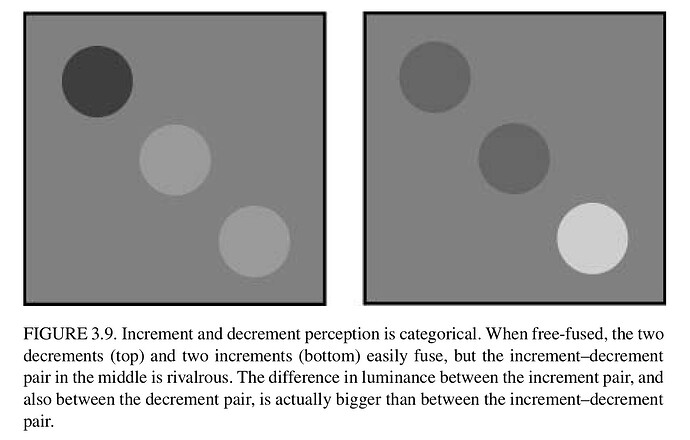

The idea is, if I got it right, that the coloured dots receive the same amount of light energy as the white stripes below them. Each dot reflects in only one channel and absorbs the other two. So no matter which viewing pipeline you use to look at the merged result of the two render layers, the coloured dots should not have a higher emission value than the white stripes at the same horizontal position.
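To spell out the arithmetic behind that expectation, here is a minimal sketch in plain Python. It assumes an idealised, fully rough surface where the reflected scene-linear RGB is simply irradiance times albedo per channel. The falloff samples and the function names are made up for illustration and are not taken from the Blender scene.

```python
def reflected(irradiance, albedo):
    """Scene-linear RGB leaving an idealised rough surface: irradiance * albedo per channel."""
    return tuple(irradiance * a for a in albedo)

WHITE = (1.0, 1.0, 1.0)   # fully reflective achromatic wall
RED = (1.0, 0.0, 0.0)     # reflects one channel, absorbs the other two
GREEN = (0.0, 1.0, 0.0)
BLUE = (0.0, 0.0, 1.0)

# Hypothetical irradiance samples along the gradient, brightest near the light.
falloff = [4.0, 2.0, 1.0, 0.5, 0.25, 0.125]

def dot_within_stripe(irradiance, dot_albedo):
    """True if no channel of the dot exceeds the white stripe at the same position."""
    stripe = reflected(irradiance, WHITE)
    dot = reflected(irradiance, dot_albedo)
    return all(d <= s for d, s in zip(dot, stripe))

all_within = all(
    dot_within_stripe(e, colour)
    for e in falloff
    for colour in (RED, GREEN, BLUE)
)
print(all_within)
```

In scene-linear values the dot matches the stripe in one channel and is zero in the other two, so it can never exceed the stripe in any channel.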

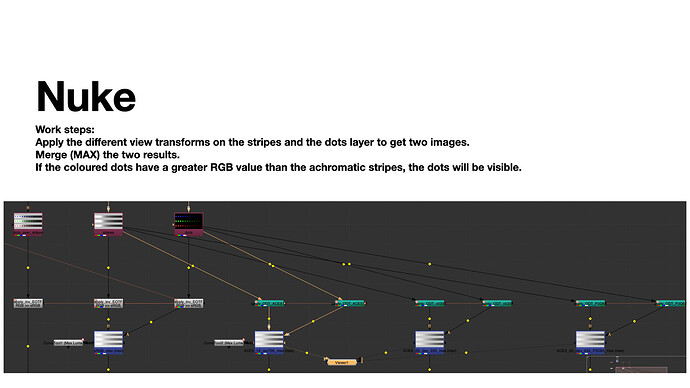

To make the results easier to check, the idea is to merge (max) the dots layer and the stripes layer after the ODT, DRT, etc.
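As a rough illustration of that check, the sketch below applies a display transform to both layers, takes the per-channel max, and flags any pixel where the merged result is higher than the stripe alone. The per-channel sRGB encoding is only a stand-in assumption for a simple viewing pipeline; it is not the implementation of any of the transforms tested here, and the sample values and helper names are hypothetical.

```python
def srgb_encode(x):
    """Per-channel sRGB encoding, clipped to [0, 1]; a stand-in display transform."""
    x = min(max(x, 0.0), 1.0)
    return 12.92 * x if x <= 0.0031308 else 1.055 * x ** (1 / 2.4) - 0.055

def display(pixel):
    """Apply the stand-in transform to each channel independently."""
    return tuple(srgb_encode(c) for c in pixel)

def merge_max(a, b):
    """Per-channel maximum of two pixels, like a merge (max) of two layers."""
    return tuple(max(ca, cb) for ca, cb in zip(a, b))

def dot_exceeds_stripe(stripe_px, dot_px, tol=1e-6):
    """True if the max merge differs from the stripe, i.e. the dot 'wins' in some channel."""
    merged = merge_max(stripe_px, dot_px)
    return any(m > s + tol for m, s in zip(merged, stripe_px))

# Hypothetical scene-linear samples at matching horizontal positions.
energies = (2.0, 0.5, 0.18, 0.02)
stripes = [(e, e, e) for e in energies]
dots = [(e, 0.0, 0.0) for e in energies]

flags = [dot_exceeds_stripe(display(s), display(d)) for s, d in zip(stripes, dots)]
print(flags)
```

With a transform that is applied per channel and never decreases as its input increases, the ordering of each channel is preserved and no pixel is flagged. Any flagged pixel therefore means the transform pushed at least one channel of the dot above the stripe.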

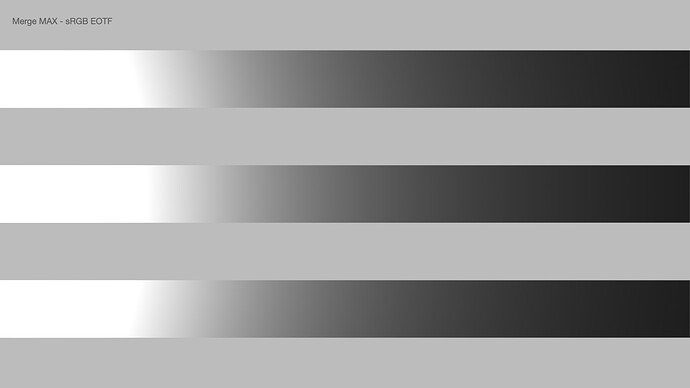

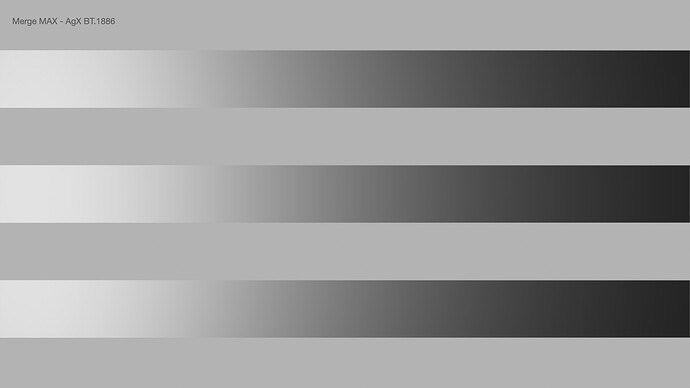

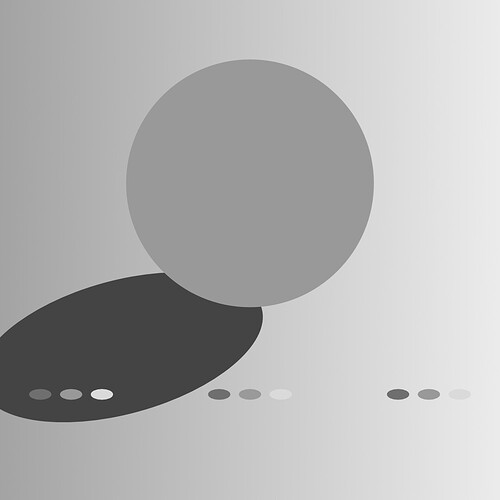

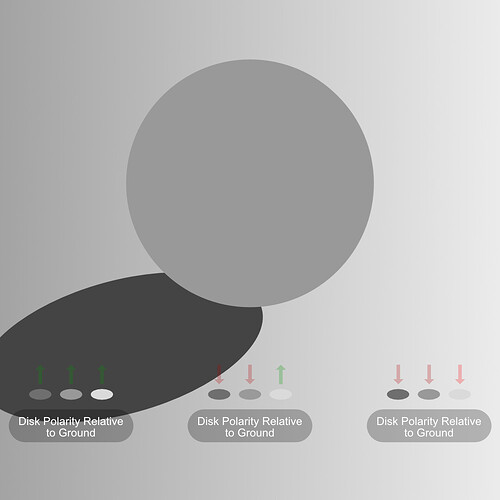

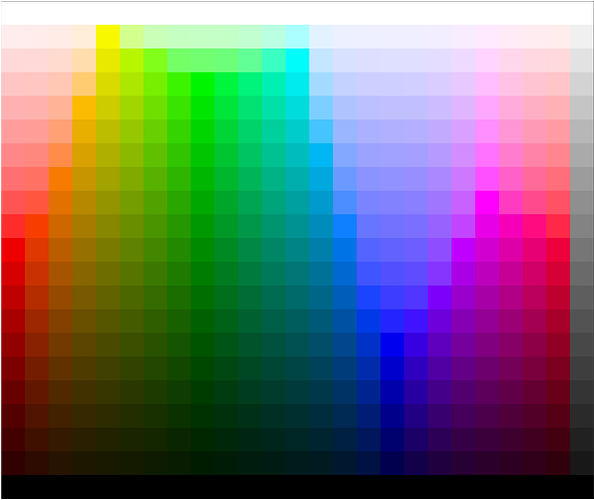

With the standard Nuke pipeline, the merge (max) operation shows only the white stripes, as expected. Next I tried two versions of AgX, T-Cam, ACES 1.2 Rec.709, and ACES 2.0 dev028 and dev035 in Rec.709 and P3D65.

The results show that several of these viewing pipelines “render” the coloured dots with a higher RGB value than the white stripe below them, even though in the source Blender scene the stripes and the dots received equal energy.

I find this interesting to inspect, and maybe someone can share some thoughts about why it happens and what it means in general.

(I exported the images from a Keynote document, which is why the JPEG uploads are all tagged Display P3. The original files that I dropped into the document are sRGB and P3.)

Here is the EXR multipass file:

https://my.hidrive.com/lnk/FZUrl4Sh

Best, Daniel