Thanks Scott for these very detailed notes. The menu on the left side is very handy as well (to switch between meetings). That’s great !

As promised, I just wanted to copy/paste here a couple of thoughts shared after the meeting. As you can see, my willingness to ask questions is probably endless.

About @daniele 's proposal :

the one idea does not mean we cannot come up with an awesome vanilla ACES output transform.

As it has been mentioned in the meeting by Daniele and Thomas, one thing does not take away the other. That’s one interesting aspect of this proposal. ACES could indeed be a compartmentalized system, with the default focusing on making imagery look good/correct/neutral (I don’t know how to put it differently, sorry).

But if the framework is generalized, deploying fixes and updates becomes much easier. […] I think if we do an open source joint effort development we should aim for solutions which ease our day-to-day life.

If one could easily build an output transform with ArriK1S1, RedIPP2 or TCAM for different displays without having to “hack” the system with an LMT, it would indeed be an improvement for many studios, since this is what is happening already. A bit like the OCIO config from the Gamut Mapping VWG, which I love. Anyway, as mentioned by Thomas, the two are not mutually exclusive, which makes this proposal really interesting and maybe more future-proof and suitable for the long term.

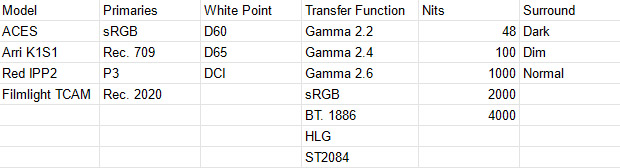

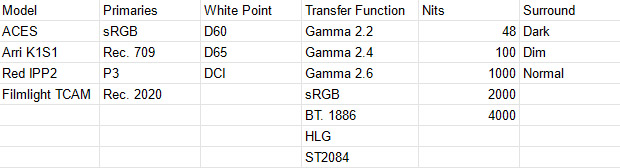

I have put together this super basic spreadsheet just to list all the options currently present in the output transforms (and added the ARRI, RED and TCAM models) :

I don’t know if that’s useful but I am curious to see if I have forgotten any “parameter”. One could indeed build their own Display Rendering Transform with these modules available. I did not include the White Point Simulations on purpose.
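To make the "modules" idea a bit more concrete, here is a minimal Python sketch of how a Display Rendering Transform could be assembled from interchangeable stages. Everything in it is an assumption for illustration: the names `compose`, `per_channel` and `simple_tone_scale` are hypothetical, the tone scale is a placeholder and not the ACES RRT, and only the sRGB encoding function follows the published piecewise definition.

```python
from functools import reduce
from typing import Callable, Sequence, Tuple

RGB = Tuple[float, float, float]
Stage = Callable[[RGB], RGB]


def compose(stages: Sequence[Stage]) -> Stage:
    """Chain stages left to right into a single transform."""
    def transform(rgb: RGB) -> RGB:
        return reduce(lambda value, stage: stage(value), stages, rgb)
    return transform


def per_channel(curve: Callable[[float], float]) -> Stage:
    """Lift a 1D curve into a stage applied to R, G and B independently."""
    def stage(rgb: RGB) -> RGB:
        return tuple(curve(c) for c in rgb)
    return stage


def simple_tone_scale(x: float) -> float:
    """Placeholder tone scale (hypothetical, NOT the ACES RRT)."""
    x = max(x, 0.0)
    return x / (x + 1.0)


def clip01(x: float) -> float:
    """Clamp a value to the displayable [0, 1] range."""
    return min(max(x, 0.0), 1.0)


def srgb_encode(x: float) -> float:
    """Piecewise sRGB encoding of a linear value in [0, 1]."""
    return 12.92 * x if x <= 0.0031308 else 1.055 * x ** (1.0 / 2.4) - 0.055


# One possible "SDR sRGB" build; swapping a stage gives another rendering.
sdr_srgb = compose([
    per_channel(simple_tone_scale),
    per_channel(clip01),
    per_channel(srgb_encode),
])

print(sdr_srgb((0.18, 0.18, 0.18)))
```

Replacing `simple_tone_scale` with another curve, or inserting a gamut mapping stage before it, would give a different rendering without touching the rest of the chain, which is how I understand the modular proposal.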

As mentioned by Thomas, it could be interesting to re-poll people’s opinions on the Output Transforms. Meanwhile I am happy to share my personal experience. I have been leading the ACES “battle” at our studio for the past 2 years and I have faced a lot of resistance (most supervisors love it and one hates it). The main arguments I have faced were :

- Hue Skews (mostly noticeable on blue and red sRGB primaries)

- Posterization (mostly noticeable when using ACEScg primaries in our lights)

- Why replace our own filmic look (1d tone-mapping) that has worked for ages ?

If I play devil’s advocate :

We often describe ACES as an ecosystem, right ? What frustrates me is that this ecosystem allows me to light with ACEScg or BT.2020 values in my CG but cannot display them properly. I would expect an ACES 2.0 OT to compress values in a perceptual way rather than applying a matrix and a clip. I have also tried to use sRGB primaries in my light saber renders but the red was going orange and was not saturated enough. I also noticed that a red sRGB primary on a red character was causing posterization on his shirt. So far the best solution has been to apply the Gamut Compress algorithm to scale back the values.

Most Digital Content Creation (DCC) software uses ACEScg as a working/rendering space and it could be argued that an artist should be able to use values such as (1, 0, 0) for a light or an emissive shader without any display issue.
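Here is a small Python sketch of what I mean by "a matrix and a clip", and why it can flatten detail. The matrix is an approximate ACEScg (AP1) to linear sRGB/Rec.709 matrix rounded to five decimals, so treat the numbers as indicative. The `gamut_compress` function is only loosely in the spirit of the Gamut Mapping VWG approach (distance from the achromatic axis, compressed above a threshold): the Reinhard-style curve and the single 0.8 threshold are my own simplifications, not the VWG's parametric curve or its parameters.

```python
# Approximate ACEScg (AP1) -> linear sRGB/Rec.709 matrix (rounded, indicative only).
AP1_TO_SRGB = (
    (1.70505, -0.62179, -0.08326),
    (-0.13026, 1.14080, -0.01055),
    (-0.02400, -0.12897, 1.15297),
)


def mat_mul(m, rgb):
    """Multiply a 3x3 matrix by an RGB triplet."""
    return tuple(sum(m[i][j] * rgb[j] for j in range(3)) for i in range(3))


def clip01(rgb):
    """Hard clip each channel to [0, 1]."""
    return tuple(min(max(c, 0.0), 1.0) for c in rgb)


def compress_distance(d, threshold=0.8):
    """Identity below the threshold, then a soft roll-off that approaches 1.0."""
    if d <= threshold:
        return d
    s = 1.0 - threshold
    x = (d - threshold) / s
    return threshold + s * x / (1.0 + x)


def gamut_compress(rgb, threshold=0.8):
    """Simplified sketch: pull out-of-gamut channels back towards the max channel."""
    ach = max(rgb)
    if ach <= 0.0:
        return rgb
    out = []
    for c in rgb:
        d = (ach - c) / ach  # 0 on the neutral axis, > 1 for negative channels
        out.append(ach - compress_distance(d, threshold) * ach)
    return tuple(out)


# Two different ACEScg reds, e.g. two shades on the same shirt.
red_a = mat_mul(AP1_TO_SRGB, (1.0, 0.0, 0.0))
red_b = mat_mul(AP1_TO_SRGB, (1.0, 0.05, 0.0))

print(red_a)  # green and blue are negative: outside the sRGB gamut
print(clip01(red_a), clip01(red_b))  # both collapse to the same clipped value
print(gamut_compress(red_a), gamut_compress(red_b))  # still distinct, no negatives
```

After the clip, both reds land on exactly the same display value, which is one plausible source of the posterization I mentioned. With the compression, the negative components are brought back inside the gamut and the two reds stay different. The red channel is still above 1.0 at this point, so a tone scale is of course still needed afterwards.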

About @doug_walker 's comment :

Doesn’t consider it a “sweetener”. It’s compensating for saturation effect of RGB tone scale.

Are you referring to this @doug_walker ? From Ed Giorgianni’s Digital Color Management (shared by Scott originally) :

In this example RRT, the 1-D grayscale transformation is applied to individual RGB color channels derived from the [ACES] values. Doing so affects how colors, as well as neutrals, are rendered. For the most part, the resulting color-rendering effects are desirable because they tend to compensate for several of the physical, psychophysical, and psychological color-related factors previously described. The exact effect that the transformation will have on colors is determined in large part by what RGB color primaries are used to encode colors at the input to the rendering transform. In general, the transformation will tend to raise the chroma of most colors somewhat too much. The reason is that the transformation is based on an optimum grayscale rendering. As such, it includes compensation (a slope increase) for the perceived reduction in luminance contrast induced by the dark surround. Since a dark surround does not induce a corresponding reduction in perceived chroma, using the same 1-D transform for colors will tend to overly increase their chromas.
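The effect described in this quote can be checked with a few lines of Python. This is only a sketch under my own assumptions: the "grayscale" curve is a plain power function pivoting at 0.18 with a slope of 1.2 (not the actual RRT tone scale), and `saturation` is a crude (max - min) / max proxy rather than a perceptual chroma measure.

```python
def contrast_curve(x, slope=1.2, pivot=0.18):
    """Power curve pivoting at mid-grey; slope > 1 increases contrast."""
    return pivot * (x / pivot) ** slope


def saturation(rgb):
    """Crude saturation proxy: (max - min) / max."""
    hi, lo = max(rgb), min(rgb)
    return (hi - lo) / hi if hi > 0.0 else 0.0


colour = (0.30, 0.18, 0.10)

# 1) The same 1-D curve applied to each RGB channel independently.
per_channel = tuple(contrast_curve(c) for c in colour)

# 2) The curve applied to a single norm (here max RGB), then RGB scaled
#    uniformly so that the channel ratios are preserved.
norm = max(colour)
scale = contrast_curve(norm) / norm
ratio_preserving = tuple(c * scale for c in colour)

print(saturation(colour))
print(saturation(per_channel))       # higher than the input
print(saturation(ratio_preserving))  # same as the input
```

With the per-channel version, the contrast increase meant for the grayscale also stretches the differences between channels, so the proxy goes up. With the ratio-preserving version it stays where it was. Neutrals are identical in both cases.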

I have noticed, using the Gamut Mapping VWG OCIO config, that the Rec.709 (ACES) Output Transform was more saturated than ARRI K1S1 and Red IPP2. Is this what you’re referring to ? Because from what I understood, per-channel does not get more saturated but rather creates a different mixture (gif originally created by Nick Shaw and originally posted here) ? Thanks for your insight !

Finally, @daniele : please never shut up ! I personally think your idea is very interesting and worth debating. Thanks for being here and sharing so much ! For a noob like me, your knowledge is priceless !

Kind regards,

Chris

PS1 : Once again, if you guys need synthetic imagery, without any IDT involved in the process, just let me know. Maybe it will be useful at some point to review Output Transforms.

PS2 : If you guys want me to create a post about splines (b-splines, beziers versus expressions), just let me know. I could copy/paste useful stuff in it.