Splitting this topic from @ChrisBrejon's original post:

What I don’t understand about this is, why do we (the ACES team) have to make this possible? Can’t people already do this? I mean, if you want to use K1S1 or RED IPP2 or TCAM, then there’s nothing prohibiting people from just using them. Why are people trying to shoehorn these looks into an ACES workflow? And if they are so hell-bent on using one of the other renders, then why not just use them? Why are they fighting to use system X within ACES when that system X exists already in parallel and can just be used instead? I expect there are reasons for this, and I’d like to hear them. I think they will inform the work that this group decides to do.

For example, if the reason is anything like “well ACES doesn’t let me get to this particular color” or “I like the way system X looks better” or “ACES doesn’t let me do whatever” then I feel we should be looking at fixing those hindrances rather than just resorting to a metadata tracking system (which IMO always fails eventually). If we can make ACES easier to use (dare I even say “a pleasure” to use?), then will that instead help some of those detractors be ok with ACES? There are things that are broken in ACES - we know this. So let’s fix them such that those easy excuses and reasons are gone.

One argument for allowing “choose your own” output transform as a part of ACES could be that we already allow flexibility in working space choices, which must be tracked, so if we’re already doing it for a different system component, what’d be the harm in allowing it for the rendering transform?

I have always held that with ACES we strongly recommend using ACEScct (I personally want ACEScc and ACESproxy deprecated - for simplicity). You transcode camera files to ACES, you work in ACEScct, and you render in ACEScg. Three colorspaces is enough. They’re all there for a reason. Having options for a working space is unnecessary complication, imo. But that’s probably a topic for another thread.

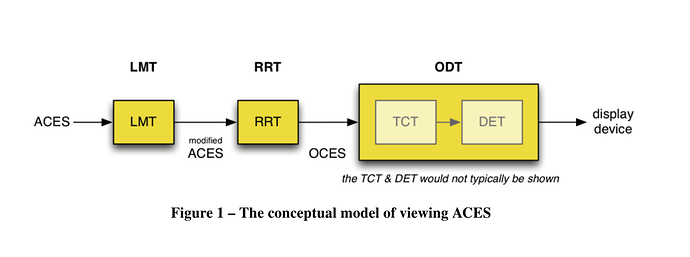

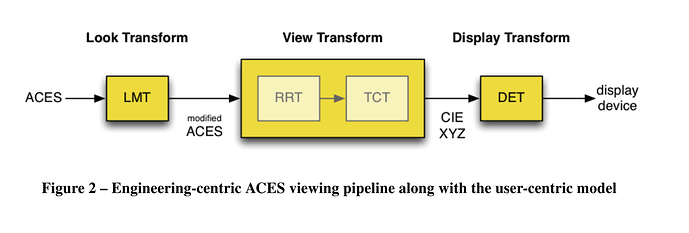

Remember the original name of ACES was the Image Interchange Framework - and it stemmed out of the desire to unambiguously encode, exchange, and archive creative intent. Metadata and AMF and all the other “stuff” that goes alongside ACES2065-1 are great for making it useful on production, but the idea is that even if all that other digital “stuff” is lost, a “negative” would still remain in a color encoding that is standardized and not just a “camera-space-du-jour”. We could theoretically make a new “print” of those ACES2065-1 files and have a pretty good idea of what the movie was “supposed to look like”.

Finally, if we build support into ACES for swapping in existing popular renders, what do we then do when other new renders come out? Where do we stop with what we do or don’t support? Do we support output-referred workflows, too? Rec. 709? HLG? Pretty soon it becomes too much. I personally want to see the system simplified, not added to. (I didn’t even want to add the CSC transforms, because suddenly it becomes “why isn’t this camera or that camera included?” instead of “oh, i can just use ACEScct for every project? i don’t have to reinvent my workflow for the next show I do that uses a different camera?”)

Final point I’ll make is that it is the charter of this group to construct a new Output Transform, not to rearchitect the entire ACES framework. So let’s fix the broken stuff and see if there’s still such a need for ACES to be expanded in scope. I really think it can deliver, as long as we fix the stuff that doesn’t work as well right now as we had hoped.