Hello!

I'd like to know if this is normal.

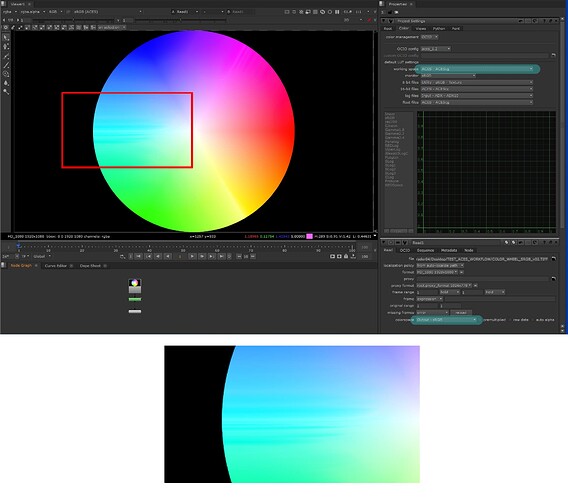

My Nuke project is in ACEScg, and I'm reading a 16-bit TIFF file in sRGB. In the Read node I use Output sRGB in the colorspace tab, but some defects are generated in the cyans (cyan horizontal lines appear).

The same happens with the same image as an 8-bit JPG.

What is happening?

If I use Utility sRGB Texture (in the Read node's colorspace), the gamma darkens the image.

Which colorspace should I use in the Read node to see an sRGB image correctly?

Hi PatucaRandall,

Have a look at this post for some more explanation.

Great!!! Thanks a lot!

Hello again!!!

After reading the post, I understand the correct setting is Utility sRGB Texture.

But now the question is the following: if I use Utility sRGB Texture, the image gets darker.

So how should I work with an ACES file exported from Resolve (in this case the colors look right) together with an sRGB file set to Utility sRGB Texture (in this case the image looks darker)?

If I have to composite and integrate one with the other, how do I match the two materials?

Utility sRGB Texture is intended for textures. If you are compositing film from Resolve, that should be exported as ACES 2065-1.

I mean, for example, stock footage or a photo from a camera, in sRGB.

I exported the camera footage from Resolve in ACES.

But the problem is: if I have to use stock footage or a photo and integrate and composite it together with the ACES footage, how should I do it? Which is the right setting? And if the right setting is Utility sRGB Texture, how do I match the colors, given that Utility sRGB Texture darkens the image?

If the intent is to use ACES only for compositing, but you don’t want the tonemapping applied to any of your footage and don’t want sRGB or any other already display-referred content to change, there really is no point in using ACES. In that case a standard linear workflow with gamma removal will suit you better.

If the idea is to integrate sRGB/Rec.709 footage into a scene-referred plate, be it CG or camera raw/log files, and to use ACES down the line as the color grading/finishing solution, the setup is correct. You can use a simple gain operation to get the image to the brightness level you want. Just know that the appearance will never exactly match that of viewing the original file outside an ACES pipeline, because of the tonemapper. But I can’t imagine a compositing scenario where it should. You’ll have to match the lighting conditions anyway.
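As a rough sketch of the "simple gain" idea: the snippet below decodes an sRGB code value to linear with the standard piecewise sRGB curve and then applies a gain expressed in stops. The function names `srgb_to_linear` and `apply_exposure` are made up for illustration, and the sketch deliberately ignores the conversion of primaries to ACEScg that the actual colorspace setting would also perform.

```python
def srgb_to_linear(code_value):
    """Decode one sRGB-encoded channel value (0-1) to linear light."""
    if code_value <= 0.04045:
        return code_value / 12.92
    return ((code_value + 0.055) / 1.055) ** 2.4


def apply_exposure(linear_value, stops):
    """Scale a linear value by a gain expressed in photographic stops."""
    return linear_value * (2.0 ** stops)


# Encoded white decodes to 1.0, so the decode alone never produces
# values above 1. Only the gain afterwards can push it higher.
white = srgb_to_linear(1.0)
boosted_white = apply_exposure(white, 2.0)  # +2 stops, i.e. a gain of 4
half_code = srgb_to_linear(0.5)             # encoded 0.5 is well below 0.5 in linear
```

The number of stops is a creative choice per element; nothing in the file itself says what the original scene value was.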

Hope that makes sense!

This is interesting. What are your thoughts on alternately using the inverse of the output transform for this? One advantage is that it would give values above 1 for light sources in the image in the comp, as well as retaining the look of the original sRGB/709 image.

The reasoning is that for a texture map you do not want values to go above 1, as this makes them light emitting, which is physically incorrect for a texture map on a render. Conversely, if you are reading in a film plate, you do want the values of light-emitting things in the image (the sun, a bulb) to go above 1, as they would in a render or camera raw.

The example of the color wheel is perhaps somewhat misleading, as it is not a photo at all. It is more an example of integrating logos and graphics, which is a whole other can of worms.

Right, I understand the differences. I guess my assumption was based on having photographic material like a still or video of some scene. There is no way for an inverse ODT to bring something that was tone mapped by a non-ACES process back to its scene state. The result is incorrect values, where white hits 16 in float regardless of what the original scene’s state was.

In the case of compositing on a self emissive object like a display it would make sense to still use sRGB Texture because in scene state that display itself is only capable of a limited range.

For graphics it can be useful if you want very high values for easy glows perhaps? In that case the asset isn’t really bound to some original scene state that was captured.

Yes that’s certainly true. It’s definitely an imperfect “make the best of it” workflow.

To put it in a concrete case: I shoot a house with the Sony Venice, in raw, and I have to set the house on fire using fire from a stock footage library.

The set was shot with the Sony Venice, and then I export the raw material from Resolve in ACES.

I will do the comp in Nuke.

The Nuke setting is ACEScg.

If the stock footage is in sRGB, which setting is the right one?

Output sRGB or Utility sRGB Texture?

Can we assume that the file does not contain smoke? If so, then fire by itself is self-luminous, and generally it is relatively uniform in its luminance. In this case I’d go with the sRGB texture and then adjust the exposure of the image. As Shebbe was saying, there is no such thing as the “right” setting here, i.e. there is no way to select a color space that brings an sRGB image into correct scene-linear values. However, with something like fire, you can adjust the exposure to bring it to the scene value you want.

That’s a good point. Thinking about this further, it seems like it would indeed be a better workflow to read in each comp element with sRGB Texture, and then adjust the exposure for each element as appropriate. For an element of a building likely not at all, for a monitor a little, and for a sky a lot, and for sun a whole lot.

Great!!!

I will try this method.

I thought it was something more “automatic”, like a tab / setting and nothing else.

That’s interesting, I didn’t think about fire… If it looks nicer with sRGB Output why not. It’s probably easier for adding ‘punch’ and glows etc. But afaik with ‘default’ linear workflow linearizing sRGB gamma is also only 0-1 values. Go with whatever works most natural I guess.

I’d say there is no rule for this specific creative context. As long as your global ACES pipeline is valid the result is all that matters.

With fire you definitely want the tone mapping of the ACES output transform, rather than default Nuke. The default Nuke output transform is a simple gamma EOTF without tone mapping and will clamp values over 1 making fire and pyro look like this:

as opposed to tone mapped like this:
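In numbers rather than pictures, the difference can be sketched roughly as follows. This is only an illustration under stated assumptions: a plain 2.2 gamma stands in for the simple display encode, and a Reinhard-style curve `x / (1 + x)` stands in for tone mapping. It is not the ACES Output Transform, and the function names and the fire values are hypothetical.

```python
def display_clamp_gamma(scene_value, gamma=2.2):
    """Clamp to 0-1, then apply a plain gamma encode (no tone mapping)."""
    clamped = min(max(scene_value, 0.0), 1.0)
    return clamped ** (1.0 / gamma)


def display_tone_mapped(scene_value, gamma=2.2):
    """Compress highlights with a Reinhard-style curve, then gamma encode.

    The curve is a stand-in chosen for brevity; it is NOT the ACES transform.
    """
    positive = max(scene_value, 0.0)
    compressed = positive / (1.0 + positive)
    return compressed ** (1.0 / gamma)


fire_values = [2.0, 8.0, 32.0]  # hypothetical scene-linear fire intensities

# With clamping, every value above 1 lands on the same display white,
# so all gradation inside the fire is lost.
clamped = [display_clamp_gamma(v) for v in fire_values]

# With tone mapping, the values stay distinct and below display white.
tone_mapped = [display_tone_mapped(v) for v in fire_values]
```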

Ah sorry, I was talking about the IDT side, converting gamma encoded assets to linear working space like stock footage. Not scene-referred CG fire to display output.

If you want your fire to render through the ACES Output Transform to something that looks like it has emissive properties, rather than being a diffuse reflector, you definitely want an IDT which will produce scene values >1. sRGB Texture will not do this. Output sRGB will, and if left unmodified in the scene state it will render back through an sRGB Output Transform to (closely) match the original. But you need to be careful what you do with the ACES values in the scene state, as anything you do will break the end-to-end ~NoOp, and may push values further into the roll-off than you want. And if you need to be able to use HDR Output Transforms, you need to be doubly careful that you don’t end up with something that was only slightly brighter than diffuse white in the original, but ends up at an HDR value that is far too high.

So it can be useful to make an IDT that is not an exact inverse of the sRGB ODT, since an exact inverse has a curve that goes vertical where the forward transform goes flat.
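To illustrate why the exact inverse "goes vertical", here is a minimal sketch that again uses a Reinhard-style curve as a stand-in for the real Output Transform (an assumption made for brevity; the actual ACES curve is different). The helper names and the `max_display` parameter are hypothetical, and limiting the input is just one crude way of making an inverse that is not exact.

```python
def forward_curve(scene_value):
    """Stand-in tone curve: flattens toward 1.0 for bright input."""
    return scene_value / (1.0 + scene_value)


def exact_inverse(display_value):
    """Exact inverse of forward_curve; diverges as display_value nears 1.0."""
    return display_value / (1.0 - display_value)


def softened_inverse(display_value, max_display=0.9):
    """Inverse that limits its input so encoded white maps to a finite peak.

    max_display is a hypothetical tuning value: 0.9 gives a peak near 9.0.
    """
    return exact_inverse(min(display_value, max_display))


# Small steps near display white turn into huge steps in scene values.
steep = [exact_inverse(v) for v in (0.9, 0.99, 0.999)]

# The softened version maps encoded white to a finite, chosen scene value.
peak = softened_inverse(1.0)
```

With the exact inverse, an encoded value of exactly 1.0 would divide by zero here, which is the numeric face of the vertical curve.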

Nick, can you say more about how to approach this in OCIO?