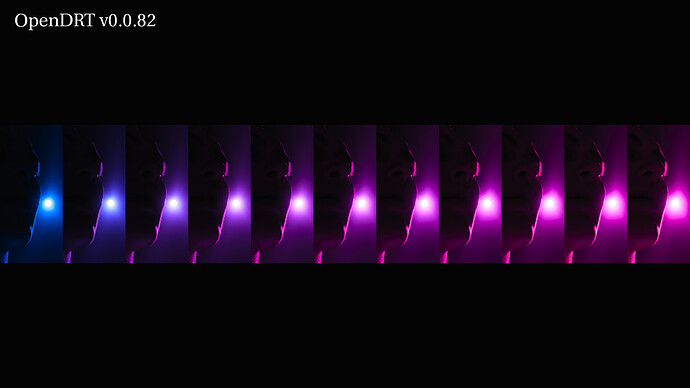

v0.0.82 is worth poking at. I think it’s Jed’s best work so far. It includes a very interesting feature :

Update perceptual dechroma to use ICtCp colorspace. This biases the chromaticity-linear hue paths of the highlight dechroma and gamut compression, along perceptual hue-lines, resulting in more natural looking colors and better appearance matching between HDR and SDR outputs.

From the tests I have been doing, it gives a very nice span of values. This is a sweep from an ACEScg blue primary to magenta. It goes like this :

- 0/0/1 - 0.1/0/1 - 0.2/0/1 - 0.3/0/1 - 0.4/0/1 - 0.5/0/1 - 0.6/0/1 - 0.7/0/1 - 0.8/0/1 - 0.9/0/ - 1/0/1

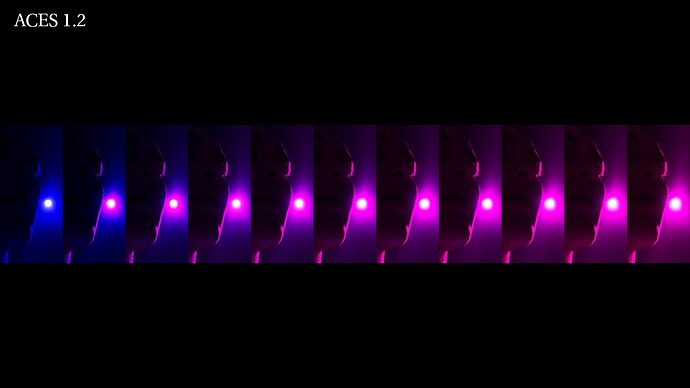

Same render displayed with ACES 1.2. My range of values got collapsed into two.

Thanks,

Chris

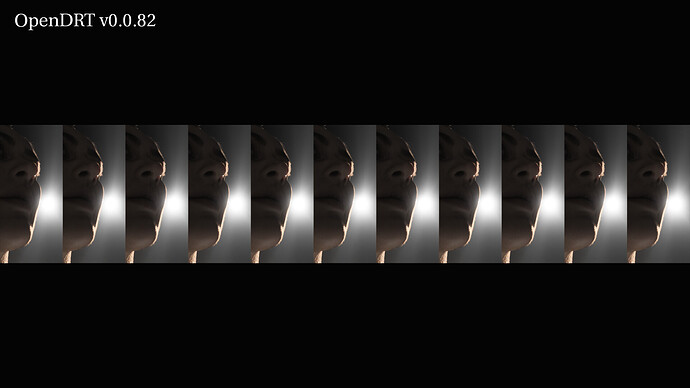

PS : If you’re wondering what you’re looking at. I don’t blame you. This the CG model EiskoLouise lit by an Area Light. This is the render with achromatic values :