Hi all,

During our meeting today, we ended up in a discussion around a couple of key topics, so we thought we’d write a summary post, separate from our meeting recaps, covering where we are and the workflow decisions made so far. We want to make sure we’re still aligned on implementation plans, as we are well into writing our User Guide and are starting the Implementation Guide.

The discussion was centered around three main points, in my opinion, though there was certainly a lot going on. Feel free to comment and add things if I’ve missed anything.

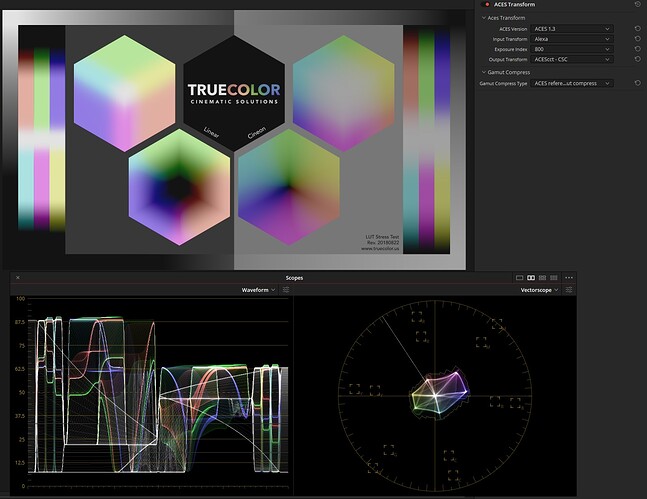

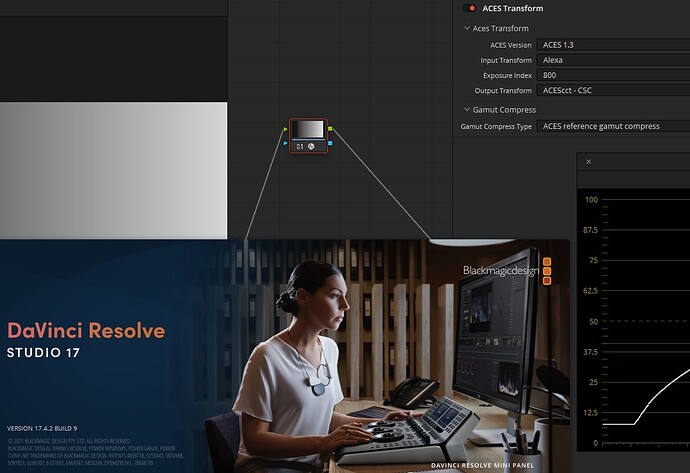

- Should VFX Pulls have the gamut compression baked in?

- Should EXRs or other scene-referred media with the gamut compression “baked in” be called something other than AP0?

- Is the default of “always on” in the viewing pipeline too heavy handed?

1 - VFX Pulls

This was definitely a topic of much discussion early in the group. We talked about it as early as April 1st, and the notes state that the group agreed not to bake the compression into the EXR VFX pulls for a few main reasons:

- VFX needs the flexibility of where to apply it in their comp

- We want the inverse to be a last resort, not a norm

- If you do need to invert the transform on a half-float EXR you receive, you might get quantization issues

- It keeps the ACES principle of maintaining the largest data set possible for the longest amount of time
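
To illustrate the quantization point, here is a minimal sketch of what happens when a compressed value is stored as half float and then inverted. It is not the actual gamut compression transform: it uses a generic power-curve compressor on a single "distance" value, the `THRESHOLD`, `LIMIT` and `POWER` values are made up for illustration, and it quantizes the compressed distance directly rather than real RGB pixels. All function names are hypothetical.

```python
import struct

# All three parameters are illustrative assumptions, not published values.
THRESHOLD = 0.8  # distances below this pass through unchanged
LIMIT = 1.2      # distance that is mapped exactly to 1.0
POWER = 1.2      # shapes the shoulder of the curve

# Scale chosen so that compress(LIMIT) == 1.0.
SCALE = (LIMIT - THRESHOLD) / (
    (((1.0 - THRESHOLD) / (LIMIT - THRESHOLD)) ** -POWER - 1.0) ** (1.0 / POWER)
)


def compress(d):
    """Power-curve compression of a distance value above THRESHOLD."""
    if d < THRESHOLD:
        return d
    x = (d - THRESHOLD) / SCALE
    return THRESHOLD + SCALE * x / (1.0 + x ** POWER) ** (1.0 / POWER)


def uncompress(d):
    """Analytic inverse of compress(), valid below the curve's asymptote."""
    if d < THRESHOLD or d >= THRESHOLD + SCALE:
        return d
    xp = ((d - THRESHOLD) / SCALE) ** POWER
    return THRESHOLD + SCALE * (xp / (1.0 - xp)) ** (1.0 / POWER)


def to_half(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def round_trip_errors(d):
    """Return (float64 error, half-float error) after compress -> uncompress."""
    c = compress(d)
    full = abs(uncompress(c) - d)
    half = abs(uncompress(to_half(c)) - d)
    return full, half


samples = [1.0 + 0.25 * i for i in range(37)]  # distances from 1.0 to 10.0
worst_full = max(round_trip_errors(d)[0] for d in samples)
worst_half = max(round_trip_errors(d)[1] for d in samples)
print(f"worst float64 round-trip error:    {worst_full:.3e}")
print(f"worst half-float round-trip error: {worst_half:.3e}")
```

The curve flattens out as values approach its asymptote, so the inverse is steep there, and the small rounding step of a half float gets amplified when you undo the compression. That is the reason to treat the inverse as a last resort.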

The main note here is that while we are suggesting that ACES shows almost always have the gamut compression ON in their VIEWING pipeline, we maintain the ACES philosophy of only altering the scene-referred files as late as possible (either on return from VFX, or in finishing for non-VFX shots). This gives VFX and finishing the flexibility to define their workflows as they see fit.

The downside to this, brought up in today’s meeting, is that there is added work and complexity for both VFX and finishing. It’s possible that this could be simplified by “baking” the gamut compression into VFX pulls. However, @matthias.scharfenber, @nick and I don’t believe this benefit outweighs the points made above. We are open to dissent here!

2 - Is it really AP0? Should we call it something else?

If the files returned to finishing from VFX have the gamut compression applied, they are no longer a true “round trip” of the full AP0 files as delivered to VFX. The architecture group discussed making the gamut compression part of a colorspace (AP2, anyone?) but decided against it for a couple of reasons:

- We wanted to be as “light touch” as possible on the overall ACES system, both to reduce implementation thrash in the short term and to make the gamut compression easy to drop in a wonderful, perfect future where improved input and output transforms mean we no longer need it.

- Keeping it as an individual operator allows flexibility in VFX and finishing.

Also, though the files have been modified from their original AP0 values, this is common in VFX and finishing. We compared the gamut compression transform to, for instance, a despill operation. It definitely changes pixel values, but for the better, and you’d almost never want to undo that operation.

3 - Are we being too “heavy handed” in our “always on” recommendation?

This is definitely a possibility! We would love input here. However, when we started working through the production pipeline, we quickly realized that if the compression is used on set and in dailies, post production would have to match. And without AMF fully implemented to easily tell whether the compression was applied or not, we decided the easiest approach would be to make it opt-out - so “on” by default.

We also recognized that if used on set, it was likely to always be on - even if you have a DIT on set, you are unlikely to have time to / want to quickly turn the gamut compression on and off based on what you are shooting. The algorithm is designed to target out of gamut colors and only minimally affect those inside AP1 - but the visual consequences of NOT having the compression on are large and immediately apparent to everyone - and hard for the DP to work around. The goal is to instill confidence in ACES on set, so we have a greater chance of a solid color pipeline throughout production.

This turned out to be longer than I anticipated - but here we are. We appreciated the great discussion in the meeting today - tagging in @KevinJW, @joseph, @SeanCooper, @jzp and @ptr for feedback on my summary + thoughts.