Hey guys,

I’m trying to learn ACEScg and would like to incorporate it into my color map processing in Nuke.

Just read this awesome thread:

I have a few questions:

- Is it correct to say that the OCIO-ACEScg color space will make my “color grading, highlight and shadow recovery” “easier and more doable” because ACEScg gives me a wider gamut than sRGB?

  Or should I just stay in Nuke’s default linear sRGB (nuke-default) color space, because OCIO-ACEScg does not give a significant visible benefit for shadow and highlight recovery on color maps?

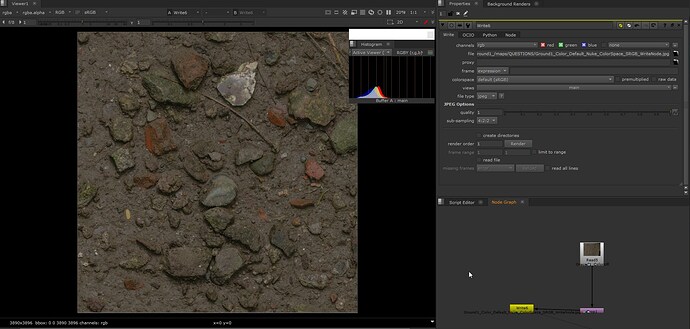

The color maps that I’m processing in Nuke were exported straight from the Reality Capture scanning program, like this color map scan of muddy ground:

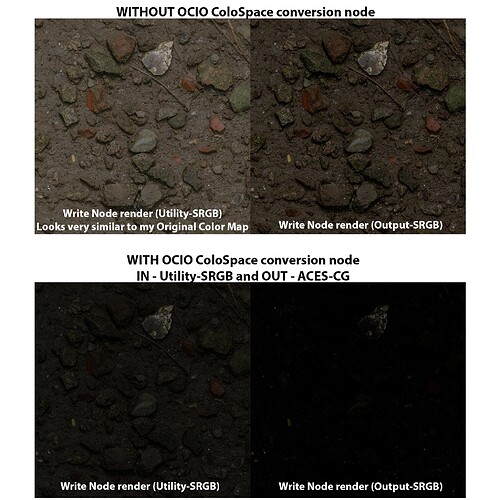

This makes me wonder: most people say that using the OCIO-ACEScg color space will make the color map darker and “less” saturated, BUT why does mine look exactly the same in the JPG from a Write node when using the OCIO-ACEScg color space? Here is the JPG render from a Write node using Utility-sRGB:

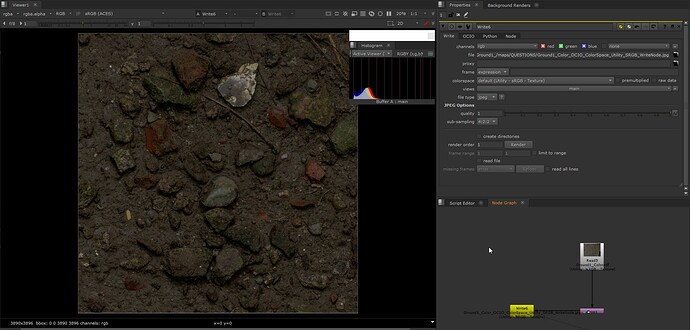

Maybe what they meant is the “viewer transform”? My viewer is indeed much darker and less saturated when using the OCIO-ACEScg color space versus Nuke’s normal sRGB.
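To check my own understanding of why the written JPG might look identical, here is a minimal sketch in plain Python (not Nuke code). It assumes the Read node decodes sRGB and converts into the ACEScg working space, and the Write node applies the exact inverse. The matrix is the commonly quoted approximate linear-sRGB to ACEScg matrix, and the sample pixel and function names are made up for illustration.

```python
# Approximate linear-sRGB (D65) -> ACEScg (AP1) matrix (assumed values,
# as commonly quoted; each row sums to ~1 so neutral grays stay neutral).
SRGB_TO_ACESCG = [
    [0.613097, 0.339523, 0.047379],
    [0.070194, 0.916354, 0.013452],
    [0.020616, 0.109570, 0.869815],
]

def srgb_decode(v):
    """sRGB-encoded value (0-1) -> linear light."""
    return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4

def srgb_encode(v):
    """Linear light -> sRGB-encoded value (0-1)."""
    return v * 12.92 if v <= 0.0031308 else 1.055 * v ** (1 / 2.4) - 0.055

def mat_vec(m, v):
    """Multiply a 3x3 matrix by an RGB triplet."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]

def invert_3x3(m):
    """Invert a 3x3 matrix via the adjugate."""
    a, b, c = m[0]
    d, e, f = m[1]
    g, h, i = m[2]
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]

ACESCG_TO_SRGB = invert_3x3(SRGB_TO_ACESCG)

def read_as_acescg(srgb_pixel):
    """Hypothetical stand-in for a Read node set to an sRGB texture space."""
    linear = [srgb_decode(v) for v in srgb_pixel]
    return mat_vec(SRGB_TO_ACESCG, linear)

def write_as_srgb(acescg_pixel):
    """Hypothetical stand-in for a Write node set to the same sRGB space."""
    linear = mat_vec(ACESCG_TO_SRGB, acescg_pixel)
    return [srgb_encode(v) for v in linear]

mud_srgb = [0.42, 0.33, 0.25]          # made-up muddy-ground pixel
working = read_as_acescg(mud_srgb)     # values Nuke would grade on
round_trip = write_as_srgb(working)    # values that end up in the JPG

print("working-space values:", working)
print("round-trip values:   ", round_trip)
```

If that assumption holds, the working-space numbers differ from the input but the round trip returns the original pixel, so only the viewer transform would change how the image looks on screen.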

Viewer in Nuke-Default colorspace:

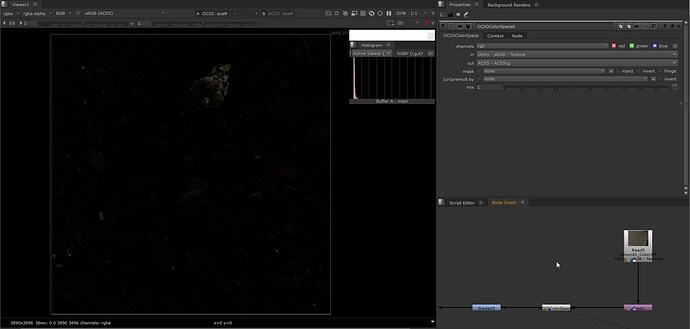

Viewer on using OCIO-ACESCG:

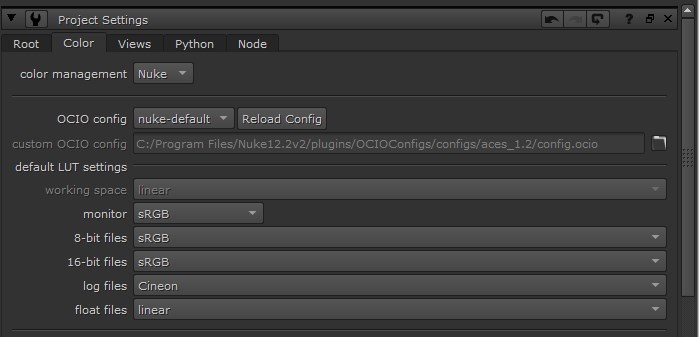

Here are my project settings using Nuke-default:

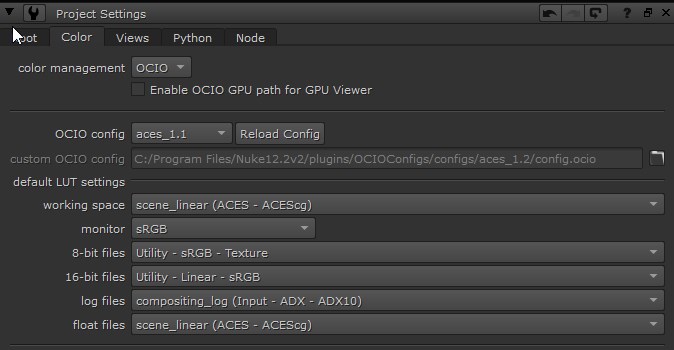

And for OCIO-ACEScg:

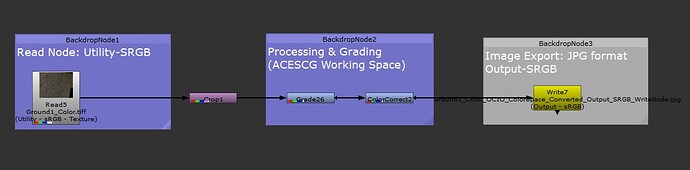

- For the conversions, do I need to use the “OCIO ColorSpace node” to convert my input sRGB color maps (from Reality Capture) into ACEScg before I can start to process and color grade them? If I use the OCIO ColorSpace node, my color map becomes extremely dark and unworkable in the Viewer, as in the node graph screenshot below:

I cannot find any thread that directly answers the question: do I need to use the “OCIO ColorSpace node” to process (grade, adjust exposure, etc.) my color map inside Nuke?

And by the way, I’m referring to the raw color map itself (JPG or Camera Raw formats) and not the actual 3D scene render outputs from Maya or 3ds Max that need post-processing later on.

Is that the proper workflow in ACEScg? At first, I thought that Nuke does the conversion automatically once I switch to the OCIO-ACEScg color space and set Utility-sRGB on the “Read Node” of the color map.

So does using a separate OCIO ColorSpace node “double” the conversion, which results in a very dark image? Or maybe my color map is just too dark and is outside the proper albedo range for a PBR workflow (I also need to look up the correct albedo range values, as I always read about them in other threads but no one posts the “actual” range for people to see).
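To illustrate what I mean by a “double” conversion, here is a minimal sketch in plain Python (not Nuke code). It assumes the Read node already removes the sRGB encoding once, and that an extra OCIO ColorSpace node set to the same input space removes it a second time. It only shows the transfer-function part and ignores the gamut matrix; the sample value is arbitrary.

```python
def srgb_decode(v):
    """sRGB-encoded value (0-1) -> linear light."""
    return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4

encoded = 0.5                      # arbitrary mid-tone value from a JPG
decoded_once = srgb_decode(encoded)        # what the Read node alone would give
decoded_twice = srgb_decode(decoded_once)  # Read node + redundant second decode

print("encoded:      ", encoded)
print("decoded once: ", round(decoded_once, 4))
print("decoded twice:", round(decoded_twice, 4))
```

The second decode pushes an already linear mid-tone far down toward black, which would match the “super dark” result I see in the Viewer.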

Thanks!

I am supposed to write some articles on these topics soon for the ACES Knowledge Base. I will certainly use our conversation to provide great examples. Have a nice day !