I can only speak from my (perhaps somewhat limited) understanding, so corrections happily accepted:

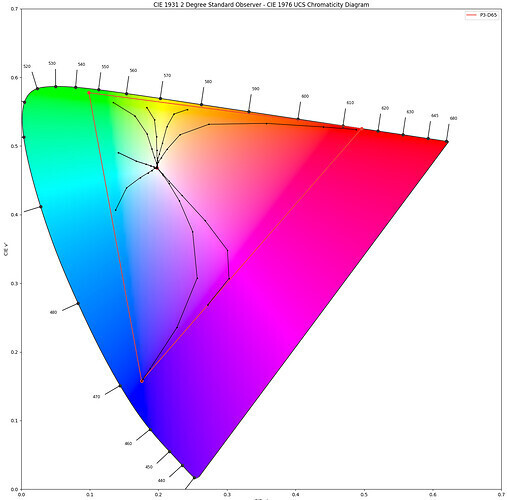

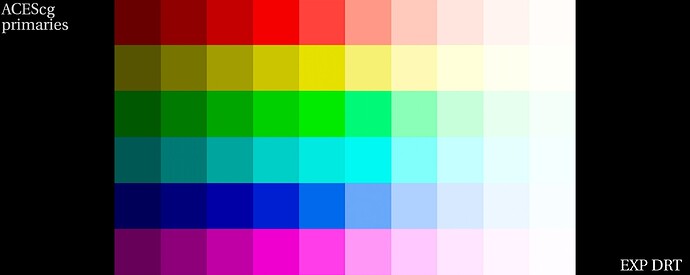

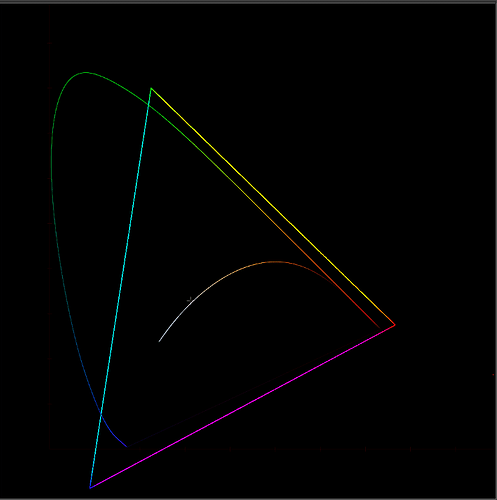

-Chromaticity-preserving (I think this was talked about some in meeting #6) means, for example, that on a CIE xy plot a color occupies the same point in the chart regardless of its luminance. In 8-bit values, for example, 1,0,0 and 255,0,0 would occupy the same spot (fully saturated red). One is higher luminance, but they are the same color (think also of 0,0,0 (black) and 255,255,255 (white), which occupy the same point; strictly speaking, pure black has no luminance, so its chromaticity is undefined and it is only plotted at the white point by convention). 255,128,128, I believe, could be considered “hue-preserving”, as it moves red towards the achromatic axis (grey/white) without influencing it towards green or blue, but it no longer occupies the same spot on the chart (because it is no longer fully red).
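To make that concrete, here is a minimal sketch that converts an RGB triplet to CIE xy. It assumes Rec.709/sRGB primaries with a D65 white point and treats the 8-bit values as linear after dividing by 255 (for single-channel colors the transfer function does not change the result; for 255,128,128 it would shift the exact position somewhat). `xy_chromaticity` is a name made up for this illustration.

```python
# Linear Rec.709/sRGB RGB -> CIE XYZ (D65) matrix.
# Assumption: the working space is Rec.709; another space would need its own matrix.
RGB_TO_XYZ = (
    (0.4124564, 0.3575761, 0.1804375),
    (0.2126729, 0.7151522, 0.0721750),
    (0.0193339, 0.1191920, 0.9503041),
)


def xy_chromaticity(rgb):
    """Return the CIE xy chromaticity of a linear RGB triplet, or None for pure black."""
    X, Y, Z = (sum(m * c for m, c in zip(row, rgb)) for row in RGB_TO_XYZ)
    total = X + Y + Z
    if total == 0:
        # 0/0: chromaticity is undefined when there is no light at all.
        return None
    return (X / total, Y / total)


dim_red = xy_chromaticity((1 / 255, 0.0, 0.0))
full_red = xy_chromaticity((1.0, 0.0, 0.0))
pink = xy_chromaticity((1.0, 128 / 255, 128 / 255))

print("dim red :", dim_red)
print("full red:", full_red)
print("pink    :", pink)
```

The two reds land on the same xy point because scaling all three channels by the same factor scales X, Y and Z equally, and that factor cancels in the division. The pink value lands somewhere between the red primary and the white point.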

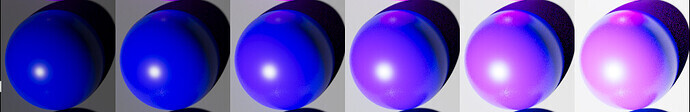

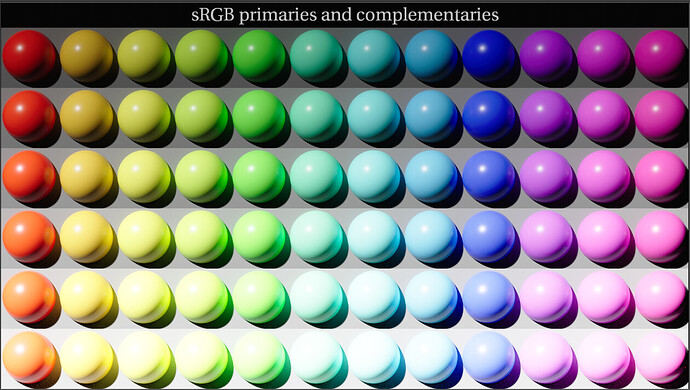

-“Filmic”: in the context of this conversation, I am mainly referring to the “path to white” and how colors “blow out”.

-“Realistic” meaning what happens in nature, in the natural world. Not in a photo-chemical process, or inside a computer, or how a given display technology works, but what is physically occurring.

-Tonescale I think of more specifically as “luminance scale”: how you compress scene-referred dynamic range down into a display’s dynamic range.
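As a rough sketch of a tonescale applied purely as a luminance scale: compute luminance, compress it with a curve, then scale all three channels by the same ratio so the chromaticity does not move. The simple `y / (1 + y)` curve is only a stand-in chosen for brevity, not the curve anyone in this discussion is proposing, and the Rec.709 luminance weights and the function names are assumptions for the example.

```python
# Rec.709 luminance weights (assumption: the working space uses Rec.709 primaries).
LUMA_WEIGHTS = (0.2126, 0.7152, 0.0722)


def luminance(rgb):
    """Relative luminance of a linear RGB triplet."""
    return sum(w * c for w, c in zip(LUMA_WEIGHTS, rgb))


def tonescale(y):
    """Stand-in compression curve: maps [0, inf) scene luminance into [0, 1)."""
    return y / (1.0 + y)


def apply_tonescale(rgb):
    """Compress luminance only, scaling R, G and B by the same factor."""
    y = luminance(rgb)
    if y <= 0:
        return (0.0, 0.0, 0.0)
    scale = tonescale(y) / y
    return tuple(c * scale for c in rgb)


scene = (4.0, 2.0, 1.0)  # hypothetical scene-referred value, well above display range
display = apply_tonescale(scene)
print(display, luminance(display))
```

Because every channel is multiplied by the same factor, the channel ratios (and so the chromaticity) are unchanged. Note that the output luminance stays below 1.0, but individual channels of a very saturated color can still exceed 1.0, so this step alone does not guarantee the result fits the display.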

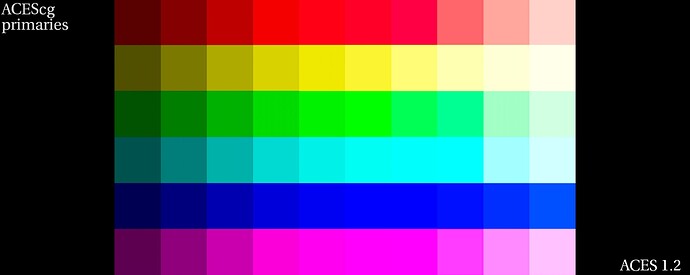

It depends on what the display’s gamut is, but yes, practically speaking some kind of gamut compression will need to happen to get to the display color space.

It can’t. The overall transform will never be truly chromaticity-preserving because the source gamut is larger than the display gamut. I don’t know if there is such a thing as “relative chromaticity”, but theoretically something that is fully saturated blue in, say, P3 space would still be fully saturated blue when converted to 709, and likewise something that was “half saturated” (128,128,255) in one space would be that way in the other. There are undoubtedly many reasons why a simple transform like that wouldn’t hold up visually, but that would be the basic idea. I think many of us are on board with it being hue-preserving, though; that blue shouldn’t become purple.
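A small sketch of that “relative chromaticity” idea, with some assumptions: the same RGB triplet is simply reinterpreted in each space, P3 here means P3 with a D65 white point, and `relative_saturation` is a made-up measure (1 minus min/max of the channels) used only for illustration. The comparison uses red rather than blue because, as far as I know, P3 and Rec.709 share the same blue primary, so blue would show no difference.

```python
# Linear RGB -> CIE XYZ matrices (D65 white point in both cases).
REC709_TO_XYZ = (
    (0.4124564, 0.3575761, 0.1804375),
    (0.2126729, 0.7151522, 0.0721750),
    (0.0193339, 0.1191920, 0.9503041),
)
P3D65_TO_XYZ = (
    (0.4865709, 0.2656677, 0.1982173),
    (0.2289746, 0.6917385, 0.0792869),
    (0.0000000, 0.0451134, 1.0439444),
)


def xy(rgb, matrix):
    """Absolute CIE xy chromaticity of a linear RGB triplet in the given space."""
    X, Y, Z = (sum(m * c for m, c in zip(row, rgb)) for row in matrix)
    total = X + Y + Z
    return None if total == 0 else (X / total, Y / total)


def relative_saturation(rgb):
    """Hypothetical measure: 0 for grey, 1 for a color on the gamut boundary."""
    top = max(rgb)
    return 0.0 if top == 0 else 1.0 - min(rgb) / top


red = (1.0, 0.0, 0.0)
half_blue = (128 / 255, 128 / 255, 1.0)

# Same triplet, different absolute chromaticity depending on the space.
print("red in 709:", xy(red, REC709_TO_XYZ))
print("red in P3 :", xy(red, P3D65_TO_XYZ))
# The relative measure depends only on the triplet, not on the space.
print("relative saturation of half blue:", relative_saturation(half_blue))
```

Reusing the triplet keeps “fully saturated” fully saturated in the target space, but the absolute chromaticity moves: the red primary sits at roughly (0.640, 0.330) in Rec.709 and (0.680, 0.320) in P3. That is why such a transform is not chromaticity-preserving in the strict sense.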

That was not entirely intentional. The image was created with a 3D LUT, and when creating the LUT I had to specify an exposure range based on peak luminance percentage. The first one I created (using the max value) was incredibly dark. The sample image I posted was a second attempt using a value of 800% peak luminance, a figure that came to mind from some HDR transforms but may not have been correct. It produced a reasonable-looking image and at least got across the point I was trying to convey, so I uploaded it without doing much comparison to other renders.

The difference is that the bulbs are white in the raw camera exposure; there is no color information to begin with (the camera never saw them as red, so they will never clip to red). The faces are red in the camera exposure. Now we’re dealing with the limits of a camera’s (and/or recording medium’s) dynamic range. An “idealized” camera would have recorded the light bulbs as high-luminance red also, but in this case it was beyond the capabilities of the camera, so that information is lost before it ever gets to us.
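A toy illustration of that loss, under heavy simplification: each channel is clipped independently at a maximum recordable value, and white balance, noise and sensor response are ignored. The scene values and the `sensor_clip` helper are hypothetical.

```python
def sensor_clip(scene_rgb, max_value=1.0):
    """Clip each channel independently at the sensor's maximum recordable value."""
    return tuple(min(c, max_value) for c in scene_rgb)


# Hypothetical scene-linear values: a very bright red bulb and a red-lit face.
bulb = (40.0, 4.0, 3.0)
face = (0.6, 0.2, 0.15)

print("bulb recorded as:", sensor_clip(bulb))
print("face recorded as:", sensor_clip(face))
```

In this sketch the bulb has every channel above the clip point, so all three saturate to the same value and it is recorded as white, while the face stays within range and keeps its red ratios. No later transform can recover the bulb's color from the clipped data.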